A new engineer is debugging a customer support ticket: “order #4421 shows up as ‘Failed’ in the admin tool but the customer received it.” She opens the orders table in DataGrip and finds status TINYINT NOT NULL. The admin tool displays “Failed” when status = 2. The fulfillment service ships when status = 3. The reporting view treats status = 1 as “active.” None of the three definitions are in the schema, and nobody on the team remembers the original mapping; the engineer who designed the table left eight months ago.

Resolving the ticket takes ninety minutes: grep three service codebases, find three different mappings, reconcile them against the actual row’s status = 2, draft the customer email. Every part of that work happens because the integer values aren’t grounded anywhere the database knows about, and the code’s three guesses disagree. The mechanism that would have grounded them in the catalog has existed in every major database engine since the 1990s. The team has just never written it down.

What schema comments are

Schema comments are metadata strings attached directly to database objects: tables, columns, indexes, views. They’re stored in the database catalog and exposed through standard metadata queries.

In PostgreSQL:

| |

In MySQL:

| |

SQL Server uses extended properties, Oracle uses COMMENT ON. The syntax varies. The concept is universal.

Where comments show up

This is the part that makes comments more useful than a wiki page or a README. Because they live in the catalog, they propagate automatically to every tool that reads schema metadata.

Schema dumps. pg_dump and mysqldump include comments in the output. Anyone restoring a backup or reviewing a migration gets the context without looking elsewhere.

ER diagram tools. DBeaver, DataGrip, pgAdmin, MySQL Workbench all render column comments in schema viewers and diagrams. Hover over a column and the description is right there.

information_schema and catalog queries. Any script, tool, or automation that queries metadata picks up comments for free.

In PostgreSQL:

| |

In MySQL:

| |

ORM introspection. Many ORMs and code generators that reverse-engineer models from databases will pull comments into generated code as docstrings or annotations.

Monitoring and alerting tools. When an alert fires about a table or column, the comment provides immediate context without requiring someone to look up external documentation.

CI pipelines and schema validation. Linting tools can check whether new tables and columns have comments, the same way code linters check for function docstrings.

The point is that comments flow through the entire toolchain. You write them once, in one place, and every tool that reads the schema benefits, without configuration, without plugins, without maintaining anything separately.

What goes wrong without them

The absence of comments creates a category of problems that compounds over time. Onboarding takes longer than it should: every new engineer who encounters status TINYINT has to ask someone or investigate. Multiply that by every ambiguous column in every table across every service, and it stops being a one-time cost. It’s paid every time someone new touches the schema.

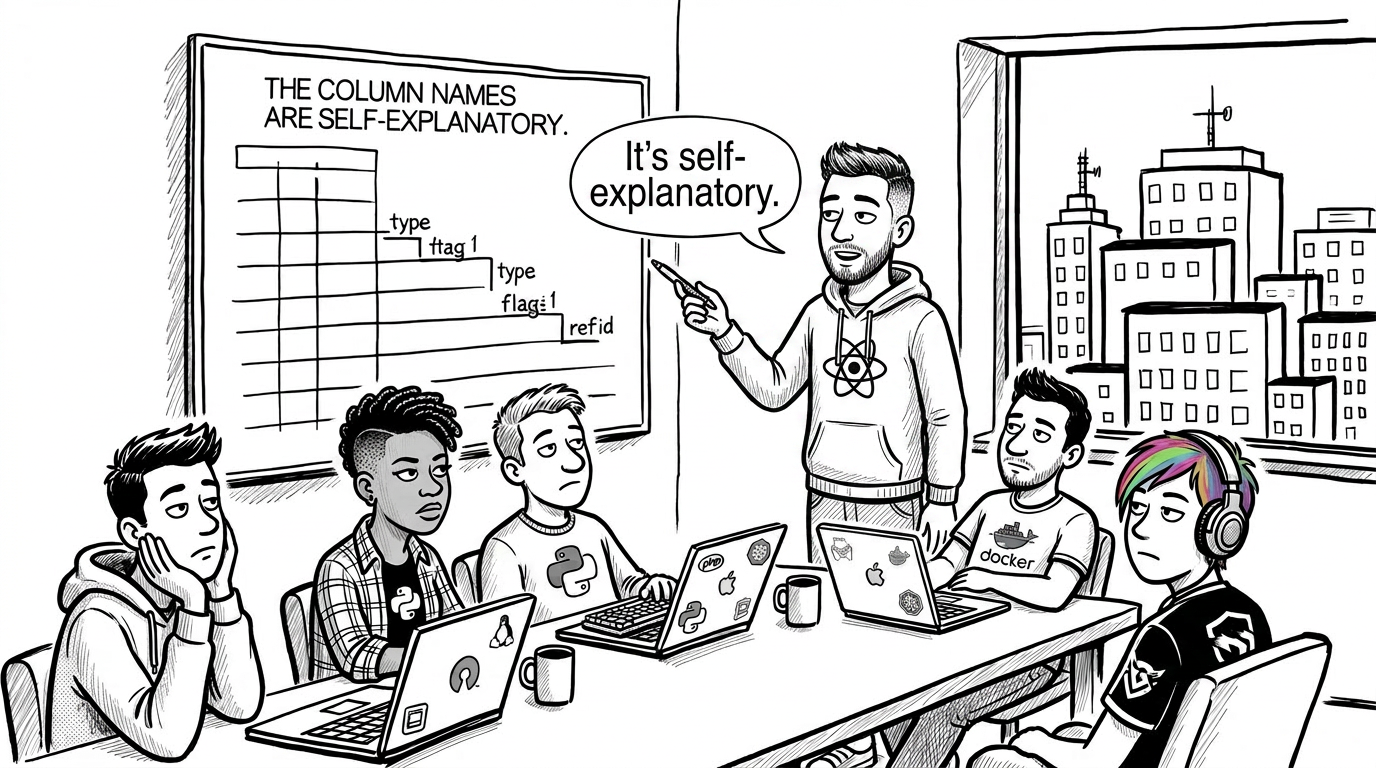

Debugging becomes archaeology. When something breaks at 2am and you’re looking at a table with columns named type, flag, ref_id, and config, all with no comments, you’re not debugging. You’re reverse-engineering institutional knowledge that should have been written down.

Schema reviews lose context too. A migration that adds is_processed TINYINT(1) DEFAULT 0 looks fine syntactically; processed by what, when, and is it idempotent? A comment turns the review from “does this look right?” into “does this match what we agreed on?”

External documentation drifts the moment someone adds a column or changes a status code without updating the doc. Comments live in the schema itself. They move with ALTER TABLE. They show up in every dump. They can’t be in a different repo than the data they describe.

What to comment

Not everything needs a comment. A column called created_at TIMESTAMP NOT NULL is self-documenting. Focus on the columns where the schema doesn’t tell the whole story:

- Status and type columns. What do the values mean?

1=active, 2=suspended, 3=closed. - Nullable columns where NULL has meaning. Does NULL mean “not set,” “not applicable,” or “ongoing”?

- ID columns that reference other tables without foreign keys.

owner_id BIGINT COMMENT 'References users.id'. - Columns with non-obvious units.

total_centsvstotal(dollars? cents? units?),duration(seconds? milliseconds? minutes?). - Columns with business logic encoded in values.

plan_type TINYINT COMMENT '1=free, 2=starter, 3=pro, 4=enterprise'. - Tables themselves. What does this table represent? One row per what?

A good table comment answers: “What is one row in this table?” A good column comment answers: “What does this value mean when I see it in a query result?”

Adding comments to an existing schema

This is the part that makes the cost-benefit ratio hard to argue against.

In PostgreSQL, COMMENT ON is a catalog-only operation. It takes no locks on the table. It doesn’t rewrite data. It doesn’t block reads or writes. On a table with 500 million rows, it completes in milliseconds.

| |

In MySQL, ALTER TABLE ... MODIFY COLUMN with a comment is a metadata-only change in most cases with InnoDB online DDL, but behavior depends on the version and what else is in the MODIFY. For comment-only changes on MySQL 8.0+, the ALGORITHM=INSTANT path applies. On older versions or when combined with type changes, it may trigger a table rebuild.

| |

COMMENT ON is a catalog-only update: no table lock, no rewrite, completes in milliseconds even on tables with hundreds of millions of rows. In MySQL 8.0+, comment-only changes go through the ALGORITHM=INSTANT path. This is about as safe as database changes get.The risk profile is as close to zero as database changes get. There’s no reason not to do this incrementally; comment a few columns every time you touch a table. Over time, the schema becomes self-documenting.

Generating documentation from comments

Because comments live in the catalog, tools can extract them and produce browsable documentation automatically. A few worth knowing:

SchemaSpy. Java-based, generates interactive HTML with ER diagrams. Reads table and column comments from the catalog. Run it against your database and you get a full documentation site with relationships, comments, and diagrams; no manual authoring.

tbls. A single Go binary, CI-friendly. Outputs Markdown, PlantUML, or SVG. Reads comments directly from the schema. Designed to run in pipelines: generate docs on every migration, commit them to the repo, and they stay in sync.

DataGrip / DBeaver. Not doc generators per se, but both render column comments inline in their schema browsers. For teams already using these, comments become immediately visible without any extra tooling.

The pattern is the same across all of them: comments in the schema become descriptions in the output. No separate documentation source to maintain. The schema is the source.

For teams that want generated docs as part of CI, tbls is the lowest-friction option: add it to your pipeline, point it at the database, and commit the Markdown output. Every migration that adds or changes a comment updates the docs automatically.

The RAG surface most teams forget

Schema-reading assistants (Copilot, MCP-backed agents, text-to-SQL tools, retrieval-augmented coding models) start with the same catalog every human does: information_schema, pg_description, \d+. If the catalog contains only column names and types, that’s the context the model gets. A column named status TINYINT is ambiguous to the model for the same reason it’s ambiguous to a new engineer, except the model won’t ping the on-call channel; it will generate a plausible query and hand it back. Published studies on text-to-SQL accuracy have put the lift from adding column-level semantic descriptions as high as ~27%, not because models are bad at reading schemas, but because most schemas don’t tell them enough to read.

Comments are the one catalog field that can carry business meaning. Every other metadata row is mechanical: type, nullability, length, constraint name. A comment on orders.status ('1=pending, 2=processing, 3=shipped, 4=delivered, 5=cancelled') turns a blind guess into a grounded answer for any tool that reads the catalog, human or otherwise. It’s the cheapest RAG context a team can ship: no vector store, no separate doc pipeline, no sync problem; the description travels with the column it describes. If the team is rolling out database-aware AI assistants and hasn’t commented the ambiguous columns first, the assistants are working from less context than a new hire would get on day one.

Making it stick

The challenge isn’t the mechanism, it’s the habit. Comments rot just like any other documentation if they’re not maintained. A few things help:

Comment at creation time. If the comment is part of the CREATE TABLE or migration, it happens naturally. Retrofitting is always harder than including it from the start.

Add it to your migration template. If your team uses a migration tool, add comment fields to the template. Make the absence visible rather than the default.

Lint for it. A simple CI check can flag tables or columns without comments. It doesn’t have to block merges - even a warning changes behavior over time.

Treat comments as part of the schema, not as documentation. When a column’s semantics change, the comment changes in the same migration. Same PR, same review.

The trade-offs

Comments aren’t a substitute for all documentation. They’re good at describing what a column or table is, not how a multi-table workflow operates. System-level documentation (data flow diagrams, service interaction maps, runbooks) still belongs somewhere else.

'1=active, 2=inactive' when the code now also uses 3=suspended will send someone down the wrong path. Treat comment updates as part of the migration, not a follow-up task.There’s also a maintenance cost. Stale comments are worse than no comments because they actively mislead. A column that says '1=active, 2=inactive' when the code was updated to also use 3=suspended will send someone down the wrong path. The mitigation is treating comments as schema, not as a nice-to-have; they change when the schema changes.

For teams with hundreds of tables and thousands of columns, retrofitting comments is a slow process. It’s not a weekend project. It’s an incremental habit that pays off over months.

Where to start

Start with the columns that make people ask questions. The status column where 0/1/2 means something nobody can quote from memory. The nullable date that means “ongoing” in one place and “missing” in another. The foreign key with no foreign key. Those are the columns where a one-line COMMENT ON recovers more institutional knowledge per character than any other change a schema can absorb.