created_at, and let a background service manage boundaries based on observed growth. Queries keep using the keys they already have, partition pruning works automatically, and the partition column never leaks into application code. The same “service watches and adjusts” pattern applies to hash and list partitioning with different operations.The orders dashboard started loading slowly the week after the partitioning deploy, and the team’s first instinct is to blame the new index strategy. The actual culprit shows up in EXPLAIN: thirty-six lines of Partitions: orders_p2025_01, orders_p2025_02, ... on a query that’s just SELECT * FROM orders WHERE id = 12345. The plan reads every partition because the WHERE clause doesn’t include created_at, and created_at is the partition key. The lookup that used to be one index probe is now thirty-six.

The proposed fix is the one that always gets proposed: add created_at >= '2024-11-01' to the dashboard query. It works. The plan drops to one partition. Then the audit page does the same thing, then the admin tool, then the migration script. Three months later there’s an internal lint rule that flags any SELECT FROM orders without a date filter, and code reviews include “did you add the partition filter?” as a standard check. The partition key has stopped being a storage decision and become a contract every query has to honor. Forgetting still produces no error. Just slowness.

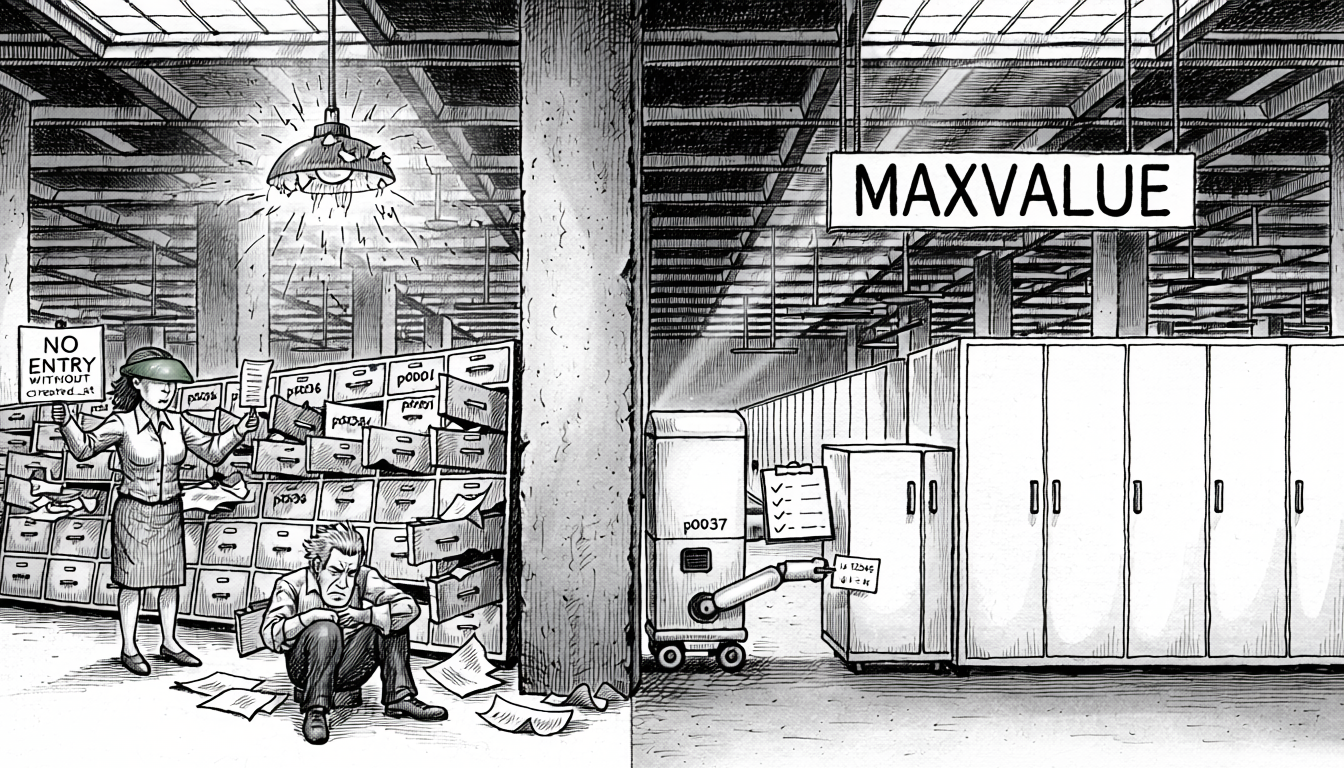

The partition key problem

Both PostgreSQL and MySQL require the partition key to be part of any primary key or unique constraint on the table. That rule exists for correctness: if the primary key didn’t include the partition key, the database couldn’t enforce uniqueness without scanning every partition.

The consequence is that if you want to partition by created_at, you can’t just have PRIMARY KEY (id) anymore. You need PRIMARY KEY (id, created_at). The date column is now part of the primary key whether your application needed it to be or not.

The more subtle cost is that id is no longer unique in the eyes of the database. Uniqueness is enforced on the tuple (id, created_at): the database will cheerfully accept two rows with the same id as long as they have different timestamps. The application probably still treats id as unique, but nothing in the schema guarantees it. And you can’t recover the guarantee with a separate UNIQUE (id) constraint: both MySQL and PostgreSQL require every unique constraint on a partitioned table to include the partition key columns. The uniqueness property has effectively been traded away.

This isn’t purely cosmetic; it changes the query plans the optimizer is willing to generate:

- With

PRIMARY KEY (id),WHERE id = 1is a constant-time lookup. MySQL’s EXPLAIN shows this as theconstaccess type; the optimizer knows exactly one row matches and the executor stops after finding it. Joins onidareeq_ref, the fastest join access type. - With

PRIMARY KEY (id, created_at), the same query becomes areflookup: a prefix scan on the leftmost index column that could, as far as the database is concerned, return multiple rows. Joins that used to beeq_refbecomeref. Cardinality estimates fall back to index statistics instead of the guaranteed “one row” assumption, which can push the optimizer toward worse plans further up the query tree.

To get the old const plan back, every lookup has to spell out the full primary key:

| |

That’s the same leakage as partition pruning, from a different angle: the partition key has forced its way into queries that had nothing to do with dates, first to get pruning and now to get single-row access.

| |

At this point everything still works. The table accepts inserts, queries return correct results, and the partition boundaries exist. The problem shows up the first time someone runs a query that doesn’t include created_at in the WHERE clause.

Partition pruning only works if you ask for it

Partition pruning is the optimization that makes partitioning worth doing. When a query’s WHERE clause restricts the partition key, the database can skip partitions that can’t possibly match. A query for last week’s orders only reads the one or two partitions that contain last week’s data.

That optimization depends on the partition key appearing in the WHERE clause. A query that filters on anything else doesn’t get pruned; it scans every partition.

| |

The first query is the kind of lookup that happens constantly: fetch an order by its primary key. On a non-partitioned table, it’s a single index seek. On a partitioned table where the pruning key isn’t in the WHERE clause, it’s a separate index probe against every partition: 36 index lookups instead of one. Still fast in absolute terms, but much worse than the non-partitioned version, which is the opposite of why partitioning was introduced.

The “fix” teams usually land on is to add the partition key to every query that touches the table. That’s a leaky abstraction. A storage decision is now a contract with every caller: new code has to remember the partition filter, old code has to be audited, the ORM has to be configured around it.

EXPLAIN that nobody reads until a dashboard times out. Most teams discover the failure by reviewing slow-query logs after a partition deploy, not from anything the database surfaces during query execution.Static partition boundaries don’t age well

The other thing that tends to go wrong is hardcoding partition boundaries at table creation time. The initial layout reflects whatever the team’s growth projection looked like at that moment. Six months later the traffic pattern has changed, some partitions are 10x larger than others, and the p_future catch-all partition is holding half the table.

| |

Manually splitting and rebalancing partitions is operational work nobody wants to own. It requires scheduling maintenance windows, running ALTER TABLE ... REORGANIZE PARTITION against tables that might be hundreds of gigabytes, coordinating with application teams, and not making a mistake. It tends not to happen until there’s a performance incident, and at that point the fix is expensive.

The shape of the better approach

The primary key already exists. For tables using BIGINT AUTO_INCREMENT, it’s monotonically increasing: newer rows have larger IDs. That’s the property range partitioning needs. The primary key is the partition key.

| |

Every query that filters by id (which is most of them) gets partition pruning for free, with no changes to application code. Range queries by ID prune across a small number of partitions. Point lookups prune to exactly one. The primary key is already in every WHERE clause that matters, because it’s the primary key.

The trade-off is that partition boundaries aren’t directly defined by time anymore, which looks like it breaks time-based retention. In practice this is less of a trade-off than it looks; the point of partitioning often isn’t retention but keeping index sizes manageable, making maintenance operations cheap, and bounding the blast radius of a bad query. When retention is a goal, boundaries can still be chosen to align with time. They just get picked at DDL time by the partitioner service, rather than baked into the schema. See Time-aligned boundaries without a date in the key.

Automating range partition management

Everything up to this point assumes range partitioning: partitions defined by continuous boundaries on an ordered value (an ID range, a date range). The operational work is mechanical: watch the active partition fill up, split the MAXVALUE catch-all into a new bounded partition before that happens, and drop partitions that have fallen past the retention threshold. A small service running on a schedule is enough to keep the layout healthy. The hard part isn’t the logic, it’s doing it safely: running DDL against a large table without locking out writes, handling partial failures, and recovering cleanly if the service crashes mid-operation.

| |

REORGANIZE PARTITION on an empty catch-all partition is fast; there’s nothing to move. If you split the catch-all before any rows land above the split point, the operation is metadata-only. The service’s job is to stay ahead of the write workload: split the catch-all when it’s still small or empty, not when it’s already holding hundreds of millions of rows.

REORGANIZE, surviving a service crash mid-DDL (idempotency on retry), handling concurrent migration tooling that’s also taking ACCESS EXCLUSIVE on the table, and having a clear runbook for “the service has stalled and the catch-all is now 200M rows, what do we do.” Production-grade partitioners usually spend more code on the operations bracket than on the DDL itself.There’s no single right target; it depends on what’s driving the partitioning in the first place. If the goal is keeping the OLTP working set small via retention, the boundary spacing is a business decision: how long does the data need to stay queryable in the hot store, one year, seven years, somewhere in between. If the goal is performance, sizing each partition so its indexes fit comfortably in memory is a reasonable rule of thumb, provided there’s no significant key skew concentrating reads or writes on a single partition. The service can be configured against either target and adjust boundary spacing based on observed growth.

Time-aligned boundaries without a date in the key

Partitioning by id doesn’t mean giving up time-based boundaries; it just means choosing them after the fact. The service can run a single query against the live table to find the ID boundary for any point in time:

| |

That value becomes the upper bound for the next bounded partition. The catch-all stays above it, and future partitions get cut at time-aligned ID boundaries:

| |

The resulting partition p2026_03 contains roughly all orders from March 2026, but created_at never appears in the primary key, never needs to be in any WHERE clause to get pruning, and never leaks into application code. The date column is used once, at boundary-creation time, by the service running the DDL. Queries continue to filter by id and get pruning for free.

Retention works the same way. To drop data older than twelve months, the service runs SELECT MAX(id) FROM orders WHERE created_at < NOW() - INTERVAL 12 MONTH, identifies every partition with an upper bound below that ID, and drops them. The MAXVALUE catch-all is what makes this pattern work; there’s always a place for new rows to land while the service is deciding where to cut next.

What the service looks like

The service itself is small. It runs on a schedule (hourly for high-throughput tables, daily for slower-moving ones) and on each tick it does a handful of things:

- Inventory. Read the current partition layout from the catalog: partition names, upper bounds, and approximate row counts.

- Sizing check. Look at the active partition (the bounded one just below the catch-all). If it’s filled past a configured threshold of the target size, it’s time to cut the next boundary.

- Boundary selection. Pick where to cut. For time-aligned partitions, query the live table for the ID that was current at the next month boundary; that ID becomes the upper bound of the new partition.

- Split. Reorganize the catch-all into a new bounded partition plus a fresh catch-all above it. As long as the catch-all is still empty when the split runs, this is metadata-only.

- Retention pruning. Translate the retention window (e.g. twelve months) into an ID via the same

created_at-to-idlookup, then drop any partition whose upper bound sits below that ID. - Concurrency guard. A single advisory lock or leader election so two instances don’t run DDL against the same table simultaneously.

- Metrics and alerting. Per-partition size and row count, time-since-last-tick, and a clear alert if the active partition starts filling faster than the service is splitting ahead of it.

Run it as a cron job, a Kubernetes CronJob, or a tiny always-on worker; the operational footprint is intentionally small. The bulk of the production-readiness work goes into the concurrency guard, naming conventions, and handling of split failures from lock contention or concurrent DDL. None of that changes what the service does on each tick: a few catalog reads and one DDL statement.

Existing tools and how this pattern differs

The automation itself isn’t new; several tools already manage partition boundaries on a schedule. What differs across approaches is which column the partitioning is done on, and how much of the schema contract that choice locks in.

pg_partman is the widely used partition manager in the PostgreSQL ecosystem. It pre-creates future partitions on a schedule, drops old ones against a retention window, and can migrate non-partitioned tables into partitioned ones in place. Its defaults (and most tutorials written on top of it) assume time-based range partitioning on a timestamp column. That’s the pattern earlier sections argue against: the date column ends up in the primary key and leaks into every query that expects pruning.

TimescaleDB goes further. Its “hypertables” are automatically time-partitioned PostgreSQL tables, with chunk creation, retention, and compression all managed by the extension. It’s the right tool for workloads where every query is genuinely time-scoped: observability, IoT telemetry, append-only event streams. It’s a worse fit for OLTP tables where some queries are time-scoped and others aren’t, because the time column is mandatory and every non-time query pays the same partition-key-in-the-WHERE-clause tax as manual time partitioning.

Vitess includes partition management as part of its broader MySQL sharding solution. Its partitioning conventions are flexible, but most production uses land on the same time-based defaults.

The common thread across all three: the automation layer assumes the partition key is picked in advance (usually a time column) and manages boundaries on top of that assumption.

The approach in this post keeps the automation pattern (small service, pre-split the catch-all, drop behind retention) but changes the key choice. The partition key is the primary key, and time alignment is derived at DDL time via the SELECT MAX(id) WHERE created_at < X lookup. The schema-level contract stays PRIMARY KEY (id); time-based retention still works, computed once per boundary instead of baked into every query.

The trade-off is owning the service. pg_partman is a well-tested extension; a DIY partitioner is real operational surface area: advisory locks, failure recovery, metrics, alerts. The useful question is whether created_at is already a natural filter in every query that matters. If every query is time-scoped by design (observability, telemetry, audit logs, anything time-series) pg_partman or TimescaleDB against a time column is the right answer. The partition key isn’t leaking because it was already there.

If the workload is mixed (some queries filter by date, most don’t) then adding created_at to the PK forces a choice: retrofit every non-time query with a date filter, or eat full partition scans on point lookups. Either option is the overhead, whether it’s already visible as slow queries or just spreading through the codebase as AND created_at >= ? clauses added “for partitioning reasons” on queries that have nothing to do with dates. At that point, owning a small DDL service is cheaper than propagating a partition key through every caller forever.

Automating hash and list partitioning

The same “service watches and adjusts” idea transfers to other partitioning strategies, but the operations change.

Hash partitioning distributes rows across a fixed number of partitions via a hash function. With good cardinality, every partition grows at roughly the same rate; there’s nothing to split based on growth. What there is to monitor is skew: a low-cardinality column or a hot key produces one partition that grows faster than the others, which is the failure mode partitioning was supposed to prevent.

Automation here isn’t about adjusting boundaries. Changing the hash partition count rebuilds the entire table, which isn’t something a background service should trigger. The useful work is detection: track per-partition size and growth, surface skew early, run OPTIMIZE or VACUUM FULL on partitions as they bloat. The service flags problems; a human decides whether to reshape the table. The consequence is that hash partition count is a decision to get right the first time. Over-provisioning (64 partitions when 16 would do today) is cheap insurance against a later full rebuild.

List partitioning maps enumerated values to specific partitions: one partition per region, per significant tenant, etc., with the long tail in a DEFAULT catch-all. The automation problem is partition promotion: when a value in the catch-all grows large enough to deserve its own partition.

| |

Promotion is more expensive than splitting an empty range catch-all (the rows for that tenant have to physically move out of DEFAULT into the new partition) but it can be batched and scheduled during low-traffic windows. Dormant values can be merged back into DEFAULT in the reverse direction to keep the partition count bounded.

What actually belongs in the partition key

The question worth asking before adopting any partitioning scheme: what column is already in every query that matters? In most OLTP systems the answer is the primary key. It sits in every lookup, every join, every foreign-key fetch, so partitioning by it gets pruning for free. The other answers are real but narrower. tenant_id works in a multi-tenant system if every query is tenant-scoped; a date column works if the workload is time-series and every query already filters by date. When those conditions don’t hold, the partition key leaks into application code the first time someone writes a query without it.

The failure mode is partitioning by a column that isn’t already in every query, then retrofitting the query layer to add it. That’s the path that ends with AND created_at >= ? stapled onto queries that have nothing to do with dates, just to avoid a 36-partition scan.