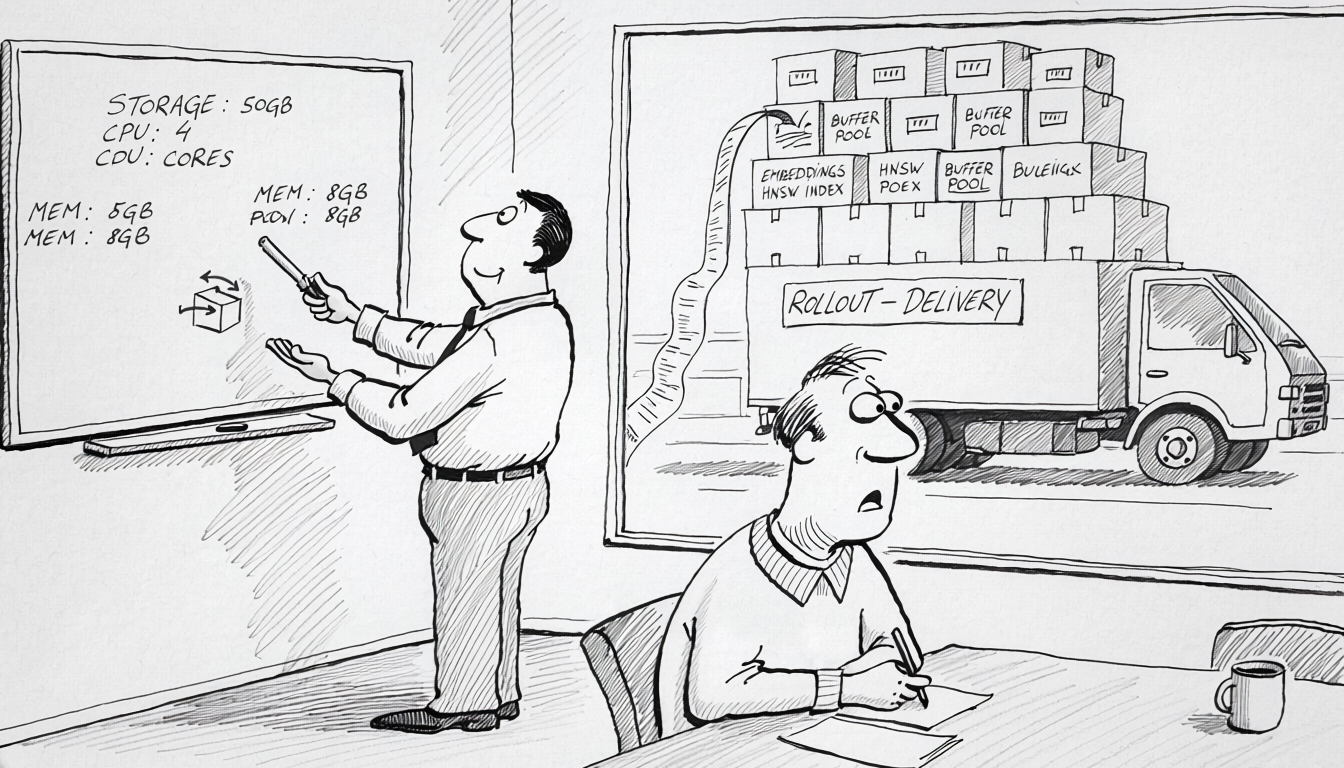

A pgvector user opened issue #666 in September 2024. They had one million records, 512-dimensional vectors, an HNSW index. Cold-cache search took 83 seconds. Warm cache, the same query returned in roughly 100 milliseconds. Three orders of magnitude. The index had not grown. The query had not changed. What changed was that other applications running on the same Postgres had evicted the vector index pages from cache, and the next search read the entire HNSW graph back from disk one block at a time. The capacity plan that approved the AI feature six weeks earlier had a line for storage and a line for CPU. It did not have a line for the OLTP buffer pool quietly fighting the vector index for residency, and losing.

The senior reader’s first response is “throw a bigger instance at it” or “stop running this on the OLTP Postgres and use a dedicated vector DB”. Both are right answers in some configurations. A bigger instance buys headroom for the working set without changing the slope of the cost curve as the corpus grows. A dedicated vector store removes the cache-pollution problem and adds a network hop, a second consistency model, and another piece of infrastructure to back up. The choice being made is between paying the cost on the OLTP Postgres in the form of a bigger instance and worse p95s, or paying it on a second system in the form of more operational surface area. The conversation that didn’t happen is the one about what the cost actually consists of and where it accrues. That is what the rest of this article is.

The four cost categories

Every value in a pgvector vector column takes 4 * dimensions + 8 bytes. A 1536-dimensional embedding from text-embedding-3-small lands at 6,152 bytes. A 50-million-row table that occupied 40 GB on its existing columns grows to roughly 350 GB after the embedding column is added, before any index. Supabase published a case study in August 2023 on 224,482 embeddings where Postgres RAM consumption went from 4 GB on 384-dimensional vectors to 7.5 GB on 1536-dimensional vectors, on the same hardware and the same row count. The dimensions alone were the difference.
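The arithmetic in this paragraph is easy to rerun for a different corpus. The following is a minimal sketch, assuming decimal gigabytes and ignoring tuple, page, and index overhead; the function names are illustrative and not part of pgvector.

```python
def vector_bytes(dimensions: int) -> int:
    """Bytes for one full-precision vector: 4 bytes per dimension plus 8 bytes of overhead."""
    return 4 * dimensions + 8


def embedding_column_gb(rows: int, dimensions: int) -> float:
    """Raw size of the embedding column in decimal GB (no tuple, page, or index overhead)."""
    return rows * vector_bytes(dimensions) / 1e9


if __name__ == "__main__":
    existing_gb = 40  # size of the table before the embedding column, from the example above
    added_gb = embedding_column_gb(50_000_000, 1536)
    print(vector_bytes(1536))                # bytes per 1536-dimensional vector
    print(round(added_gb, 1))                # GB added by the embedding column
    print(round(existing_gb + added_gb, 1))  # GB for the table afterwards, before any index
```

Run against the planned row count and the candidate model's dimensions, this gives the storage line item before the feature ships rather than after.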

Vector index builds cost CPU at a scale that breaks the assumptions of any normal migration window. Jonathan Katz benchmarked HNSW build on the dbpedia 1M corpus across pgvector versions: 7,479 seconds on 0.5.0, 250 seconds on 0.7.0 with the parallel-build improvements, 49 seconds with binary quantization. AWS published Aurora numbers on a 5M OpenAI dataset showing 29,752 seconds on 0.5.1 versus 445 seconds on 0.7.0 with binary quantization. Versions matter. The issue tracker shows how bad it can get. pgvector issue #300 reports a 24-plus-hour HNSW build on 10M rows of 768-dimensional vectors with 4 CPUs and 16 GB RAM. Issue #807 reports the connection dropping after roughly two hours of an HNSW build on 17M rows of 1536-dimensional vectors with 48 CPUs and 192 GB RAM. The build is the part of the workload least visible to the production dashboard and most painful to retry.

HNSW does not degrade gracefully when its working set exceeds RAM. The graph is designed to be traversed in random order, which is fast when every page is in shared_buffers and falls off a cliff when it isn’t. pgvector issue #844 caught the in-build version of the same problem: the user got the message “hnsw graph no longer fits into maintenance_work_mem after 5908085 tuples”, and the build slowed dramatically from that tuple onward. The query-side equivalent has the same shape. Crunchy Data’s HNSW write-up reports index sizes of roughly 8 GB per million rows on typical AI embeddings. Neon’s operational guide recommends keeping maintenance_work_mem at no more than 50–60% of available RAM for vector workloads. The exact latency-vs-RAM curve past the cliff is not published in any vendor source I can find. The shape is well-known to anyone who has watched it happen, and the absence of a published curve is itself a sign of how much of this knowledge lives in incident channels rather than docs.

The cache problem is the inverse of the build problem. Once the index exists, every ANN query touches thousands of pages chased through a graph traversal. A handful of vector queries running concurrently with OLTP traffic is enough to evict the heap pages the OLTP queries depend on, and the OLTP p95 regresses without any change to OLTP code. The pgvector #666 numbers from the opening are the cleanest single data point on this. The instructive piece is the magnitude. Not 2x worse, not 5x worse. Three orders of magnitude depending on cache state. There is no other workload class on a typical OLTP Postgres that produces that swing.

All four cost categories converge on the managed-service bill. Aurora storage runs $0.10 per GB-month at the base tier. At 1536 dimensions and full precision, vectors take roughly 6.15 GB raw per million rows, plus an HNSW index closer to 8 GB per million on typical configurations. For 100 million rows that is about 1.4 TB before backups, replicas, or growth, around $140/month in raw storage at the base rate. Storage is the floor, not the cost. The cost is the instance class needed to keep the active portion of that index in shared_buffers, which on the memory-cliff curve above means an instance one or two tiers above what the rest of the workload required.
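The storage floor can be reproduced in a few lines. This is a sketch built only from the per-million figures and the base-tier rate quoted above; the constant and function names are illustrative, and the result deliberately excludes instance cost, backups, and replicas.

```python
AURORA_USD_PER_GB_MONTH = 0.10  # base-tier storage rate quoted above
RAW_GB_PER_MILLION = 6.15       # 1536-dimensional full-precision vectors
HNSW_GB_PER_MILLION = 8.0       # rough HNSW index size on typical configurations


def storage_floor(rows_millions: float) -> tuple[float, float]:
    """Return (total GB, USD per month) for raw vectors plus the HNSW index."""
    total_gb = rows_millions * (RAW_GB_PER_MILLION + HNSW_GB_PER_MILLION)
    return total_gb, total_gb * AURORA_USD_PER_GB_MONTH


if __name__ == "__main__":
    total_gb, usd_per_month = storage_floor(100)
    print(f"{total_gb / 1000:.2f} TB, ${usd_per_month:.0f}/month")
```

The number that comes out is small on purpose. It is the part of the bill that scales linearly and predictably; the instance class does not.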

The two events the capacity plan didn’t budget for

Embedding model upgrades rewrite the embedding column. text-embedding-3-small was released January 2024 alongside text-embedding-3-large, and any team that wanted the better recall on the larger model also wanted to re-embed the existing corpus. The migration is a full rewrite of the largest column on the largest table, plus an HNSW index rebuild on the new vectors, plus the API call cost of generating the new embeddings, plus double storage during cutover unless the team is willing to take a recall regression by deleting the old embeddings before the new ones are validated. There is no published postmortem from a named company giving real numbers on this event. The closest public signal is pgvector issue #559 from December 2024, where a user reports that individual inserts on a 1M-row HNSW table went from millisecond-scale before the index existed to “5–8s” afterward. The same write amplification applies to the migration, except now it applies to every row at once. The absence of postmortems is itself worth noticing. The event is recent enough that most teams haven’t lived through their second model upgrade yet.

The other unpriced event is connection pool starvation when the application holds a Postgres connection while waiting on an LLM call. The pattern is straightforward. A request needs context from the database, the application opens a transaction, fetches the rows, builds a prompt, calls the model, gets a 4-second response, writes the result back, commits. The connection is held for the entire round trip. A pool sized for 200ms transactions is exhausted at roughly one-twentieth the request rate it was sized for, and the failure surfaces as “too many connections” errors on requests that have nothing to do with the AI feature. There is no named-company postmortem in the public record for this one either. The pattern is recognizable to anyone who has run a database behind a synchronous LLM call. The fix is structural. Do the LLM call outside the transaction. Release the connection before the model call begins and acquire a new one after. Or move to a connection pooler that explicitly supports this pattern. None of those is free, and none of them is what the first version of the feature ships with.
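The structural fix can be sketched without a real database. In the example below, RecordingPool is a hypothetical stand-in for whatever pool the application uses, and handle_request, fetch_context, call_llm, and write_result are illustrative names, not any library's API. The only point it makes is the ordering: the connection goes back to the pool before the model call starts.

```python
from contextlib import contextmanager


class RecordingPool:
    """Hypothetical stand-in for a connection pool; it only counts checkouts."""

    def __init__(self):
        self.in_use = 0      # connections currently checked out
        self.checkouts = 0   # total checkouts so far

    @contextmanager
    def connection(self):
        self.in_use += 1
        self.checkouts += 1
        try:
            yield self       # a real pool would yield a database connection here
        finally:
            self.in_use -= 1


def handle_request(pool, fetch_context, call_llm, write_result):
    # Phase 1: short read on a pooled connection.
    with pool.connection() as conn:
        rows = fetch_context(conn)
    # The connection is back in the pool; the slow call holds nothing.
    answer = call_llm(rows)
    # Phase 2: a fresh checkout for the short write.
    with pool.connection() as conn:
        write_result(conn, answer)
    return answer
```

The cost of this shape is that the read and the write are no longer one transaction. If the rows can change during the model call, the write path has to tolerate that, for example by re-checking a version column before committing.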

What actually moves the bill

Three levers do most of the work, and each one carries a trade-off the AI-feature team would rather not own.

Halfvec is the cheapest move. The pgvector halfvec type stores each component as a 16-bit float instead of 32-bit, halving storage at no measured recall cost on most embedding models. AWS’s Aurora benchmark shows the Cohere 10M corpus dropping from 38 GB to 19 GB, with database memory consumption going from 15.12% to 7.55% on the same r7g.12xlarge instance. Neon’s July 2024 post on halfvec reports 50% storage reduction, 23% faster index build, 50% faster prewarming, and equivalent recall on a 1M DBpedia 1536-dimensional corpus. The trade-off is that halfvec only buys 2x. The corpus growth that earned the bill in the first place still applies. Halfvec moves the bill; it does not change its slope.
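To see what 16-bit storage does to a single vector, the sketch below uses Python's struct module, whose "e" format is an IEEE half-precision float. It illustrates the rounding only and is not pgvector's code. The 8-byte overhead for halfvec is my assumption, mirroring the 4 * dimensions + 8 formula for full precision, and the sample values are synthetic.

```python
import math
import struct


def stored_bytes(dimensions: int, fmt_char: str) -> int:
    """Payload size for 'f' (32-bit) or 'e' (16-bit) components plus an assumed 8-byte overhead."""
    return struct.calcsize(f"<{dimensions}{fmt_char}") + 8


def max_roundtrip_error(values) -> float:
    """Largest absolute change after packing the values to half precision and back."""
    fmt = f"<{len(values)}e"
    restored = struct.unpack(fmt, struct.pack(fmt, *values))
    return max(abs(a - b) for a, b in zip(values, restored))


if __name__ == "__main__":
    # Synthetic components in [-0.25, 0.25], standing in for a normalized embedding.
    sample = [math.sin(i) / 4 for i in range(1536)]
    print(stored_bytes(1536, "f"), stored_bytes(1536, "e"))
    print(max_roundtrip_error(sample))
```

The per-component error is small relative to the spread of the values, which is consistent with the benchmarks above finding no measurable recall difference. It does not prove it for any particular model; that still takes a recall test on real queries.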

Binary quantization with reranking is the next lever, and it is the one with a published tension worth understanding. Qdrant’s binary quantization write-up on OpenAI embeddings, from early 2024, reports recall of 0.985 on text-embedding-3-small at 3x oversampling with reranking, and 0.997 on text-embedding-3-large at the same setting. Storage drops by roughly 32x relative to full precision. Neon ran a binary-quantization test on 1536-dimensional vectors in 2024 and concluded recall was “insufficient for production use”. Both are correct. Qdrant tested with a rerank stage that re-evaluates the top-k binary candidates against full-precision vectors held elsewhere; Neon tested binary alone. Binary quantization without rerank is dangerous. Binary quantization with rerank requires keeping a full-precision copy of the vectors somewhere accessible, which is back to a storage problem of a different shape.
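The difference between the two test setups is easier to see in code. What follows is a toy, pure-Python sketch of the two-stage pattern (1-bit codes and Hamming distance for candidates, full-precision cosine distance for the rerank). It is not Qdrant's or pgvector's implementation, the four-dimensional data is made up for illustration, and all function names are mine.

```python
import math


def binarize(vec) -> int:
    """One bit per dimension: 1 where the component is positive."""
    bits = 0
    for x in vec:
        bits = (bits << 1) | (1 if x > 0 else 0)
    return bits


def hamming(a: int, b: int) -> int:
    return bin(a ^ b).count("1")


def cosine_distance(a, b) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    return 1.0 - dot / (norm_a * norm_b)


def search(query, vectors, codes, k, oversample=3):
    q_code = binarize(query)
    # Stage 1: cheap pass over the 1-bit codes, keeping k * oversample candidates.
    candidates = sorted(range(len(vectors)), key=lambda i: hamming(q_code, codes[i]))
    candidates = candidates[: k * oversample]
    # Stage 2: rerank those candidates against the full-precision vectors.
    candidates.sort(key=lambda i: cosine_distance(query, vectors[i]))
    return candidates[:k]


if __name__ == "__main__":
    vectors = [
        [1.0, 1.0, 1.0, 1.0],
        [1.0, 0.9, 0.1, 0.1],   # the true nearest neighbour of the query below
        [-1.0, -1.0, -1.0, -1.0],
        [1.0, -1.0, 1.0, -1.0],
    ]
    codes = [binarize(v) for v in vectors]
    query = [1.0, 1.0, 0.2, 0.1]
    print(search(query, vectors, codes, k=1, oversample=1))  # binary codes alone
    print(search(query, vectors, codes, k=1, oversample=2))  # oversample, then rerank
```

Vectors 0 and 1 share the same sign pattern as the query, so their binary codes tie. With oversample=1 the candidate list has room for only one of them and the rerank has nothing to correct; with oversample=2 both survive to the rerank and the full-precision distance picks the right one. Note that stage 2 reads the original vectors, which is the storage cost the paragraph above describes.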

Separating the vector workload onto its own physical Postgres replica or onto a dedicated vector store addresses cache pollution directly. The trade-off is operational. A second system to back up, a second consistency model to reason about, a second incident-response runbook. On a small team this can dominate the cost it was meant to save. On a larger team where the AI feature has its own owners and the OLTP database has its own owners, the separation aligns infrastructure boundaries with team boundaries and is usually worth it on those grounds alone, before any cache argument is made.

A scalar quantization note worth keeping in view: Jonathan Katz’s scalar and binary quantization benchmark from April 2024 measured halfvec recall at 0.968 versus full-precision 0.968 on dbpedia-openai-1000k-angular at the same ef_search. The author’s verdict was direct: “Scalar quantization from 4-byte to 2-byte floats looks like a clear winner.” On most embedding models, halfvec is the move you can make today without touching application code or rerank pipelines, and the bill drops by half.

When the math runs the other way

This article is overkill on three configurations. Small corpora, under roughly 100,000 vectors at 1536-dim or smaller, do not generate enough storage or index volume to matter on any modern Postgres instance. Low-QPS internal tools where the vector search runs a few times a minute do not pollute the buffer cache enough to regress OLTP, and do not need the operational complexity of a separate vector store. Teams already on a dedicated vector store from day one (Pinecone, Weaviate, Qdrant Cloud, pgvectorscale on a separate instance) have paid the operational price up front and have a different cost structure that this article does not speak to. The four cost categories above all assume a vector workload colocated with an OLTP Postgres at production scale, which is the configuration most teams ship first because it is the configuration that requires the fewest decisions.

The bigger picture

The shape of the problem recurs across every AI-rollout postmortem worth reading. The feature ships fast because the existing infrastructure is already there. The cost lands later because the existing infrastructure was sized for a different workload. Embeddings on the OLTP Postgres are cheap to add and expensive to operate. The capacity plan that signed off on the AI feature did not have line items for storage at 6 KB per row, for HNSW builds that consume entire instances for hours, for memory cliffs that do not degrade gracefully, or for cache pollution that regresses unrelated p95s. The standard “what does this feature cost” template was written for application features that read and write rows the database was designed to read and write. Vector search is a different access pattern. The team that surfaces these four numbers before the feature ships pays them on a normal capacity ticket. The team that doesn’t pays them anyway, six weeks later, on an emergency one.