!=, IN, and NOT IN filters and behave inconsistently across JOIN, GROUP BY, DISTINCT, and aggregate functions. The rules are consistent if you know them, and a source of silently wrong results when you don’t.There’s a category of SQL bug that shows up in almost every mature codebase. Someone writes a filter like WHERE status != 'closed', expecting it to return every row that isn’t closed. Instead it returns fewer rows than the raw table contains. The rows where status is NULL silently dropped out. No error. No warning. The query is doing exactly what the SQL standard says it should, and the result is still wrong for what the author meant.

NULL handling is the single most common source of silently wrong query results in relational databases. The behavior is consistent if you know the rules, but the rules don’t match the intuition most programming languages build. In Java or Python, null != "closed" is true. In SQL, it’s UNKNOWN, and UNKNOWN rows get filtered out. That one difference produces most of the bugs.

NULL is not a value

Every introduction to SQL NULL starts here because it has to. NULL is the absence of a value, a marker that says “this column has no data.” It’s not zero, not empty string, not false. It doesn’t equal itself. It doesn’t not-equal itself either. Any comparison involving NULL returns a third logical state: UNKNOWN.

This is called three-valued logic (3VL), and SQL uses it consistently throughout the language. The three values are TRUE, FALSE, and UNKNOWN. Most operators propagate UNKNOWN: any arithmetic, string, or comparison operation with a NULL operand returns NULL (or UNKNOWN, in a boolean context).

| |

The CONCAT difference is a good example of how engines diverge even within well-defined territory. MySQL’s CONCAT propagates NULL: any NULL argument makes the whole result NULL. PostgreSQL’s CONCAT function does the opposite, silently skipping NULL arguments and returning the concatenation of the non-NULL parts. (PostgreSQL’s || operator still propagates NULL, matching ANSI.) Two queries that look identical can return different results on different engines, and the difference only shows up when a NULL appears.

For NULL-skipping concatenation that behaves the same on both engines, use CONCAT_WS (concat with separator). Both MySQL and PostgreSQL skip NULL arguments with it:

| |

One MySQL-specific gotcha: if the separator itself is NULL, the whole result is NULL. The separator is the one argument CONCAT_WS still propagates NULL from. As long as the separator is a literal string, the function is a reliable NULL-safe concat across engines.

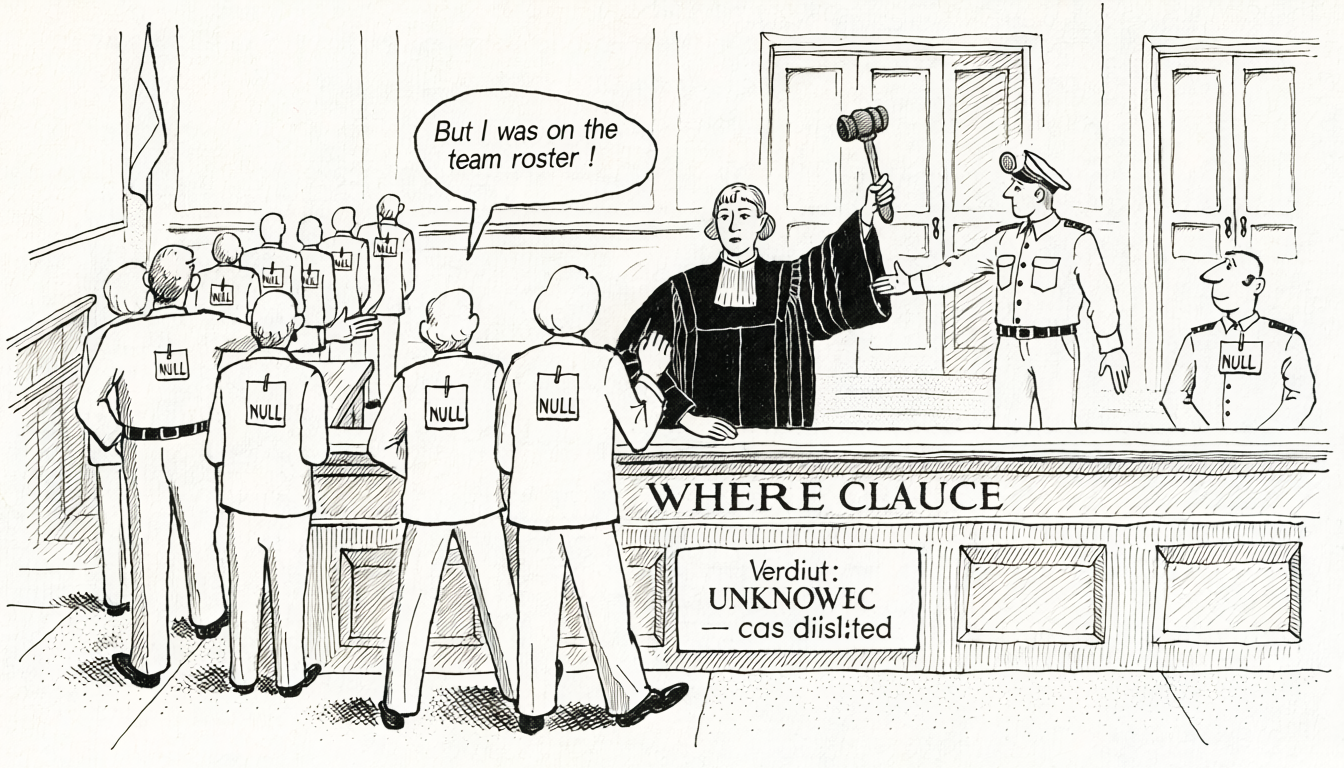

IS NULL or IS NOT NULL. WHERE col = NULL always returns zero rows, because col = NULL evaluates to NULL, which is not TRUE, so the row is filtered out. This is one of those mistakes every SQL engineer makes exactly once.WHERE clauses filter out UNKNOWN

The rule that drives most NULL bugs: WHERE only keeps rows where the condition evaluates to TRUE. UNKNOWN rows are filtered out, same as FALSE rows.

| |

If team_id is NULL for unassigned users (a completely normal state) those rows are silently dropped. The expression NULL != 3 evaluates to UNKNOWN, and UNKNOWN is not TRUE, so the row doesn’t survive the filter.

The mental model most developers carry from application code (“anything that isn’t team 3 is included”) is wrong in SQL. To get that behavior, you have to spell it out:

| |

This is one of the most common sources of “the numbers don’t match” bugs. A report that’s supposed to count “everyone outside the sales team” quietly excludes every unassigned user, and the total looks plausible because unassigned users aren’t visible in the team-level breakdown either. The discrepancy only surfaces when someone reconciles against a direct row count.

NOT IN is a trap

NOT IN with a nullable subquery is the classic silent-failure NULL bug. The trap is specifically that the subquery has to return a column that can contain NULL: rules out primary keys but extremely common for foreign keys, self-references, and any column that’s optional by design.

| |

The subquery returns every manager_id in the table, including NULL for users who don’t have a manager (the CEO, top-level roles, anyone unassigned). The moment the subquery contains a single NULL, the outer query returns zero rows.

The reason is how NOT IN expands. x NOT IN (a, b, c) is equivalent to x != a AND x != b AND x != c. If any of a, b, c is NULL, that comparison returns UNKNOWN, and AND with UNKNOWN can only ever be FALSE or UNKNOWN. The row never passes the filter.

Safer alternatives:

| |

NOT EXISTS is the better habit. It’s correct regardless of NULL presence, and the query planner handles it at least as well as NOT IN on any modern engine. Treating NOT IN as “suspicious until proven NULL-free” saves a category of bug that’s almost impossible to catch in review.

COUNT and NULL: skipped, not zero

The single most important thing to know about aggregates and NULL: NULL is not treated as zero. It’s skipped entirely. Nothing about NULL gets coerced or counted; it’s as if the row weren’t there for the purposes of the aggregate.

COUNT makes this visible because it has three forms that behave differently:

COUNT(*)counts rows, regardless of their contents. NULLs in the row don’t matter.COUNT(col)counts non-NULL values ofcol. A row wherecol IS NULLis skipped.COUNT(DISTINCT col)counts distinct non-NULL values. NULL is not treated as a distinct value; it’s excluded.

| |

On a table of 1,000 users where 200 have NULL emails:

COUNT(*)returns 1000 (all rows)COUNT(email)returns 800 (NULLs skipped)COUNT(DISTINCT email)returns ≤ 800 (distinct non-NULL emails only)

This shows up in reports all the time. “How many users signed up this month?” gets answered with COUNT(signup_source) and comes up short because the column was added later and older rows have NULL. The row is there. COUNT(*) would see it. COUNT(signup_source) doesn’t.

The rule: use COUNT(*) when you want rows, COUNT(col) when you specifically want “rows with that column populated.”

SUM, AVG, MIN, MAX: also skip NULL

The same rule holds for every aggregate. NULL is not contributed to the sum, not counted in the denominator for the average, not considered for min or max.

| |

If half the rows have NULL rating:

SUM(rating)is the sum of the non-NULL half. NULLs don’t contribute 0; they contribute nothing.AVG(rating)is the sum of the non-NULL half divided by the count of non-NULL rows, not the total row count.

The AVG behavior is the most common source of surprise. If 10,000 rows have rating = 5 and 10,000 have rating = NULL, AVG(rating) is 5.0, not 2.5. The NULL rows don’t pull the average down toward zero. They’re not in the denominator at all.

If you want NULL-as-zero behavior, you have to opt in:

| |

SUM(col) over a set where every value is NULL returns NULL, not 0. A SUM that feeds into arithmetic downstream (total + tax, for example) can propagate NULL through the rest of the expression, often somewhere the query author wasn’t expecting. COALESCE(SUM(col), 0) is the idiomatic fix; make the fallback explicit at the aggregate.The framing that keeps this straight: NULL is not a value, so aggregates have nothing to aggregate. Absent, not zero. If you want absent to mean zero, that’s a COALESCE decision the query author makes; the engine won’t make it for you.

GROUP BY and DISTINCT treat NULLs as equal

Here’s where the rules get inconsistent in a way that genuinely surprises people: GROUP BY and DISTINCT treat all NULLs as the same group, even though NULL = NULL returns UNKNOWN.

| |

This is a deliberate exception carved out by the SQL standard. GROUP BY and DISTINCT use a “NULL-safe” equality for grouping purposes, because the alternative (one group per NULL row) would be useless. But it means the behavior is internally inconsistent: WHERE a = b says NULLs aren’t equal, GROUP BY a says they are.

The practical implication: COUNT(DISTINCT col) excludes NULL entirely (consistent with COUNT(col)), while GROUP BY col produces a single row for all NULLs. Two different “null-handling” behaviors under the same umbrella of “treats NULLs as equal for grouping.” Queries that rely on either for correctness should be written with the awareness that the two operations don’t agree.

NULL-safe comparison operators

Both MySQL and PostgreSQL offer operators that treat NULL as equal to NULL, mirroring the GROUP BY behavior for regular comparisons.

| |

These are useful when joining or filtering on columns that may contain NULL on both sides and you want NULLs to match:

| |

Neither is used often in practice. The habit most teams settle on is “don’t let NULL be meaningful in join columns”: either constrain the columns NOT NULL or filter NULLs out before joining. The operators are there for the cases where those aren’t options.

ORDER BY: NULL placement varies by engine

When sorting, NULL has to go somewhere. The SQL standard leaves the default placement implementation-defined, and engines disagree.

- PostgreSQL. NULLs sort last for

ASCand first forDESCby default. - MySQL. NULLs sort first for

ASCand last forDESCby default. - Oracle and SQL Server. Match PostgreSQL’s behavior (NULLs last for

ASC).

The fix is to be explicit:

| |

NULLS FIRST / NULLS LAST is ANSI standard and supported by PostgreSQL, Oracle, and SQL Server. MySQL doesn’t support the NULLS FIRST/LAST syntax directly; you fake it with a computed column:

| |

Teams that run the same reports against different engines (especially during a migration or in a polyglot analytics stack) hit this one hard. A top-10 leaderboard quietly reorders when the ORDER BY engine changes underneath it.

JOINs don’t match on NULL

A standard equi-join a.col = b.col doesn’t match rows where either side is NULL. This is consistent with the three-valued logic rule: NULL = NULL is UNKNOWN, so the join predicate fails.

| |

If the intent is “every user, with manager info if present,” use a LEFT JOIN. If the intent is “users where manager_id matches some manager row,” the INNER JOIN is correct but it’s worth naming the exclusion: users with NULL manager_id are gone, on purpose.

For joins that should treat NULLs as matching (both sides have NULL, and that means “same”), use the null-safe operator:

| |

This is rare but legitimate (e.g., matching optional identifiers where “both unspecified” should be treated as a match). Most of the time, the correct answer is to make the column NOT NULL and use a sentinel if needed (and then deal with the sentinel’s own problems, covered below).

Foreign keys are nullable by default

A foreign key column is nullable unless declared NOT NULL. A nullable FK means the reference is optional: users may or may not have a manager, orders may or may not be linked to a promotion. This is often the correct intent, but it’s frequently unintentional.

| |

Review migration files with this in mind. A column that should always be populated but was added as nullable will accept NULLs forever. Retrofitting NOT NULL later requires backfilling or cleaning up existing NULL rows: easy when the table is small, painful at scale. (Foreign Keys Are Not Optional covers the broader picture of FK enforcement and why application-level validation is an incomplete substitute.)

What NULL actually means is context-dependent

The SQL rules for NULL are unambiguous. What NULL means in a given column is not. NULL can mean:

- Unknown. The data exists but we don’t have it. A user’s birthdate where the user declined to share.

- Not applicable. The field doesn’t make sense for this row.

spouse_nameon a row for a single person. - Ongoing or not yet set. The state isn’t finalized.

end_dateon an active subscription. - Data entry error. The column should have been populated but wasn’t.

- Legacy. The column was added after the row was created and never backfilled.

The same column may mean different things in different rows, and the schema doesn’t tell you which is which. This is where schema comments earn their keep, documenting the semantics of NULL in each column in the DDL itself rather than in a wiki page nobody finds.

Sentinel values: the alternative, and its own problems

A common workaround: use a sentinel value instead of NULL. end_date = '9999-12-31' for “ongoing.” status = -1 for “unknown.” deleted_at = '1970-01-01' for “not deleted.”

Sentinels avoid the three-valued-logic rules at the cost of introducing their own bugs. A few to watch for:

- Aggregates include sentinels.

AVG(rating)over a column where “unknown” is stored as-1skews the average toward negative. Sentinels break the “aggregates skip missing values” assumption that NULL provides for free. - Range queries break in unexpected directions.

WHERE end_date > NOW()returns all the sentinel rows along with real future dates. Every filter has to explicitly exclude the sentinel. - Indexes skew. A column where 80% of the values are the sentinel has a low-selectivity index. The planner may skip the index entirely on queries that filter out the sentinel, because it doesn’t know that’s the intent.

- Downstream consumers have to know. Every system that reads the data has to treat

9999-12-31specially. Miss one consumer and wrong data shows up in a report.

The trade-off is real. NULL forces every query author to think about three-valued logic. Sentinels let queries use normal equality but require every author to know the sentinel. Neither is free; they move the cost around.

The pragmatic middle ground: use NULL for genuinely absent data (ongoing subscriptions, optional fields), use sentinels sparingly and document them, and declare NOT NULL everywhere you can enforce presence. A column that’s NOT NULL is the one case where the rules don’t matter, because NULL can’t get in.

Diagnosing a NULL bug

When a query returns fewer (or more, or none) of the rows it should, the fastest way to narrow it down to a NULL issue:

| |

If no_team is non-zero and the filter is team_id != X or team_id IN (...), the NULL rows are the likely culprit. Rewriting with explicit NULL handling (team_id != X OR team_id IS NULL, or NOT EXISTS, or COALESCE(team_id, -1) != X) will reveal whether NULLs were being silently excluded.

For NOT IN, inspect the subquery:

| |

If the answer is non-zero, NOT IN is returning an empty set regardless of the outer query’s data.

The mental model

NULL handling is consistent once you internalize the rule set, and the rule set is smaller than it looks:

- Any comparison involving NULL returns UNKNOWN.

WHEREfilters out UNKNOWN rows. - Aggregates skip NULLs.

COUNT(*)doesn’t.COUNT(col)does. GROUP BY,DISTINCT, andORDER BYtreat all NULLs as equivalent (with engine-specific sort placement).NOT INwith a nullable subquery returns empty. UseNOT EXISTS.- Join predicates don’t match NULLs unless you use

IS NOT DISTINCT FROM.

Past that, most NULL bugs are prevented by one habit: declare NOT NULL wherever the column should actually be populated. Every NOT NULL column is a column where none of these rules matter, because there’s nothing for them to misbehave on. The fewer nullable columns the schema has, the less of this there is to think about.

The columns where NULL genuinely carries meaning (optional references, ongoing states, data that may not exist) are the ones worth documenting. A schema comment that says “NULL means the subscription is still active” pulls the NULL semantics into the DDL itself, where it’s visible to every engineer, every tool, and every query author who wasn’t around when the decision was made.