There’s a period early in a project where an ORM feels like pure upside. You define a model, the framework generates a migration, and User.where(email: …) returns typed objects. No SQL to write, no mapping layer to maintain, no integration boilerplate. Five years later the same project has four migration directories, a model class with thirty custom methods overriding the ORM defaults, team memory of which relations are lazy-loaded and which aren’t, and a quarterly discussion about whether it’s time to upgrade Rails 4 to Rails 7 or skip straight to something else entirely.

Somewhere between those two points, the ORM stopped being an abstraction and became a coupling: a bidirectional contract between schema and code that both sides have to honor for every change. The contract shapes more than how changes propagate. It also shapes the schema itself, because an ORM’s default output is a database structured like the class graph rather than one designed for the workload. Short-lived prototypes and simple CRUD apps still benefit from ORMs. The defensible use cases are narrower than the industry’s default deployment pattern suggests, and the coupling is real, durable, and consistently underestimated at the point a team decides to adopt one.

The rest of this post offers one answer for why the ORM is still the default: the coupling an ORM introduces hides its cost long enough that the trade looks very different in year one than it does in year five.

What the ORM is actually doing

The name suggests abstraction: “object-relational mapping,” as if the mapping were hidden plumbing. The practical reality is the opposite: the mapping is the product. An ORM takes your schema shape and projects it onto code shape. Columns become fields. Tables become classes. Foreign keys become methods. Indexes are invisible until you care about them. Constraints are whatever the ORM’s DSL exposes and nothing more.

That projection is useful. It lets application code avoid SQL, most of the time. It also means the code and schema are now two views of the same data model, and those views are expected to stay in sync by you, by your migration framework, by your tests, and by every developer who touches either side.

Staying in sync, in practice, means every schema change is also a code change. Every code change that adds a field triggers a schema change. Every migration is a coordinated edit across multiple files. The coupling isn’t an implementation detail; it’s the defining characteristic of the tool.

Source of truth: pick one, know which

Every ORM ecosystem has a default answer to “where does the schema canonically live”, and most teams never think about it.

- Model-first. Django generates migrations from changes to model classes; Rails generators emit a migration alongside the model, and schema changes are written in the framework’s migration DSL. The application side is the source of truth; the schema follows. Running rails db:schema:dump produces a schema.rb that describes the current state, and the migration files are the history of how it got there.

- Schema-first. sqlc and jOOQ read SQL DDL files and generate typed client code. The schema is the source of truth; the code follows.

- Hybrid / unclear. Hibernate can do either, depending on configuration. SQLAlchemy lets you declare models in Python and generate migrations via Alembic, or point Alembic at an existing schema and generate models. Teams that don’t decide end up doing both.

The hybrid case is where the real damage happens. Over years, a team that migrates from model-first to schema-first (or vice versa) without a clean cutover ends up with a schema that neither the models nor the migration history correctly describes. Rows backfilled by a DBA with direct SQL don’t show up in the ORM’s understanding of the world. Columns added by a production hotfix get rediscovered six months later when someone regenerates models from the database.

The fix isn’t to prefer one approach over the other. It’s to decide, document, and enforce, the way you would any other convention.
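One way to make "enforce" concrete is a drift check that runs in CI and compares what the code believes with what the database actually has. The sketch below is a minimal illustration, not a replacement for a migration tool: EXPECTED_COLUMNS, the users table, and the legacy_flag hotfix column are hypothetical, and SQLite stands in for the production database.

```python
from __future__ import annotations

import sqlite3

# Hypothetical: the columns the application code believes each table has.
# In a real project this would be derived from the models or generated code.
EXPECTED_COLUMNS = {
    "users": {"id", "email", "created_at"},
}


def actual_columns(conn: sqlite3.Connection, table: str) -> set[str]:
    """Read the column names the database really has for `table`."""
    # PRAGMA table_info returns one row per column; index 1 is the column name.
    rows = conn.execute(f"PRAGMA table_info({table})").fetchall()
    return {row[1] for row in rows}


def schema_drift(conn: sqlite3.Connection) -> dict[str, dict[str, set[str]]]:
    """Per table: columns the code expects but the DB lacks, and columns the code doesn't know."""
    drift = {}
    for table, expected in EXPECTED_COLUMNS.items():
        actual = actual_columns(conn, table)
        missing, unknown = expected - actual, actual - expected
        if missing or unknown:
            drift[table] = {"missing_in_db": missing, "unknown_to_code": unknown}
    return drift


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT, created_at TEXT)")
# Simulate a production hotfix applied with direct SQL, outside the migration history.
conn.execute("ALTER TABLE users ADD COLUMN legacy_flag INTEGER")
print(schema_drift(conn))
```

Failing the build when schema_drift returns anything turns the "decide, document, enforce" convention into something a hotfix can't silently bypass.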

Migrations stop being “DB work”

In a raw-SQL codebase, a schema migration is a single file: CREATE TABLE, ALTER TABLE, DROP COLUMN. The migration is the change.

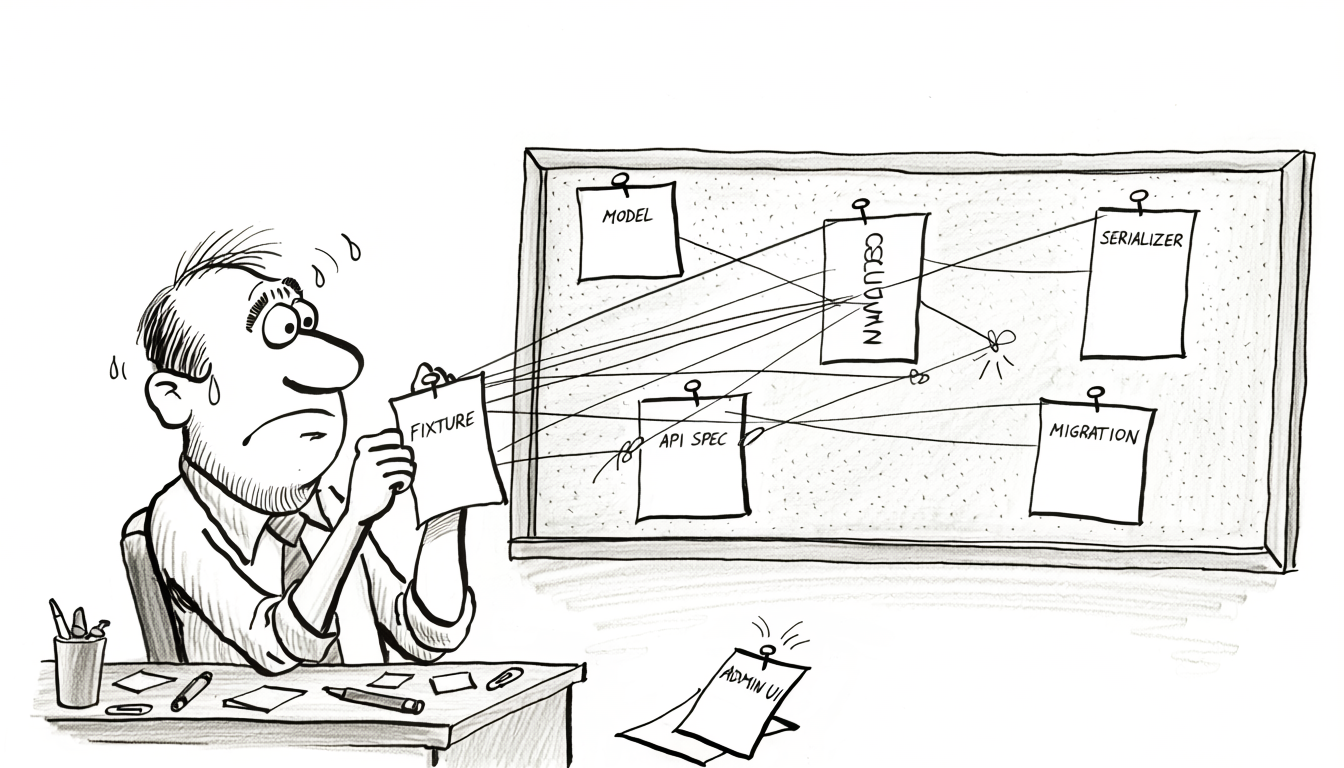

In an ORM codebase, a single logical schema change is typically:

- A migration file (add_email_to_users.rb).

- The model class (User#email getter, validation, serialize calls).

- The serializer (UserSerializer#email).

- The API contract (OpenAPI spec, GraphQL schema, whatever the team uses).

- Fixtures and factories (FactoryBot, factory_boy, test data).

- Query helpers that need to know the new column.

- Type stubs or generated types (TypeScript declarations, Python stubs).

- Admin UI config, sometimes.

What should be a single metadata-level change is now a coordinated edit across five to eight files, and missing any one of them produces a subtly broken application. The ORM didn’t create the complexity; it distributed it. The schema change is still one change. It just has to be propagated to every place the code has a mirror of the schema.

At small scale this is fine. The friction compounds once the team is big enough that the people writing the migration aren’t the same people owning the serializers and the API consumers. A schema change now requires coordinating across teams, each with their own view of the data model, each needing their files updated. The schema itself didn’t get harder to change. The ORM layer around it did.

Hidden queries

The ORM generates SQL you didn’t write. That’s the value proposition. It’s also a persistent failure mode.

- Lazy loading. user.orders triggers a query. user.orders.first.line_items triggers another. In a loop over 100 users, that’s at least 101 queries, none of them visible in the code. The classic N+1.

- Implicit joins. .includes(:orders) eager-loads associations, but only if someone remembers to write it. The default is lazy. Defaults win.

- Magic methods. where(status: :active).first_or_create(email: …) is three or four queries depending on the code path, and the code says nothing about it.

- Generated sort and filter. User.order(:created_at).limit(10) on a table without an index on created_at does a full table scan. The query was generated by the ORM; the reviewer never saw it.

None of these are the ORM doing something wrong. They’re the ORM doing exactly what it said it would. The cost is that the SQL the database actually runs isn’t in version control, isn’t code-reviewed, and isn’t profiled until it shows up in slow-query logs. Every ORM codebase accumulates query shapes nobody intentionally wrote.
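The N+1 shape from the list above is easy to reproduce without any ORM at all. The sketch below imitates what a lazily loaded association does and counts the statements the database actually receives, using the trace hook in Python's sqlite3 module. The tables, the sample data, and the helper names (totals_lazy, totals_joined, count_statements) are assumptions made for illustration.

```python
from __future__ import annotations

import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE users  (id INTEGER PRIMARY KEY, email TEXT NOT NULL);
    CREATE TABLE orders (
        id      INTEGER PRIMARY KEY,
        user_id INTEGER NOT NULL REFERENCES users(id),
        total   INTEGER NOT NULL
    );
""")
conn.executemany(
    "INSERT INTO users (id, email) VALUES (?, ?)",
    [(i, f"u{i}@example.com") for i in range(1, 101)],
)
conn.executemany(
    "INSERT INTO orders (user_id, total) VALUES (?, ?)",
    [(i, i * 10) for i in range(1, 101)],
)
conn.commit()

statements: list[str] = []
conn.set_trace_callback(statements.append)  # record every statement that actually executes


def totals_lazy(conn: sqlite3.Connection) -> dict[str, int]:
    """Imitates a lazy association: one query for the users, then one more per user."""
    result = {}
    for user_id, email in conn.execute("SELECT id, email FROM users").fetchall():
        rows = conn.execute(
            "SELECT total FROM orders WHERE user_id = ?", (user_id,)
        ).fetchall()
        result[email] = sum(total for (total,) in rows)
    return result


def totals_joined(conn: sqlite3.Connection) -> dict[str, int]:
    """Same result from one explicit, reviewable query."""
    rows = conn.execute("""
        SELECT u.email, COALESCE(SUM(o.total), 0)
        FROM users u LEFT JOIN orders o ON o.user_id = u.id
        GROUP BY u.id, u.email
    """).fetchall()
    return dict(rows)


def count_statements(fn) -> int:
    """Run fn against the shared connection and return how many statements it issued."""
    statements.clear()
    fn(conn)
    return len(statements)
```

With 100 users, count_statements(totals_lazy) reports 101 statements and count_statements(totals_joined) reports 1, for identical results. Nothing in the lazy version's source makes that difference visible at review time.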

Teams end up adding observability (pg_stat_statements, EXPLAIN on every slow path) just to know what’s actually running.

Two query languages, neither complete

Past the CRUD ceiling, every ORM codebase ends up with raw SQL living alongside ORM calls. Window functions, recursive CTEs, PostgreSQL DISTINCT ON, LATERAL joins, MySQL INSERT ... ON DUPLICATE KEY UPDATE with complex update clauses, exclusion constraints, full-text search, spatial queries: the list of things awkward or impossible to express through the ORM grows over the life of the project.

The result is a codebase with two query languages coexisting. Reviewers have to know both. Type safety is uneven; ORM calls produce typed objects, raw SQL produces hashes or arrays that need manual mapping. The two styles drift. The ORM-side queries follow the ORM’s conventions; the raw-SQL queries follow whatever the author happened to write that day.

The honest consequence: past a certain complexity threshold, the ORM isn’t reducing the SQL surface area, it’s adding a second layer on top of it. The SQL didn’t go away. It got pushed into the half of the codebase that’s harder to trace.
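A small example of the second language in practice: a recursive CTE, which most ORM query DSLs can't express directly, written as raw SQL and mapped by hand. The categories table and the CategoryPath type are hypothetical, and SQLite stands in for the production database.

```python
from __future__ import annotations

import sqlite3
from dataclasses import dataclass


@dataclass(frozen=True)
class CategoryPath:
    id: int
    path: str
    depth: int


conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE categories (
        id        INTEGER PRIMARY KEY,
        parent_id INTEGER REFERENCES categories(id),
        name      TEXT NOT NULL
    );
    INSERT INTO categories (id, parent_id, name) VALUES
        (1, NULL, 'hardware'), (2, 1, 'storage'), (3, 2, 'ssd'), (4, 1, 'network');
""")


def category_paths(conn: sqlite3.Connection) -> list[CategoryPath]:
    """Walk the category tree from the roots down, building a slash-separated path."""
    rows = conn.execute("""
        WITH RECURSIVE tree(id, path, depth) AS (
            SELECT id, name, 0 FROM categories WHERE parent_id IS NULL
            UNION ALL
            SELECT c.id, tree.path || '/' || c.name, tree.depth + 1
            FROM categories c JOIN tree ON c.parent_id = tree.id
        )
        SELECT id, path, depth FROM tree ORDER BY path
    """).fetchall()
    # Raw SQL returns plain tuples. The mapping to a typed object is manual,
    # and nothing checks it against the SELECT list above.
    return [CategoryPath(id=r[0], path=r[1], depth=r[2]) for r in rows]
```

The positional mapping in the last line is the uneven type safety described above: reorder the SELECT list and the code still runs, just with the wrong values in the wrong fields.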

Bidirectional coupling

The part that surprises teams is how hard it is to leave.

Migrating a database schema (renaming a column, changing a type, splitting a table) is mechanical. It’s a migration file and a deploy window. The mechanics are well-understood and the blast radius is bounded.

Migrating off an ORM is not mechanical. The ORM’s conventions have bled into:

- Controller and API code. JSON shapes match model attributes. as_json, serializable_hash, and ORM callbacks define what the outside world sees.

- Test suites. Fixtures, factories, and in-memory SQLite test databases depend on the ORM being there.

- Third-party integrations. Export formats, webhooks, analytics pipelines, all built against the ORM’s JSON representation of the data.

- Admin UIs. Rails Admin, Django Admin, Laravel Nova; hard-wired to specific ORM conventions.

- Query helpers. Every scope, every association, every callback is ORM-native.

- Team knowledge. Every engineer who’s been there more than a year thinks in the ORM’s abstractions.

None of this is the database’s problem. It’s the surrounding code that grew up expecting the ORM to be there. Replacing the ORM means replacing or rewriting every one of those layers. A schema migration is a weekend project; an ORM migration is a yearlong initiative.

The asymmetry is worth naming. The coupling is bidirectional, and one direction (schema → code) is much harder to undo than the other. Teams that adopt an ORM for velocity rarely account for the exit cost.

Database-side logic doesn’t round-trip

Most ORMs have a tunnel-vision view of the schema: they see what they created. They don’t see:

- CHECK constraints. Most ORM model layers have no concept of them. A constraint like CHECK (amount >= 0) is invisible to the model; the ORM’s validations become the only gatekeeper the application knows about.

- Triggers. A trigger that mutates a row after insert produces data the ORM didn’t know would be there. Reading back the row often requires an explicit reload.

- Generated columns. MySQL’s GENERATED ALWAYS AS (…) STORED and PostgreSQL’s equivalent produce values the ORM treats as regular columns, but they can’t be written to, and the ORM’s default behavior is to try.

- Partial and expression indexes. The ORM sees the column, not the index. A query that should hit a partial index on WHERE deleted_at IS NULL gets generated without that predicate and misses the index.

- Exclusion constraints. PostgreSQL EXCLUDE USING gist (…). Completely outside the ORM’s worldview.

The ORM’s view of the schema is a subset of the real schema. Writes composed against that subset can violate invariants the database enforces. The application code expects the write to succeed; the INSERT comes back with a constraint violation the model has no way to anticipate or explain. Teams paper over this with application-level validation that duplicates the database’s, and then the two drift, which is its own class of production incident.
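Two of the cases above, the CHECK constraint and the trigger, can be reproduced in a few lines. The sketch below uses a hand-rolled Payment class as a stand-in for an ORM model; the payments table, the payments_mark_large trigger, and the 1000 threshold are all hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE payments (
        id     INTEGER PRIMARY KEY,
        amount INTEGER NOT NULL CHECK (amount >= 0),
        status TEXT NOT NULL DEFAULT 'new'
    );
    -- Database-side logic the application model knows nothing about.
    CREATE TRIGGER payments_mark_large AFTER INSERT ON payments
    WHEN NEW.amount >= 1000
    BEGIN
        UPDATE payments SET status = 'review' WHERE id = NEW.id;
    END;
""")


class Payment:
    """Hypothetical stand-in for a model: it knows the columns, not the constraints."""

    def __init__(self, amount, status="new"):
        self.id = None
        self.amount = amount
        self.status = status

    def save(self, conn):
        # The in-memory object keeps whatever it was constructed with.
        cur = conn.execute(
            "INSERT INTO payments (amount, status) VALUES (?, ?)",
            (self.amount, self.status),
        )
        self.id = cur.lastrowid

    def reload(self, conn):
        # Only an explicit re-read picks up what the trigger changed.
        self.amount, self.status = conn.execute(
            "SELECT amount, status FROM payments WHERE id = ?", (self.id,)
        ).fetchone()


def try_save(payment, conn):
    """Return None on success, or the database's error message on a constraint violation."""
    try:
        payment.save(conn)
        return None
    except sqlite3.IntegrityError as exc:
        return str(exc)
```

Saving Payment(5000) leaves the object's status at 'new' until reload is called, at which point it reads 'review'. Saving Payment(-1) fails with an IntegrityError raised by the database, not by anything the model declared.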

Relational modeling isn’t object modeling

The coupling goes one direction that’s easy to see: schema changes require code changes. It also goes the other direction, which is harder to see. The ORM’s object model is what shapes the schema in the first place. For simple data, a User with an email and a password hash, that’s fine. For non-trivial domains, the shape inherited from object modeling produces schemas that look like class hierarchies and perform like poorly-designed databases.

This mismatch has a name: the object-relational impedance mismatch. Its practical consequence is that ORM-driven schemas get shaped by class hierarchies rather than by the relationships and access patterns the workload actually has.

Normalization doesn’t look like inheritance. A properly normalized schema is structured by the shape of the relationships between entities, not by a class graph. Consider a scheduling application with three kinds of entries: appointments, days off, and product launches. All of them are events. They have a start time, an owner, a status. Each has different additional fields.

The relational answer is a supertype/subtype pattern (sometimes called class table inheritance): a base events table with the shared fields, and one specialized table per subtype (appointments, days_off, launches), each with event_id as a primary key that’s also a foreign key back to events. A CHECK constraint on the base table restricts which subtype values are allowed.

Each subtype has its own columns, indexes, and constraints. Each can evolve independently. A new field on appointments doesn’t touch events, days_off, or launches. Dropping the launches feature drops one table and a CHECK-constraint value. Queries that only care about one subtype hit a narrow, well-indexed table instead of scanning across fifty columns of mostly-null data.
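The supertype/subtype layout described above can be sketched as plain DDL, run here through Python's sqlite3 module so it is easy to try. Only the table names, the event_id key, and the shared fields (start time, owner, status) come from the text; the subtype columns (location, attendee, reason, product_name) and the add_appointment helper are assumptions for illustration.

```python
import sqlite3

SCHEMA = """
CREATE TABLE events (
    id        INTEGER PRIMARY KEY,
    type      TEXT NOT NULL CHECK (type IN ('appointment', 'day_off', 'launch')),
    owner_id  INTEGER NOT NULL,
    starts_at TEXT NOT NULL,
    status    TEXT NOT NULL DEFAULT 'scheduled'
);
CREATE TABLE appointments (
    event_id INTEGER PRIMARY KEY REFERENCES events(id) ON DELETE CASCADE,
    location TEXT NOT NULL,
    attendee TEXT NOT NULL
);
CREATE TABLE days_off (
    event_id INTEGER PRIMARY KEY REFERENCES events(id) ON DELETE CASCADE,
    reason   TEXT
);
CREATE TABLE launches (
    event_id     INTEGER PRIMARY KEY REFERENCES events(id) ON DELETE CASCADE,
    product_name TEXT NOT NULL
);
"""


def connect() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute("PRAGMA foreign_keys = ON")  # SQLite enforces foreign keys only when asked
    conn.executescript(SCHEMA)
    return conn


def add_appointment(conn, owner_id, starts_at, location, attendee) -> int:
    """Insert the base row and the subtype row in one transaction; return the event id."""
    with conn:
        cur = conn.execute(
            "INSERT INTO events (type, owner_id, starts_at) VALUES ('appointment', ?, ?)",
            (owner_id, starts_at),
        )
        conn.execute(
            "INSERT INTO appointments (event_id, location, attendee) VALUES (?, ?, ?)",
            (cur.lastrowid, location, attendee),
        )
    return cur.lastrowid
```

One limitation of this sketch: nothing stops an event typed 'launch' from having a row in appointments. Closing that gap usually takes a composite foreign key on (id, type) or a trigger, which is left out here to keep the shape readable.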

The ORM-driven shape tends to produce something different. Rails’ single-table inheritance (STI) collapses everything into one wide table with a type column and every possible subtype field nullable. Django’s multi-table inheritance is closer to the relational answer but introduces implicit joins the developer didn’t ask for. Hibernate offers all three strategies (SINGLE_TABLE, JOINED, TABLE_PER_CLASS) but most teams pick SINGLE_TABLE because it’s the default and the fastest for small-scale CRUD.

STI-style tables start showing their cost around the 10-million-row mark. Every query now scans a table with dozens of nullable columns. Indexes have to include the type column to be useful. Adding a field to one subtype means adding a nullable column visible to every other subtype. The schema looks like a class hierarchy and performs like one table doing the job of four.

Complex relationships don’t fit class graphs. Many-to-many bridges with their own columns, polymorphic references (one column that points to different tables depending on a sibling column’s value), temporal tables, recursive self-references: once the data model has these, the object graph starts fraying. The ORM’s answer is usually a custom association that looks natural in code and generates SQL nobody would write by hand.

Normalization decisions are driven by access patterns, not classes. A well-designed schema decides what to normalize and what to denormalize based on read/write ratios, query patterns, and storage trade-offs. The ORM-first approach tends to normalize by class structure, which is mostly correlated with good access-pattern normalization at small scale and mostly uncorrelated with it at scale.

The coupling here isn’t only code to schema. It’s class-graph to schema-shape, and that second form is the one that dictates how the database performs under real traffic.

When scale exposes the modeling

The class-shaped schema is cheap at small scale. Its cost is hidden until the workload grows, and because the schema shape is coupled to the class graph the application assumes, fixing it isn’t a schema migration. It’s an application restructure. The ORM’s opinions about data modeling are fine at 1,000 rows. Tolerable at 1 million. Breaking at 10 million. At 100 million, the patterns that were quietly suboptimal become the production incidents of the quarter.

- Wide STI tables that scanned fine for 100k rows become the reason a query times out at 100M, because the planner can’t pick an efficient path through dozens of columns of mostly-null data with mixed cardinalities.

- Lazy-loaded associations that were 200ms at small scale are now 60-second requests fanning out to a thousand queries.

- find_or_create_by races that never mattered when two users hit the same endpoint now cause daily deadlocks on hot rows.

- Unindexed ORM-generated sorts that worked at 10k rows become sequential scans over hundreds of gigabytes.

- Connection-pool exhaustion from ORMs that hold connections across application logic becomes a top-of-funnel incident when traffic grows.
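The unindexed-sort case in the list above is the cheapest one to catch early, because the planner will say what it intends to do before the table is large. The sketch below asks SQLite for its plan before and after adding an index. SQLite is only a stand-in here: the plan text differs between engines and versions, and the users table, the index name, and the query are assumptions approximating what User.order(:created_at).limit(10) would generate.

```python
import sqlite3


def query_plan(conn: sqlite3.Connection, sql: str) -> str:
    """Return the planner's description of how it would run `sql`."""
    # The last column of each EXPLAIN QUERY PLAN row is the human-readable detail.
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql).fetchall()
    return " | ".join(row[3] for row in rows)


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT NOT NULL, created_at TEXT NOT NULL)"
)

# Roughly the SQL an ORM would emit for an ordered, limited listing.
ORDERED_PAGE = "SELECT id, created_at FROM users ORDER BY created_at LIMIT 10"

plan_before = query_plan(conn, ORDERED_PAGE)  # full scan plus a temporary sort structure
conn.execute("CREATE INDEX idx_users_created_at ON users (created_at)")
plan_after = query_plan(conn, ORDERED_PAGE)   # walks the index in order, no sort step

print("before:", plan_before)
print("after: ", plan_after)
```

Running a check like this against the queries an ORM actually generates, rather than the ones the team remembers writing, is one way to find the sorts and filters that will not survive growth.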

At this point, teams reach for tools that weren’t supposed to be in the solution space for an OLTP application. Materialized views are the common one. They’re legitimately useful for analytical workloads, wrong for write-heavy OLTP because they have to be refreshed, and refresh windows during traffic either stall the primary or serve stale reads. Read replicas with application-level routing get bolted on not because the read workload demands it, but because the primary is buckling under queries that would have been cheap on a better-designed schema. Caching layers get introduced to paper over query shapes the ORM insists on generating. Each of these has legitimate uses. None of them is a fix for a schema that wasn’t designed for the access pattern it’s getting.

The pattern: ORM-driven schemas work until they don’t, and when they don’t, the options are rewrite the schema (hard, because the ORM’s conventions are everywhere) or add infrastructure that papers over the problem (expensive, and eventually stops working too). The schema that was designed to be ergonomic for the ORM at 1,000 rows is now the binding constraint on what the application can do at 100M.

The thinner alternatives

There’s a spectrum between “hand-roll every query with database/sql” and “full ORM with identity map, lazy loading, and 200-line models.” Several tools occupy the middle ground by treating SQL as the source of truth and generating typed code from it, without the mapping layer.

- sqlc. Go, Kotlin, Python, TypeScript. You write SQL queries in .sql files; sqlc generates type-safe client code. The schema is canonical, the queries are code-reviewed SQL, and there’s no runtime layer to reason about. Migrations stay plain DDL.

- jOOQ. JVM. Reads your schema and produces a fluent, type-safe DSL for building queries. Feels like SQL, reads like SQL, with compile-time type checking. Schema-first, no model mapping.

- Kysely. TypeScript. Typed query builder with no ORM layer. You describe the schema in types; Kysely ensures queries match. The full SQL surface area is reachable.

- Drizzle. TypeScript. Despite the name, closer to a typed query builder than a classical ORM. Schema declared in code, queries written in a SQL-like DSL, no identity map.

- Plain database/sql or pgx with a small query helper. Go in particular has a tradition of “raw SQL plus a thin wrapper.” More boilerplate, minimal coupling.

The common thread across these tools: schema is the source of truth, queries are code-reviewed first-class artifacts, and there’s no mapping layer pretending the database doesn’t exist. The payoff is predictability; the SQL you see is the SQL that runs. The cost is some of the magic: no User.find(1).orders.where(total: 100..).first_or_create one-liners.
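To make the "raw SQL plus a thin wrapper" end of that spectrum concrete, here is a hand-written sketch in Python of the kind of code a schema-first generator produces: named queries, a plain record type, and one function per query. It is not the output of sqlc or any other tool listed above; the User type, the users table, and the function names are assumptions.

```python
from __future__ import annotations

import sqlite3
from dataclasses import dataclass
from typing import Optional

# The DDL is the canonical description of the data; the code below follows it.
SCHEMA = "CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT NOT NULL UNIQUE)"

# Each query is a named, reviewable artifact rather than something assembled at runtime.
GET_USER_BY_EMAIL = "SELECT id, email FROM users WHERE email = ?"
CREATE_USER = "INSERT INTO users (email) VALUES (?)"


@dataclass(frozen=True)
class User:
    id: int
    email: str


def get_user_by_email(conn: sqlite3.Connection, email: str) -> Optional[User]:
    """Run exactly one SELECT and map the row, or return None if there is no match."""
    row = conn.execute(GET_USER_BY_EMAIL, (email,)).fetchone()
    return User(id=row[0], email=row[1]) if row else None


def create_user(conn: sqlite3.Connection, email: str) -> User:
    """Run exactly one INSERT inside a transaction and return the stored record."""
    with conn:
        cur = conn.execute(CREATE_USER, (email,))
    return User(id=cur.lastrowid, email=email)
```

There is no identity map, no lazy association, and no callback chain: each function issues the one statement its constant names. The price is that every new query shape means writing another constant and another function.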

For long-lived OLTP systems with non-trivial query shapes, that predictability is worth more than the magic. For short-lived CRUD apps, it isn’t.

When ORMs still earn their place

ORMs have a place. It’s narrower than the industry’s default deployment suggests. The workloads where the velocity payoff consistently outweighs the coupling cost share two properties: they’re bounded in scope and they’re bounded in lifespan.

- Short-lived prototypes and experiments. Projects that will be rewritten, replaced, or discarded within a year. Model-first iteration is genuinely faster when the schema is fluid, and the coupling cost doesn’t compound if the project doesn’t live long enough to hit it.

- CRUD-heavy internal tools and admin UIs. Query shapes are uniform and simple, the workload won’t scale past the ORM’s comfort zone, and the system doesn’t outlive the product it supports. The ORM’s constraints function as a style guide rather than as a limit on what the application can do.

That’s the list. Not “projects where the team knows Rails.” Not “workloads with uniform query shape, for now.” Not “small teams.” Those framings start as short-lived exceptions and end up as the default, and once the project outlives its original scope the coupling cost compounds silently until it’s too expensive to remove.

The failure mode isn’t picking an ORM for a prototype. It’s keeping it ten years later, after the prototype has become the company’s main production system, after the workload has grown past its original shape, and after migrating off costs more than a rewrite of the application. Most of the ORM codebases engineers end up cursing started in one of the two bullets above and were never reconsidered when they outgrew them.

Trade-offs

Everything in this post has a counter-argument, and the counter-arguments are real.

- ORMs save real time on simple queries. User.find(1) is shorter than SELECT * FROM users WHERE id = 1. Across a codebase it adds up.

- Type safety in the application layer. Rails and ActiveRecord don’t give compile-time types, but Hibernate’s entity types do, and SQLAlchemy’s typed columns and Django’s model fields (with type stubs) give static checkers something to work with. Raw SQL’s answer is schema-first code generation (sqlc, jOOQ), which works but requires tooling.

- Domain modeling. Some teams legitimately want their data model to have methods, validations, and behavior co-located with the data. An ORM gives that for free; a query builder doesn’t.

- Team familiarity. A team that knows Rails deeply will out-ship a team learning sqlc for the same project. The right answer depends on the team, not the abstract merits.

- The middle ground isn’t free. Typed query builders require maintained type definitions. Schema-first code generation adds a build step. “No ORM” means a different abstraction, maintained by you.

The choice isn’t ideological. It’s a trade between two failure modes: the ORM’s coupling cost versus the query-builder’s boilerplate and maintenance cost. For short-lived systems, the ORM wins. For long-lived systems, the thinner layer wins. The catch is that most systems surviving their first year are long-lived, and most teams underestimate how long their system will live. If the project is still running three years from now, you’re probably in the second category whether or not you planned to be.

The bigger picture

The thing an ORM sells is a mapping between code and schema. The thing it delivers is a coupling. For short-lived projects (the prototype, the internal CRUD tool, the bounded experiment) the trade is worth it; the coupling cost is deferred, and by the time it would catch up the project has served its purpose or been replaced.

For projects that live long enough and grow complex enough (which is almost any project that survives its first year) the coupling becomes the dominant cost. Every major framework upgrade is a migration of its own. Every scale inflection requires working around the ORM’s opinions. Every query past the CRUD ceiling is raw SQL anyway. The better default for an application the team expects to still be running in three years is schema-first: keep the DDL canonical, keep queries as first-class code-reviewed artifacts, use a thin typed layer (sqlc, jOOQ, Kysely, Drizzle) to bridge to the application, and leave the ORM in the toolbox for cases that genuinely match its narrow strengths.

If you’re starting a project expected to live more than a year, default to schema-first. Inside an existing ORM codebase where the signals are showing up (raw-SQL ratio creeping up, migrations that require cross-team coordination, queries the ORM can’t express, performance paths that bypass it anyway) the useful question isn’t whether to migrate off. It’s where to draw the schema-first boundary for new work. Usually at new subsystems, not legacy code. Grandfather what’s there, pick up sqlc or jOOQ or Kysely for new code, and let the boundary move over years.