A new engineer adds a table to the analytics schema and runs into a build break: the CI lint rule complains about a missing soft-delete column. She checks ten other analytics tables. Eight have deleted_at. Two have is_deleted. One ignores soft-delete because its rows are immutable. She asks in #data-eng which convention applies and gets back “depends on the table, ask the original author.” Two of the original authors have left. She adds deleted_at TIMESTAMP NULL to match the majority, ships the PR, and the dashboard that aggregates across all eleven tables starts double-counting the rows where is_deleted = 1 overlaps with the new deleted_at IS NULL.

The convention the lint rule was meant to enforce never actually existed. The deleted_at pattern landed in 2017; is_deleted in 2019 from an engineer who preferred the explicit boolean; the no-soft-delete table in 2021 from a third engineer who argued (correctly, for that table) that soft delete didn’t fit the use case. Each decision was right in isolation. None of them got written down anywhere a tool could read. The lint rule enforces the column name. It cannot tell that the column name is meaningless when three patterns coexist for the same operation.

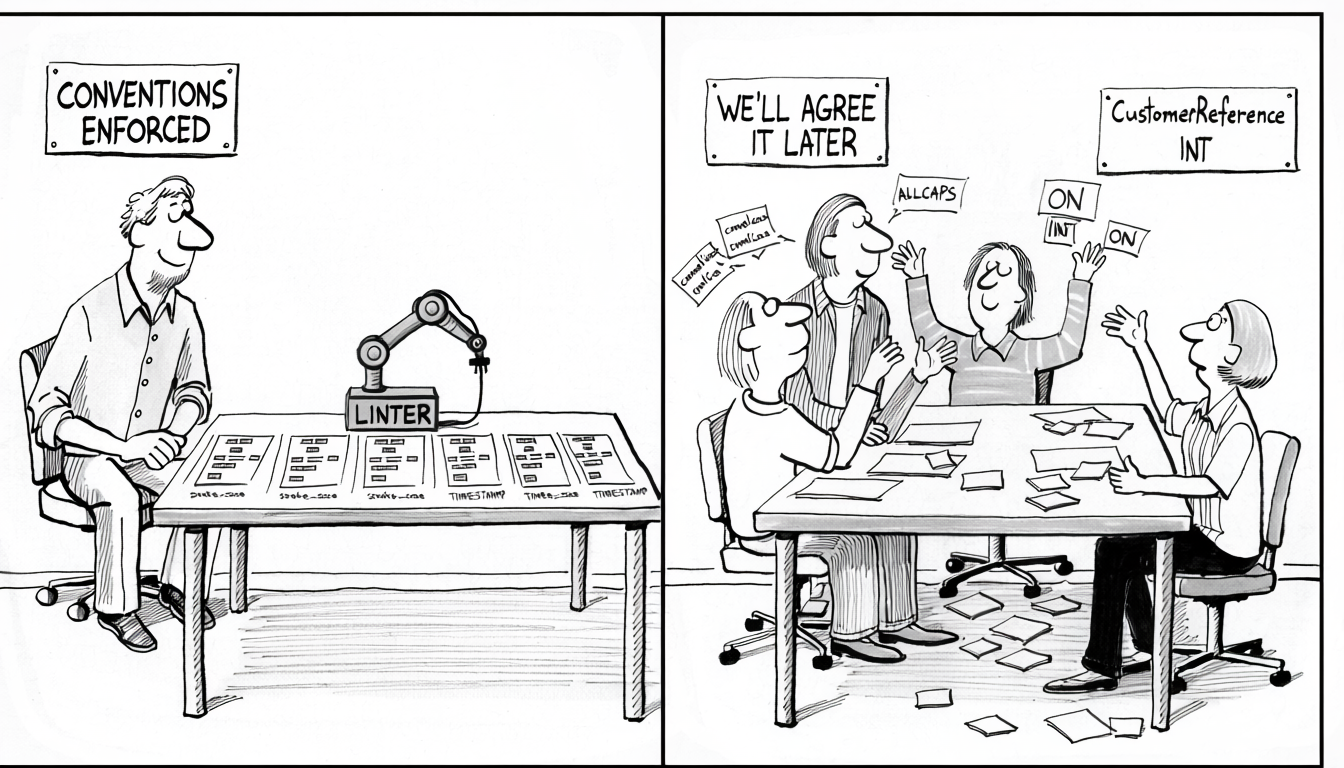

The corollary is the thesis of this post. Conventions only survive when a tool is enforcing them, and they only matter when the tool checks what they actually mean, not just what they’re called. Pick the ones a linter, ORM, or migration runner can check fully (column name, behavior, and consistency against the surrounding schema), enforce them in CI, and skip the ones the tool can only validate by name. Those drift the same way the team’s memory does.

What “conventions” means here

Conventions in this post means the decisions that apply across every table, not the design of any particular table:

- Naming.

snake_case,camelCase, orALLCAPSfor tables and columns. - Table names. Singular (

user) or plural (users). - Primary keys. Bare

idor<table>_id. BIGINT, UUID, or composite. - Foreign keys.

user_idreferencingusers.id, or ad-hoc names likeownerandcreator. - Mandatory columns.

created_at,updated_at,deleted_at,created_by. Which tables need them and which don’t. - Status and enum patterns. INT with documented values, CHECK constraint, or native ENUM. Zero-indexed or one-indexed.

- Boolean naming.

is_active,has_completed,can_edit, or bareactive/completed. - Timestamp types.

TIMESTAMP,DATETIME,TIMESTAMPTZ. Timezone-aware or naive. - Character sets and collations.

utf8mb4vslatin1;en_US.UTF-8vsC.

None of these have one right answer. All of them have consequences that multiply across the lifetime of the schema.

Humans benefit, but not durably

Consistent schemas are easier for humans. Onboarding is faster, review is mechanical, queries are predictable. These benefits are real. They’re also entirely dependent on something other than memory holding the convention in place.

A new engineer spends less time building a mental model when PKs, FKs, and timestamps are named the same way everywhere. True, and the convention enabling it exists only as long as someone is actively keeping it enforced.

A migration adding CustomerReference INT in a codebase where everything else is customer_id BIGINT gets flagged when conventions are consistent. True, and whether it actually gets flagged depends on whether the reviewer remembers the rule or a linter is enforcing it.

JOIN users ON orders.user_id = users.id works without a lookup when the convention is <table>_id. True, and the query is right only because every prior migration followed the rule, which is only the case if something kept them on track.

The pattern: every human benefit is downstream of enforcement. A rule that exists only because the current team agreed to it lasts exactly as long as that team does. People change jobs, preferences evolve, new hires bring their own instincts. Within a few quarters of turnover, a human-only convention is gone, and so is the benefit.

The reasons worth picking a convention are the reasons a machine can enforce it.

Why it matters for automation

Automation is the only thing that holds a convention over time. A linter fails the build when snake_case becomes camelCase and keeps failing until someone addresses it; a team agreement doesn’t. The tools below are both the enforcement mechanisms and, by that logic, the only reasons a convention is worth picking in the first place. If none of them apply to your stack, the convention probably isn’t worth the debate.

Every tool that touches the schema reads conventions implicitly. When conventions are consistent, the tool works without configuration. When they’re not, someone has to tell the tool how to handle each exception. Usually in a config file nobody maintains.

ORMs rely on naming rules. ActiveRecord assumes a table named users has a primary key id and that a user_id column is the foreign key. Deviate and you write explicit mappings. Every non-standard table adds a line of configuration; every belongs_to :author, foreign_key: :creator_ref is convention drift showing up as code. Other ORMs are more explicit but still benefit from predictable column names: autogeneration works, inference works, magic methods work.

Code generators produce better output. sqlc, Prisma, jOOQ, and similar tools read schema metadata and emit type-safe client code. Consistent naming means the generated output looks like hand-written code. Inconsistent naming produces getCustomerReferenceByUserId() sitting next to getOrderByUserId(), same concept, different shape, every caller has to remember the difference.

Migration tools depend on mandatory columns. Frameworks that manage created_at / updated_at automatically assume every table has them. Tables that omit these columns silently break the assumption: inserts work, updates work, but the “last modified” display in an admin UI shows null for some tables and not others.

Deployment pipelines assume a consistent migration shape. Migration runners that execute schema changes as part of CI/CD (Flyway, Liquibase, Alembic, Atlas, skeema) rely on migration files following a predictable naming and ordering convention, up/down scripts that mirror each other, and tables that don’t need per-case special-handling. Zero-downtime patterns like expand-and-contract assume updated_at exists for cache invalidation, that new columns are nullable or have defaults so old and new application versions can both write the table, and that soft-delete markers are consistent so rolling deploys across mixed versions don’t resurrect rows one version thought were gone. Every convention that drifts turns a deploy playbook into a per-table checklist, and the checklists are what get skipped under time pressure.

Schema diffing and drift detection depend on consistent shape. Tools like Atlas and skeema compare the desired schema (in version control) to the actual state of each environment and generate the migration to reconcile them. They work well when naming, types, and mandatory columns are uniform, and produce noisy diffs, false positives, and hand-maintained exception lists when they aren’t. Environment parity between dev, staging, and prod degrades the same way: the drift the team never notices becomes the one that breaks a deploy at the worst time.

Schema linters only work if there’s a rule to check. SQLFluff, sqruff, and similar tools can enforce naming conventions, require certain columns on new tables, reject forbidden types, and flag style issues. But the lint rule has to match the team’s convention. No convention, no rule. No rule, no enforcement.

Documentation generators like tbls and SchemaSpy produce browsable schema docs straight from the catalog. Consistent conventions make the generated output navigable. Inconsistent ones make it look like a dump.

Schema-reading LLMs and RAG pipelines have joined the same list. Copilot, MCP-backed agents, text-to-SQL tools, and retrieval-augmented coding systems pull column names and types from information_schema and pattern-match them against natural-language questions. When one table uses createdAt, another uses created_date, and a third uses date_created, the model either generalizes from the most-frequent variant and gets the other two wrong, or hedges and produces verbose conditional SQL. Uniform naming lets the model carry an assumption across tables without re-checking the catalog for every column; the accuracy gains from clean conventions stack on top of the 27% lift studies attribute to column comments alone. Conventions that were about making humans and codegen tools agree turn out to matter just as much for the machine-reading layer.

The common thread: tools treat conventions as a contract. When the contract holds, tools work. When it doesn’t, tools either break or force the team to maintain exceptions forever.

created_at must be a TIMESTAMPTZ or that FKs must be named <table>_id; the tooling silently starts expecting it. The moment a table violates the expectation, every tool built on it starts producing surprises. Conventions are a contract whether or not anyone acknowledges them, and the tools are the ones keeping score.The menu: pick what automation expects

Each decision below matters only if something in your stack cares about it. The notes below lean on what tools typically expect. Pick the option that matches your automation. If nothing in your stack cares either way, skip the decision; it won’t survive the next round of team change regardless of which side “won” the debate.

Naming: snake vs camel

snake_case is the idiomatic choice for PostgreSQL and MySQL. Unquoted identifiers in PostgreSQL are case-folded to lowercase, so created_at and createdAt both become createdat unless one is quoted, which means mixed-case names force every query to quote the column. camelCase works if the team is disciplined about quoting, but most teams aren’t. Pick snake_case unless there’s a specific reason not to.

Table names: singular or plural

Both work. Rails and Django default to plural (users). CREATE TABLE user will actually fail in PostgreSQL because user is a reserved word, which is an argument for plural. Singular reads cleaner in joins (user.id feels like “the user’s id”). This is the smallest decision on the list in terms of consequences. The real requirement is that whatever you pick, you use it everywhere.

Primary keys: id vs <table>_id

Bare id is shorter and matches the default of most ORMs. It also creates a subtle hazard: table_a.id = table_b.id is syntactically valid SQL that silently returns wrong results. <table>_id (so user_id on the users table) makes cross-table joins impossible to write accidentally, because the identifier tells you which table the ID belongs to.

The trade-off is that ORM defaults expect id, so using <table>_id means configuring every model. For teams that rely heavily on an ORM’s conventions, staying with id is pragmatic. For teams with more ad-hoc SQL, <table>_id pays off.

Foreign key naming

user_id referencing users.id is the convention most tools expect. Ad-hoc names like owner, creator, assigned_to, ref_id are sometimes necessary (multiple FKs to the same table need different names) but should be explicit about what they reference, either in the column name (owner_user_id) or in a schema comment. A column named owner with no comment and no FK is a question nobody can answer from the schema alone.

Mandatory columns

Decide which columns every table must have. Common choices:

created_at TIMESTAMPTZ NOT NULL DEFAULT NOW(). Row creation time.updated_at TIMESTAMPTZ NOT NULL DEFAULT NOW(). Last modification, driven by a trigger or application logic.created_by/updated_by. Audit fields, if the team needs them.deleted_at TIMESTAMPTZ. Soft-delete marker.

deleted_at and 20% don’t, every query has to remember which tables to filter and which not to. The queries that forget silently return soft-deleted rows from some tables and not others. Pick a rule (“every table has created_at, updated_at; soft-delete tables have deleted_at”) and apply it uniformly.Status and enum patterns

Three common strategies, each with trade-offs:

- INT with documented values.

status TINYINT NOT NULL COMMENT '1=active, 2=paused, 3=cancelled'. Compact, fast, relies on comments for semantics. Works across engines. - CHECK constraint.

status VARCHAR(20) CHECK (status IN ('active', 'paused', 'cancelled')). Self-documenting in the DDL, slightly larger storage, human-readable in query results. - Native ENUM. PostgreSQL has first-class ENUM types, MySQL has

ENUM(...). Compact and typed, but changing the set requires a schema migration; in PostgreSQL, removing a value is genuinely hard.

Any of these is fine. Mixing them (one table uses INT, another uses CHECK, a third uses ENUM) is what creates the problem. Every query that aggregates across tables has to handle three value formats.

Boolean prefixes

is_active, has_completed, can_edit make filter expressions self-documenting: WHERE is_active AND NOT is_deleted. Bare names like active or completed create ambiguity in review. Is this column a flag or a timestamp? Adjective or verb? Prefixing eliminates the ambiguity at no runtime cost.

Timestamp types

The choice matters more than the name. TIMESTAMP in MySQL auto-converts between UTC and the session timezone, which is usually not what you want. DATETIME stores the literal value with no timezone awareness. PostgreSQL’s TIMESTAMPTZ stores UTC with automatic conversion on input and output, the most forgiving option for most applications.

Mixing types across related tables is where silent timezone bugs come from. A created_at TIMESTAMPTZ on one table joined to a DATETIME on another will either implicit-cast or mismatch, depending on engine and version. Pick one per engine and apply it everywhere.

Character sets and collations

utf8mb4 in MySQL, UTF-8 in PostgreSQL. Anything else in 2026 is a legacy holdover. The subtle hazard: mixing charsets across columns causes joins between text columns to fail silently or return wrong results. PostgreSQL is stricter about this; MySQL is more permissive and more dangerous because of it.

Conventions beyond the schema

Schema conventions usually stop at the DDL, but the automation layer around the database depends on naming decisions that live outside it: secrets, endpoints, users, roles, hostnames, backup files, environment variables. Those names show up in Terraform modules, Vault paths, Kubernetes resources, IAM policies, service-discovery records, monitoring dashboards, and every deploy pipeline. When they’re consistent, the infrastructure is self-describing and IaC modules stay generic. When they aren’t, every piece of automation grows a special case.

Common places this shows up:

- Secret names.

prod/db/orders/primary/passwordvsprod-orders-db-pwvsorders_prod_password. A clear prefix/suffix pattern lets secret rotation scripts, IAM scopes (arn:aws:secretsmanager:*:*:secret:prod/db/*), and environment-promotion automation use wildcards instead of hardcoded lists. - Hostnames and endpoints.

db-orders-rw.internalanddb-orders-ro.internalfor reader/writer splits,db-orders-primary-0.us-east-1for cluster node addressing. Consistent patterns mean DR runbooks, connection pools, and failover scripts can resolve endpoints by transforming a base name rather than reading from config. - Database users and roles.

app_orders_rw,app_orders_ro,migration_bot,readonly_analytics. The role name should say what it can do. Teams without a convention end up withsvc_user_42,rails,monitoring, and nobody can audit privileges without a spreadsheet. - Database names.

orders_prodvsprod_ordersvsorders-production. Consistent environment placement (always suffix or always prefix) means wildcard grants, backup pattern matching, and cross-environment queries stay simple. - Environment variables.

DB_ORDERS_HOST,DB_ORDERS_USER,DB_ORDERS_PASSWORD_SECRET. A per-service naming convention lets config loaders and IaC modules generate the full variable set from a single identifier. - Backup and snapshot names.

orders-prod-20260420-0000vsbackup_orders_20260420. Retention jobs, restore runbooks, and compliance audits all read these names by pattern.

These aren’t schema conventions in the strict sense; they’re operational conventions that happen to be tied to the schema. They follow the same rules: pick a pattern, apply it everywhere, document it where the infrastructure code lives, and enforce it in the IaC linter (tflint, checkov) or the Kubernetes admission controller so new resources can’t be named off-pattern.

The failure mode is the same as inside the schema. A team with three secret-naming patterns needs a custom script per resource. A team with three hostname patterns runs DR runbooks twice as long as they should be. Operational conventions have the same compounding cost as schema conventions, in a different layer; the tooling to enforce them is different (IaC linters instead of SQLFluff), but the discipline is identical.

Enforcement: conventions without enforcement decay

Written conventions that nobody enforces last until the next person who didn’t read the doc. The only conventions that hold over years are the ones CI checks.

Schema linters

SQLFluff is the most popular for PostgreSQL and MySQL. It runs on migration files in CI and can enforce:

- Naming rules (

snake_caseonly, specific prefixes/suffixes). - Required columns on

CREATE TABLE(every table must havecreated_at). - Forbidden types (reject

TIMESTAMPin favor ofTIMESTAMPTZ). - Style (trailing commas, keyword casing, indentation).

The alternative is a custom linter, a script that parses migration files and checks them against a ruleset. More work to build but more flexible if the rules are unusual. Teams with strong opinions often end up here.

CI checks on the schema itself

Beyond linting migration files, a CI job can introspect the database after migrations are applied and assert properties of the final schema:

| |

If the result is non-empty, fail the build. This catches the migration that adds a new table without the mandatory columns, the case a file-level linter can miss if the CREATE TABLE was split across migrations.

Other useful assertions:

| |

Introspection-based checks run against the shape of the schema after migrations are applied; they catch drift the file-level linter can’t see.

Pre-commit hooks

Developer-machine enforcement: running sqlfluff on staged migration files before commit. Faster feedback than CI, but only works if every developer has the hook installed. Treat pre-commit hooks as a developer experience improvement, not as the real gate. CI is the gate.

CODEOWNERS on migration directories

Putting a small group of owners on migrations/ forces review by someone who understands the conventions. This is a human check, not a mechanical one, but it catches things the linter can’t (“this new table has all the right columns but the design is wrong”). The owner doesn’t have to be one person; a rotating review responsibility works.

Review templates

A PR template that includes a checklist for schema changes (“does this follow the naming convention? does it include mandatory columns? are the types consistent with existing tables?”) nudges the author to check before review. The cost is zero; the benefit is that most issues get caught before they reach a reviewer.

Scope: strict for new, lenient for legacy

The enforcement question that derails most teams: do existing tables have to meet the convention? Trying to retrofit decades of legacy is an impossible project; requiring only new tables to meet the convention is achievable. The practical pattern:

- New tables. Linter is strict. No exceptions without a documented reason.

- Existing tables. Grandfathered. Linter skips them or only checks newly-added columns.

- Legacy migrations. An explicit backlog, prioritized by frequency of use and onboarding pain.

This splits the problem into “hold the line on new work” and “improve legacy opportunistically.” Both are manageable. Trying to do both at once isn’t.

The hardest part: changing conventions without creating a new one

Conventions decay not because they were bad, but because they changed faster than the team could propagate the change. The result isn’t “the new convention”. It’s a schema with three coexisting conventions, none of which applies everywhere.

The discipline is straightforward, even if it’s not always followed.

Write the convention down

Before enforcement, before any migration, there has to be a single authoritative document: a SCHEMA-CONVENTIONS.md in the repo, or a runbook, or an RFC. Not a Slack thread, not tribal knowledge. Something a new engineer can read and apply.

The doc is short by design: a page or two, not a book. It answers “what naming convention do we use?” and “what columns does every table need?” and “which timestamp type?”. It doesn’t try to teach relational design. Short docs get read; long ones don’t.

Use a lightweight RFC process for changes

When someone wants to change a convention (switch from id to <table>_id, add updated_by as a mandatory column, move from INT to UUID primary keys) it goes through a written proposal:

- What’s changing and why.

- Impact on existing tables (migrate all, grandfather, or cutover by date).

- Impact on tools, ORMs, dashboards, and downstream consumers.

- Who decides (single decision-maker or review board).

- Explicit cutover date if changing for new work only.

The RFC doesn’t have to be heavyweight. A paragraph in a shared doc, reviewed by two or three people, approved by a named owner. The value isn’t the document. It’s the forcing function that prevents conventions from changing by PR comment.

Decide: migrate, grandfather, or both

Three options, each with a different risk profile:

- Migrate everything. Rename columns across the schema, update every query, every ORM model, every dashboard. This is the clean option and almost never the practical one. Retroactive renaming breaks downstream consumers the team may not even know exist: analytics jobs, exports, integration partners, cached query plans.

- Grandfather legacy, enforce on new. Old tables stay as-is; new tables follow the new rule. The schema ends up with two conventions coexisting, but it’s predictable: “tables before this date use X, tables after use Y.”

- Cutover with a migration window. Pick a date, migrate the highest-traffic or highest-visibility tables before the date, grandfather the rest, close out the long tail opportunistically.

The grandfather option is the most common in practice because it respects the reality that the schema is a shared resource nobody fully owns. Write the decision down (“before 2025-Q3, tables used camelCase; after, snake_case”) so future engineers know the split exists and isn’t a bug.

The two-generation rule

This is a heuristic, not a hard rule, but it’s a useful test. When a proposed change would create a third convention without a plan to eliminate one of the existing two, the change probably isn’t worth it.

When to accept legacy drift

Not every legacy convention is worth fixing. The calculation:

- How often does the old convention cause bugs? Column names nobody can remember, types that force implicit casts, missing mandatory columns that break tooling. Real costs, worth migrating.

- How often is the table touched? A table used by ten queries a day is different from one used by ten thousand. Migration risk scales with usage.

- What breaks downstream? ORM models, dashboards, exports, cached plans, monitoring. Every consumer of the table name or column name has to update. If the count is unknown, it’s higher than you think.

- Is there a cheap alternative? A

VIEWthat exposes the table under the new convention, while the underlying table keeps its legacy name, can bridge the gap without a full migration.

The honest answer is often “leave it alone and document why.” A comment in the schema, or a note in the conventions doc, is cheaper than a migration and accomplishes the main goal: making the inconsistency visible and intentional.

Trade-offs

Conventions have a cost. A rule that doesn’t serve automation is noise. It takes space in the conventions doc, invites bikeshedding in review, and adds nothing to the schema’s consistency over time, because there’s nothing to keep it from decaying the moment the people who cared move on. The heuristic: if no tool fails when the rule is violated, the rule doesn’t need to exist.

Over-specifying is the second failure mode. A team with thirty linter rules will find a way around them or ignore them. Rules that block common, legitimate cases get bypassed with -- noqa comments until the linter stops being a gate.

The lightweight approach:

- A small set of rules, each one tied to a specific tool that cares (naming, mandatory columns, forbidden types).

- A larger set of advisory warnings, not blockers.

- A clear escape hatch for exceptions, with the exception documented.

- Periodic review. Rules that fire too often are wrong; rules that never fire are noise.

Strict conventions are a feature up to the point where the enforcement matches the rule count. Beyond that, they become a tax on every change. The right level is the smallest set automation will actually enforce without constant arguments.

The bigger picture

The useful question is what your automation needs, and whether a machine can enforce it. If yes, pick the convention your automation needs and wire it into CI. If no, skip the decision; debating aesthetics in the absence of enforcement produces nothing that will still be true a year from now. People change, teams turn over, preferences drift. A convention enforced by a linter doesn’t care who wrote the migration; a convention enforced by “we agreed last quarter” does.

The schemas that age well are the ones where the only surviving conventions are ones a linter, ORM, migration runner, or IaC module is actively enforcing. Everything else (bikeshed questions about singular vs. plural, religious debates about column ordering) drifts the moment the people who cared stop working there. That’s the predictable result of anchoring a rule to something as ephemeral as a team’s current preference.