A senior engineer asks an assistant to write a query returning each user’s total order value. The codebase has used soft deletes for three years; deleted_at columns are on most tables. The model produces:

| |

The query compiles. It runs. It returns numbers. It’s wrong: there’s no o.deleted_at IS NULL predicate, so soft-deleted orders inflate every total. The reviewer skims the diff, sees LEFT JOIN, sees COALESCE, sees deleted_at IS NULL somewhere in the WHERE clause, approves. The bug ships. A finance dashboard over-reports revenue by 7% for two months until a customer flags a discrepancy against their own records.

The new shape of database failure

The bugs an LLM introduces don’t look like junior-developer bugs. Junior devs ship SQL that doesn’t compile, throws an obvious type error, or returns nothing at all: the kind of failure CI catches by running the query once. The model ships SQL that compiles, runs, and returns something, just not the right something. It pattern-matched on a million open-source codebases and missed the rule that lives only in yours: the soft-delete convention this team adopted three years ago, the multi-tenancy filter every query is supposed to carry, the polysemic TINYINT whose 0 means “unknown” in one column and “free tier” in another, the denormalized counter that has to be bumped in the same transaction as the row it counts.

The obvious response is “review AI-generated code more carefully.” That doesn’t survive contact with how reviews actually happen. Engineers using AI assistance score measurably lower on comprehension quizzes about the code they shipped; they read the output less carefully than the code they would have written themselves, the syntax is right, the change is small, and the reviewer ratifies. Even if that effect didn’t exist, the reviewer who can spot the missing o.deleted_at IS NULL is the same reviewer who would have written the predicate in the first place, which is exactly the value AI was supposed to provide. “Review more carefully” asks the team to do a check the AI was supposed to obviate.

It also misses how broad the surface is. The same reasoning shape (plausible SQL that pattern-matches on training data and misses the local rule) produces migrations that lock production at scale, upserts that assume a UNIQUE that was never declared, indexes the planner won’t use, and CHECK constraints that allow the state transition the team wanted to forbid. The SQL is syntactically valid in every case; the EXPLAIN is clean; the failure only appears against real data, real concurrency, real volume, or the conventions that live in nobody’s head but the team’s.

What the experienced engineer was doing that CI doesn’t

Before the model wrote that LEFT JOIN, an engineer who had been on the team for a year would have done several things in their head:

- Recognized the soft-delete convention from the column naming pattern and added

o.deleted_at IS NULLto the join, because the team’s rule is “every query that isn’t an audit filters soft-deletes on every joined table.” - Considered whether the report should attribute orders to soft-deleted users at all (probably not for revenue; possibly yes for retention analysis) and asked the requester instead of guessing.

- Recognized

o.amountis nullable for refund rows and decided whether refunds count toward the total or not. - Checked whether the

orderstable has atenant_idcolumn the multi-tenancy filter requires, and added the predicate the codebase’s row-level security depends on. - Recalled that an earlier version of this exact report shipped six months ago with a JOIN through

order_items, doubled every total, and required a backfill, and chose the simpler aggregation deliberately.

None of these checks is in CI. Each is the kind of context the first post in the AI series describes: information the schema doesn’t carry, that lives in the engineer’s head, that the model has no way to read. That post argues for putting as much of that context back into the catalog as possible: declared FKs, column comments, named constraints, conventions. The work raises the floor; it doesn’t change what’s true here. Even with a perfectly described catalog, no schema declaration says “this team’s rule is to filter soft-deletes on every joined table” or “the previous version of this query had a JOIN cardinality bug, don’t repeat it.” Some classes of risk only exist at runtime against real data, real conventions, and real history, and the test suite is the only place those conditions can be reproduced before production.

Confidence is anti-signal

The dangerous dynamic isn’t that the model gets things wrong. It’s that it sounds most confident exactly where it’s most likely wrong. Ask for a basic SELECT with a JOIN and the output is right, no hedging. Ask for NULL semantics in an outer join, timezone arithmetic across a DST boundary, isolation-level behavior under contention, dialect-specific JSON path syntax, or a window-function frame clause, and the output reads with the same calm tone. The probability of correctness has dropped; the prose around it hasn’t.

Human reviewers anchor on tone. There’s no visual signal in the diff that says “this part lives in a region where the training data is sparse and contradictory.” A query that drops rows because of an unintended tie in ORDER BY created_at LIMIT 100 OFFSET 200 reads exactly like a query that doesn’t. A CHECK that lists valid values but doesn’t constrain transitions reads exactly like one that does. The reviewer’s internal calibration (“this part looked sketchy, I’ll dig”) is fed by uncertainty cues that the model doesn’t emit. “Review more carefully” cannot fix this, because the things worth scrutinizing don’t look worth scrutinizing.

Volume changes the math

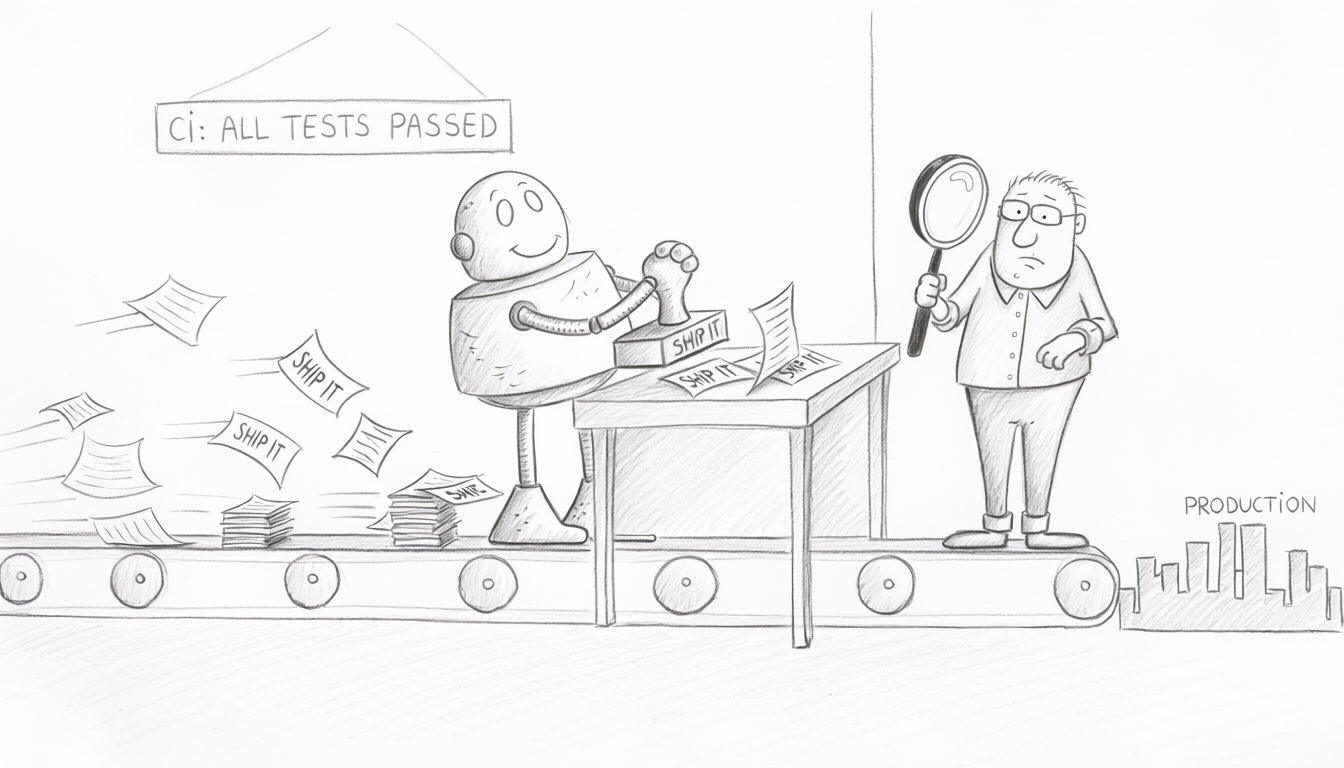

The bugs above existed before AI. What changed is the rate. A team that used to ship eight migrations a week now ships eighty, because the cost of writing one collapsed and the cost of reviewing one didn’t. The reviewer who used to give each migration ten minutes now gets one; the senior engineer who would have caught the soft-delete bug on her own change has eight more changes in queue waiting for the same scrutiny. The implicit human review didn’t disappear, it got rationed.

The acute version of this is the wrong query that ships to production. The chronic version is schema drift, and it’s worse. Naming conventions decay because nobody enforces them on every PR. Redundant indexes accumulate because the model suggested one without checking what already exists. NOT NULL constraints get dropped because the migration was failing and removing the constraint made it pass. Soft deletes get reinvented every quarter because each AI session starts fresh on the team’s conventions, and “fresh” plus “confident” plus “eighty PRs a week” is how a codebase ends up with three different ways to mark a row deleted, all in production.

None of this is catastrophic in any given week. The compounding cost shows up eighteen months later, when query plans degrade, an ORM upgrade exposes the inconsistencies, or a new engineer can’t tell which of three “current” patterns to follow. The test suite isn’t only catching the acute incident; it’s the artifact that resists the drift, because every assertion the team writes is a convention written down where the next session can’t unlearn it.

Murphy’s Law used to apply intermittently. With AI in the loop, it applies 100% of the time. Every weak corner of the test suite gets exercised on a weekly cadence, and what used to be a rare edge case becomes the load-bearing case.

The failure mode is broader than wrong queries

The soft-delete bug is one shape. The same dynamic produces every other class of database failure AI introduces:

- Migrations that lock production at scale. Asked to add a

tiercolumn tousers, the model producesALTER TABLE users ADD COLUMN tier TINYINT NOT NULL DEFAULT 0. CI’s empty-database migration test runs in 200ms and passes; in production, MySQL rewrites all 50 million rows under a metadata lock for 40 minutes during business hours. The DDL is syntactically valid; the failure is volumetric, and CI is structurally incapable of seeing it. - JOIN cardinality bugs that produce plausible numbers. An AI-generated

LEFT JOINwith a predicate placed in theWHERErather than theONclause filters out the unmatched side; a JOIN through a bridge table without compositeUNIQUEmultiplies aggregations. The result set has the right shape, the row count is plausible, the number is wrong. Catching it requires a test that asserts the result against a known dataset where the failure mode would change the count. - Upserts assuming undeclared constraints.

ON CONFLICT (email) DO UPDATEis correct only ifemailactually has aUNIQUEconstraint. Without it, PostgreSQL throws the moment the planner sees no usable arbiter index; in some MySQL configurations, the equivalentINSERT ... ON DUPLICATE KEY UPDATEsilently inserts duplicates because there’s no key to conflict on. The model writes the upsert from the column name and the question, doesn’t check the catalog for the constraint, and the test database has no concurrent writers exercising the path. - CHECK constraints that look right and aren’t. Asked to “prevent transitioning from

cancelledtoactive,” the model emitsCHECK (status IN ('pending','active','cancelled')), which lists the valid values but doesn’t constrain transitions. The constraint is syntactically right, semantically wrong, and indistinguishable from a working constraint until anUPDATEin production succeeds where it shouldn’t. - Indexes the planner will ignore. Asked to speed up

WHERE LOWER(email) = ?, the model addsCREATE INDEX ON users (email). The planner can’t use it for the function call; the query stays slow; the EXPLAIN was never inspected because the index was the visible “fix.”

Every one of these is detectable. None of them is detected by the kind of test most teams run.

What “test the database” actually means today

For most teams, “we test the database” means one of two things:

- The application’s unit tests use a real database (often SQLite or an empty Postgres) for fixtures, and they pass.

- CI runs

db:migrateagainst a fresh empty database before the test suite, and it doesn’t error.

Neither is testing the database. The first tests the application’s happy path with a database underneath. The second tests migration syntax. Both leave the entire surface above untested. The class of failure AI introduces - semantically valid SQL that misbehaves under realistic conditions - passes both checks every time.

What’s missing is a layer of tests that asserts against the database’s actual behavior on representative data: the migration finishes within a duration budget; the constraint rejects the values it claims to; the query, run against a known fixture, returns the expected count and the expected aggregate; the index in the EXPLAIN is actually used; the schema invariants the team thinks they have are present and behave the way the team thinks they do. These categories of test exist. Tools for each have existed for years. Most teams have never written them. Pre-AI, the teams that didn’t write them got away with it because the engineer writing each migration and each query was applying the checks in their head: slowly, with friction, sometimes wrong, but applying them. Post-AI, that engineer is increasingly not in the loop, and the only thing left is what’s actually written down.

Part 2 covers what to test in each category and the tools that exist for each.

When this doesn’t apply

The argument is conditional: if AI is writing any portion of your data layer, you need this. Cases where the conditional doesn’t hold:

- AI isn’t writing your migrations or queries. The team uses AI for application-level boilerplate; SQL and schema changes are still hand-written. The implicit human review is intact for the data layer, and the case for tests is the same as it was before.

- The database is small and single-writer. A 200-row admin table maintained by one service. Lock duration on

ALTERis microseconds, JOIN cardinality is unambiguous, the surface AI can hurt is small enough to manage by reading every diff. - The work is read-only and a domain expert validates each result. An analyst uses AI to draft queries and reviews each result against expectations. The AI is generating drafts, not shipping queries. The domain expert is the test, the same way the senior engineer used to be.

- The data is throwaway. Dev environments, ephemeral analytics, throwaway scripts. The cost of being wrong is low and the failures are reversible.

For everything else (production-facing systems, multi-writer schemas, data the business depends on) the conditional holds.

The bigger picture

Automation moves verification work; it doesn’t eliminate it. Pre-AI, “we don’t really test the database” worked for most teams because the engineer writing each migration and each query was the test, applying dozens of unwritten checks per change. The check was slow, expensive, and unreliable, but it existed. Post-AI, the engineer is increasingly not in the loop, and the team’s database verification is whatever the test suite explicitly asserts. The useful choice isn’t whether to verify; it’s whether to verify in CI or in production.

The picture worsens with agents in the loop. An agent that runs migrations, executes restores, or modifies schemas under its own permissions doesn’t have an implicit human review even at the moment of execution. A model that’s right 99.9% of the time, given a thousand operations a week, ships a serious incident every two weeks (and “right 99.9%” is generous). The test suite is what makes any of that safe; the cost of building it is a fraction of the cost of one production incident, and AI made the math explicit.

Part 2 covers what each layer has to assert and the frameworks (Squawk, pgTAP, Testcontainers, lock-duration probes, result-regression diffs) that already exist for each category.