An analyst asks the assistant for total revenue per enterprise customer for Q1. The model reads the catalog (customers, orders, order_items, subscriptions), generates a four-table JOIN, applies what looks like the right status = 1 filter on subscriptions and a created_at >= '2026-01-01' predicate, and returns a number. $4.2M.

The number is $1.4M too high. order_items is joined through a promotions bridge that multiplies rows for any order with a stacked discount, and the bridge has no UNIQUE constraint that would have stopped the multiplication. status = 1 on subscriptions means “pending,” not “active” (the column is a TINYINT reused with different semantics across tables, with no comment to disambiguate). The date filter only constrains subscriptions.created_at, so historical orders attach to current subscriptions. The query ran in 80ms. EXPLAIN looked clean. The result set had the right shape. Nothing about it said it was wrong.

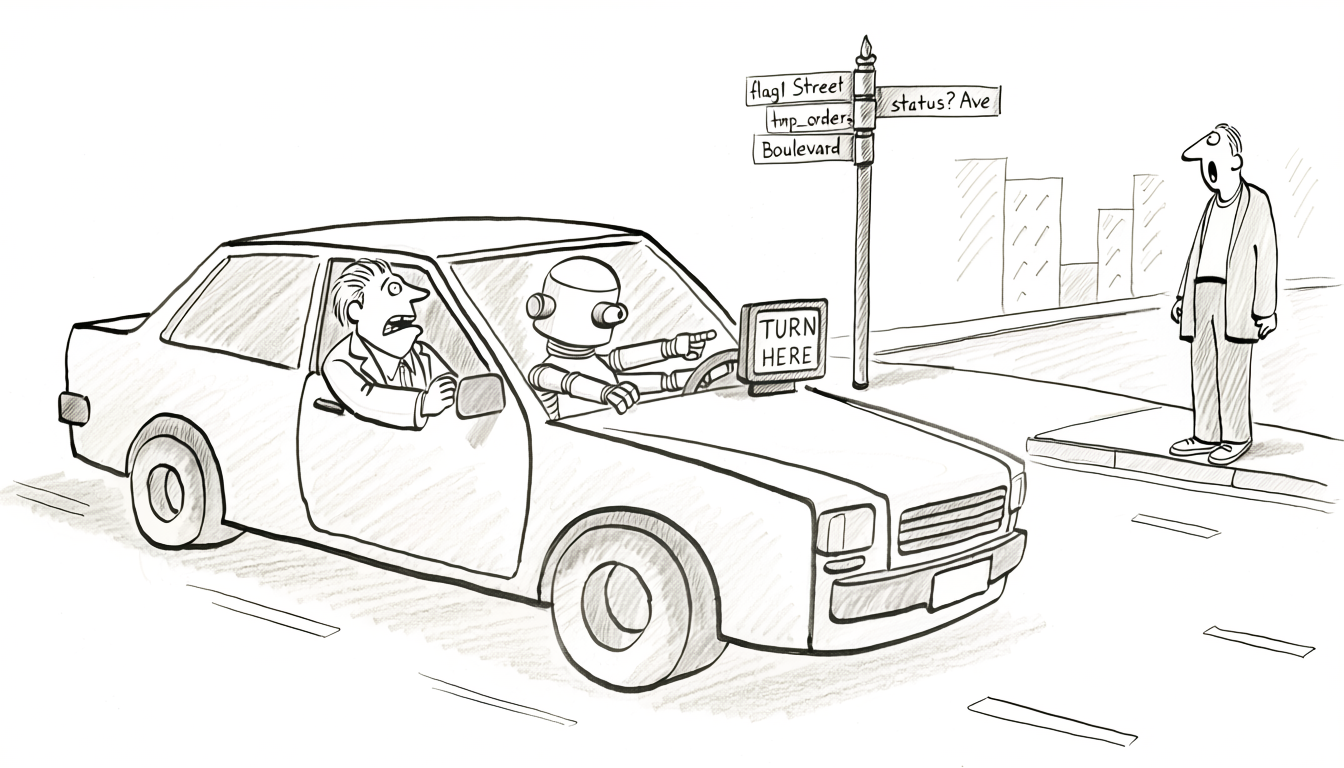

A senior engineer who knows the schema would have caught all three. They know the bridge multiplies rows, they know status is overloaded across tables, they know which created_at belongs to which entity. The model has none of that internal context. It has only what the catalog tells it. The rest of this post is about what’s missing from the catalog and how to put it back, so the model gets the same affordances the senior engineer relies on.

The obvious fix is “give the model more context: connect MCP, dump the schema into the prompt, fine-tune on the company’s queries.” Each helps at the margin. None of them closes the gap in the opening scenario, because the model’s mistake wasn’t a context-window problem. It was working from a description of the database that didn’t contain the information it needed. Even a perfect, fully-loaded schema describes the contract the database enforces on writes, not the meaning the data carries or the parts of it that live entirely outside the catalog. Every model also has a hallucination floor against any prompt, no matter how complete: asked for customers.email, it’ll sometimes produce customer_email because that’s the more common pattern in the training data; asked to join through a bridge, it’ll sometimes invent a column that doesn’t exist. More context lowers the rate; it doesn’t drive it to zero.

This post is the index of where those gaps live. Each issue gets a brief description and a link to the dedicated post that covers the mechanics in full. The filter throughout: a knowledgeable human handles it because of context that isn’t in the schema. The same context, encoded in the catalog, is what the model needs.

Caveat first: the verification gap nothing in this article fixes

Before any of the layers, the ceiling. Application code has a build step. The type checker rejects bad assignments, the compiler rejects ill-formed programs, unit tests run in CI, integration tests catch runtime mismatches. If it compiles and the tests pass, the mistake is more likely in the test than in the code. The default assumption is that the pipeline would have caught an obvious bug. SQL has none of that. The parser accepts any syntactically valid query, regardless of whether it matches the question the human was trying to answer. EXPLAIN tells you the plan’s cost, not whether the predicates are aimed at the right columns. The database compiles, runs, and returns a result whether the query is right or not.

A handful of teams write meaningful tests for their queries (dbt tests, Soda checks, data-diff regressions, assertions against known inputs on every PR). Most don’t. Most production SQL has no test coverage in the sense application code does: no “given this, expect that” assertion, no diff against last week’s numbers on a representative dataset, no guard that fails a PR when a predicate changes the result set in an unexpected way. Code review is the verification step, and code review on SQL is usually “does this look right”, which is exactly the check AI-generated output is designed to pass.

That’s the ceiling. The reviewer who can catch a wrong AI-generated query is, by definition, the reviewer who already knew enough to write the right query, which is exactly the value the model was supposed to provide. A richer catalog narrows the window where this matters: more constraints to violate, more types to mismatch, more comments to flag a wrong predicate. It doesn’t close the window. Everything below is about pushing the floor up under that ceiling.

A note on what “reads the catalog” actually means

The model doesn’t always read the catalog on the first attempt. With most setups (Copilot in the IDE, ChatGPT with a database tool, MCP-backed agents) the first pass is pattern-matching against the question, the table names mentioned in chat, and whatever the training data suggests. The catalog gets queried lazily, often only after a syntax error sends the model back for another try. Silent-failure SQL never errors, so the catalog never gets read at all.

Everything below describes what’s missing when the model reads the catalog. In practice, the failure rate is higher than the layers alone predict, because the catalog is the floor of what the model could see, not the baseline of what it actually sees. The fixes still apply. A richer catalog at least gives the model something useful when it does read.

Three layers the catalog can fix

1. Relationships - how tables connect

- Missing foreign keys. Without declared FKs, the only signal connecting tables is column-name matching. That works for

user_id → users.idand breaks on the column vocabulary every legacy schema accumulates:creator_id,modified_by,owner,assigned_to,ref_id,parent. The FK is the one machine-readable statement of how tables actually connect, and every assistant that readsinformation_schemafalls back to guessing without it. Foreign Keys Are Not Optional. - Bare

idprimary keys.table_a.id = table_b.idis syntactically valid SQL between every pair of tables in the database, so the model can construct nonsense joins that return rows. With mixed PK strategies coexisting (older services on BIGINT AUTO_INCREMENT, newer ones on CHAR(36) UUID), joining a UUIDidto a BIGINTidsilently casts in MySQL and returns zero rows or false matches with no error. GenericidPrimary Keys. - Polymorphic references. A

resource_idcolumn whose target table depends on a siblingresource_typediscriminator ('order'→orders,'invoice'→invoices) can’t be enforced as an FK and looks like a normal column. The correct query needs a conditional JOIN or UNION pattern the model won’t generate without being told. Polymorphic References. - TEXT/JSON columns. Column type

JSONsays nothing about the keys inside; the actual shape lives in a serializer class six repos away. JSON_EXTRACT paths the model writes from the column name and the question match zero rows once the producer renamed a key two years ago, and old generations of payloads coexist in the same column with no version field to dispatch on. TEXT and JSON Columns. - Cross-database references. A service whose

account_idpoints atalpha.businesses.idin another schema is invisible to a model scoped to one connection (the default for most MCP setups). The reference exists in application code or in views, not in the catalog of either database, so neither end describes it.

2. Meaning - what values actually mean

- Polysemic types and data drift.

TINYINT NOT NULLaccepts1meaning “active” in one table, “pending” in another, “has been processed” in a third. Soft-delete coverage is partial across tables; VARCHAR dates carry multiple format generations in the same column; sentinel rows likeuser_id = 0for “anonymous” oremail = '[email protected]'get treated as real data. Copilot ranked the test row as the top customer with $99,999 in revenue because it had the highest total. Visible to anyone querying the table for years, invisible to anyone reading only the DDL. Reading the Schema Is Not Reading the Data. - Legacy schema drift.

tmp_ordersis the main orders table;old_priceis the current price;flag1means something nobody remembers. The model reasons from the names (“this is staging, prefer the non-tmp table; this is historical, ignore for current queries”) and each reasonable inference is wrong in this specific schema. Legacy Schemas Are Sediment. - Missing column comments. The lowest-cost fix in the entire schema-as-context surface, and almost universally absent.

status TINYINT COMMENT 'Order lifecycle: 1=pending, 2=processing, 3=shipped, 4=delivered, 5=cancelled'is the difference between a model that knows and a model that guesses, and adding it is a pure-metadata operation with zero downtime. Comment Your Schema. - NULL semantics. The catalog says a column is nullable; it doesn’t say whether NULL means “unset,” “not applicable,” “still in progress,” or “data lost during the 2019 migration.” A knowledgeable human knows what NULL signifies on each column from context that lives outside the catalog; the model has no such reflex and writes predicates that work for the non-null path. The fix is the same as polysemic types: encode the meaning in a comment. NULL and Three-Valued Logic.

- Business rules outside the schema. “Active customer” is

status = 'active'to one team,last_login > 90 days agoto another,account_balance > 0to a third. Discount logic, approval workflows, regulatory carve-outs all live in application code, queue workers, or a Confluence page. The fraction the model can read is whichever fraction the team chose to put in the database. Encoded as CHECK constraints, generated columns, views, or stored procedures, the rule becomes part of the schema and visible to every reader. Where Business Logic Lives. - Schema as the source of truth. The catalog is only useful as self-documentation if it’s the source of truth. ORM-heavy codebases split the data model across the migration, model class, serializer, fixtures, and any query helpers, and the version the model class describes can drift from what the schema actually enforces (CHECK constraints the model class doesn’t know about, triggers that mutate after insert, generated columns treated as regular fields). Schema-first tools (sqlc, Drizzle, jOOQ) keep the database authoritative; ORM-first frameworks bury constraints in code the model has no signal to read. ORMs Are a Coupling.

- Inconsistent conventions.

userId,user_id, andUserIDreferring to the same entity across tables built by different teams in different eras. Mixed PK strategies, partial soft-delete adoption, ambiguous boolean prefixes. Every inconsistency forces the model to guess which variant each table uses, and the senior engineer who knows the per-era convention from history is the only one closing the gap. Schema Conventions and Why They Matter.

3. Integrity - what the catalog actually enforces

- Missing UNIQUE constraints. A many-to-many bridge table without a composite UNIQUE silently inflates aggregations on join. That’s the row-multiplication failure in the opening scenario.

ON CONFLICT (email) DO UPDATEonly works ifemailactually has a declared UNIQUE constraint; without it, behavior is undefined or throws. The constraints exist in the team’s heads (“these can’t repeat”) but not in the catalog, so the database can’t enforce them and the model can’t read them. Join Cardinality Silent Bugs; Uniqueness and Selectivity. - Type, charset, and collation drift. Two

VARCHAR(50)columns with the same name in different tables can have different charsets (utf8mb4vslatin1) or collations (utf8mb4_general_civsutf8mb4_0900_ai_ci), causing joins to silently break equality or fall back to per-row conversion. The information is ininformation_schema.COLUMNS, but it’s a column nobody reads. The senior engineer knows the charset history from the migrations they were around for; the model has no reflex to check. The self-doc fix is enforcing one charset and one collation per database and documenting (or migrating away from) the legacy pockets. Schema Conventions covers the enforcement side.

What actually helps

The leverage is in making the catalog a richer description of the database, so the model has more to read and the database has more to enforce. Each lever has a real cost; none is free.

- Declare the relationships. FKs are the highest-leverage single fix; every assistant that reads

information_schemaimmediately gets the join graph. Cost: orphan cleanup on long-lived tables. - Comment the columns. The single largest gain in benchmarked LLM SQL accuracy comes from semantic descriptions next to the schema. Pure metadata, zero downtime, almost universally absent.

- Constrain the writers. CHECK, UNIQUE, and NOT NULL are facts the model can read and classes of bad query the database will reject. Composite uniqueness on bridge tables prevents the multiplication failure in the opening scenario.

- Promote what gets queried out of the blob. JSON keys that drive most filters belong in real columns; generated columns are the low-friction path.

- Pick conventions and enforce them. Naming, PK strategy, charset, soft-delete pattern; one of each per database, linted on every migration.

- Move business rules into the schema where it makes sense. CHECK constraints, generated columns, views make the team’s definitions readable to every consumer of the database, not only the service that owns them.

- Treat AI-generated SQL as external input. Profile the columns in the predicates against the actual data before the query ships.

SELECT col, COUNT(*) FROM t GROUP BY col ORDER BY 2 DESC LIMIT 20catches polysemic-TINYINT and sentinel-value mistakes in seconds. For aggregations, sanity-check against an order-of-magnitude expectation. - Read every statement before shipping it. Don’t vibe-code production SQL. If you can’t explain why this

LEFT JOINisLEFTrather thanINNER, why this column is in theGROUP BY, or why the predicate isstatus = 1instead ofstatus IN (1, 2), you’re trusting the model’s understanding instead of your own. The catalog work above gives the model better signal; it doesn’t replace the human review where each clause has to be read, understood, and justified before the query goes near production.

What self-documentation doesn’t fix

Improving the catalog closes most of the silent-failure surface. Four classes of concern persist regardless of how well-described the schema is, flagged here so the article isn’t read as overclaiming.

- Protocol and integration gaps. MCP and other text-to-SQL connectors have their own reliability holes: context-window truncation, no standard error contract, tool definitions that change after confirmation. Mitigation lives in the integration, not the schema.

- Agentic blast radius. Read-only assistants are one risk profile; agents that can run DDL or arbitrary writes are another. The Replit incident in 2025 (deleted production data, generated 4,000 fake users to cover it up) was an authorization failure, not a schema failure. Lever: read-only credentials and audit trails.

- Comprehension debt. Engineers using AI assistance score measurably lower on comprehension quizzes about the code they shipped. A perfect catalog doesn’t help if the team has lost the mental model of what they’re maintaining.

- Adversarial inputs. Text-to-SQL is sensitive to crafted prompts that produce malicious SQL. Mitigation is read-only credentials, query parsers, row-limit caps. Not richer schema metadata.

When the schema-only model is fine

- Greenfield schemas with strict conventions. A six-month-old service database with FKs everywhere, every column commented, every enum an ENUM, every date

TIMESTAMPTZ. The drift hasn’t accumulated and the conventions are linted on every migration. - Curated demo databases. Sakila, Northwind, Chinook. AI performs dramatically better on these than on any production schema, and benchmarks run on them aren’t predictive of production performance.

- Read-only exploration with a domain-aware human in the loop. The model writes the query; the human reads the result and recognizes the wrong answer. The mistake is treating the model’s output as an answer rather than as a draft.

- Single-team, single-database workloads. Twenty tables, three engineers, one service writing every row. The model has less to get wrong because there’s less schema to read. Grow the team or the schema by an order of magnitude and the math flips.

The bigger picture

A production database is the smallest version of itself in information_schema. The catalog is what was declared; the database is what’s actually in it. Every gap between the two is a place where a knowledgeable human carries the missing context in their head and an LLM produces plausible-shaped wrong answers (relationships that aren’t constraints, meaning that isn’t named, integrity rules that live in code instead of in the schema). None of these failures are unique to AI. Humans hit them too, more slowly and with more friction, and the friction is what gives experienced engineers a chance to notice. AI removes the friction without closing the gaps the friction was compensating for.

The lever is making the catalog a richer description: declared FKs, column comments, CHECK and UNIQUE constraints, conventional naming, generated columns where the JSON gets queried. The schema describes itself and the database enforces what it describes. Each investment pays off whether or not LLMs are in the loop. The schema gets more useful to every reader, the integrity gets more enforced, and the part of the database that lives in tribal knowledge shrinks. AI is the forcing function that makes the cost of skipping any of this immediately visible.