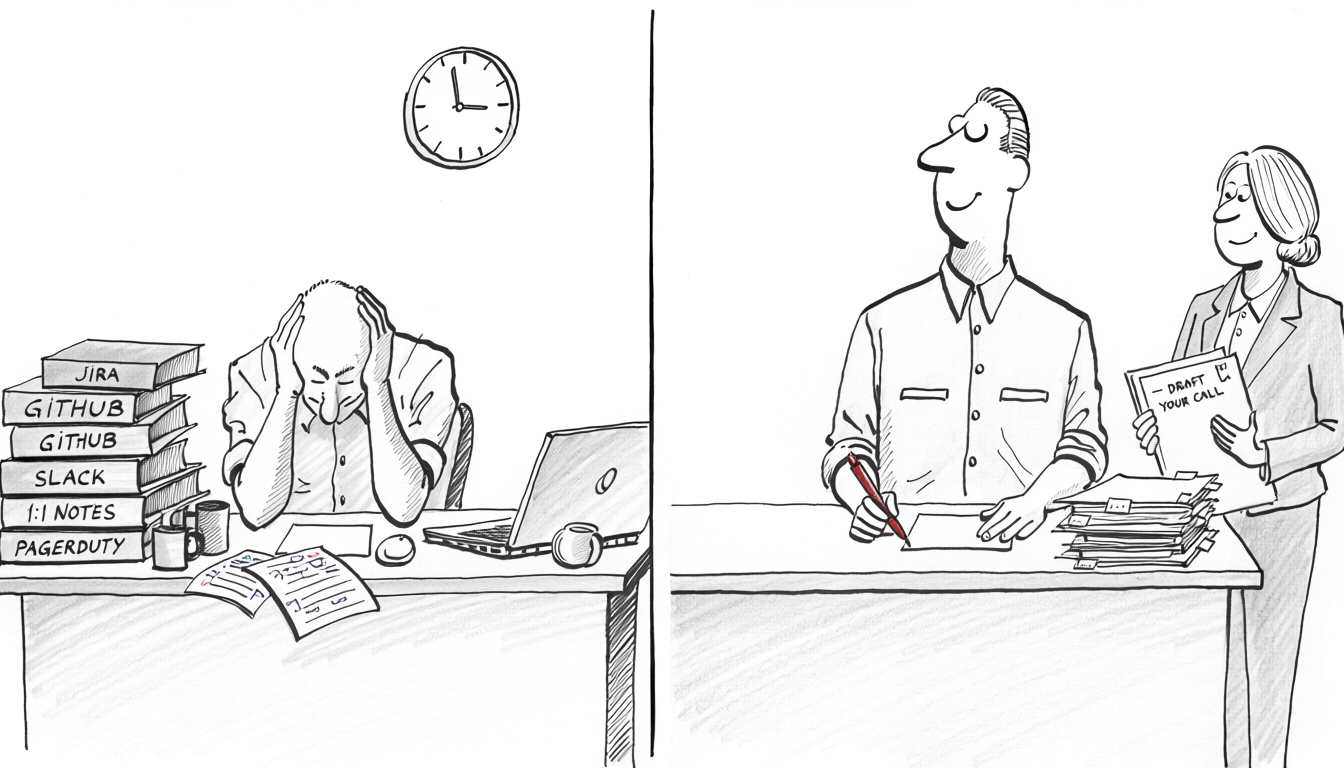

Will Larson’s writeup of a typical performance cycle at scale puts calibration at three to five hours per round per participating manager, across three rounds (sub-organization, organization, executive). Calibration is the part where managers argue ratings against each other under a budget. It is not the part where the review gets written. The writing happens earlier: pulling six months of context out of 1:1 notes, Jira, GitHub, Slack, design docs, and PagerDuty into a coherent per-report narrative, which runs one to three hours per direct report and is mostly gathering and cross-referencing rather than deciding. A manager with eight reports spends most of a working week on the full cycle, and the judgment-call portion (rating, promotion recommendation, calibration argument) is a fraction of that hour count.

The standard responses (raise headcount, adopt a better template, push self-reviews onto reports) each redistribute the gathering cost without reducing it. The architectural fix is to script the gathering and use a narrow LLM call for the synthesis. The job is bounded, the inputs are structured, the output is a draft a human will edit. The same work pattern holds up across the agent-skeptical posts on this blog (alert triage, index management, LLM-driven SQL): scripted gathering, narrow LLM synthesis, human keeps every decision. The review is a particularly clean instance because the decision is human-only by definition. No agent can fire someone. No agent can sign off on a promotion. The agent’s job is the part nobody wants to do.

The five things the agent earns its cost on

The first thing it earns: the team starts using Jira. Work the team doesn’t track is work the team can’t talk about, and the review version of that argument is sharper than the standup version. If a project isn’t in Jira, it might not be in the review, because the manager can’t hold six months of work for eight people in their head and the agent only sees what’s tracked. People notice when the first draft of their review omits the project they worked on for half the quarter. The conversation that follows is “where did that work go in the system, and how do we make sure it’s there next time?” Tracking hygiene improves automatically as a side effect of the review process, without needing its own mandate.

The second thing it earns: completeness. The thing that always slips in manual reviews is the project from month two of the quarter that everyone has stopped thinking about. The migration that finished. The incident that got handled cleanly. The cross-team contribution that left a paper trail in someone else’s repo. The release-management work the IC quietly took over when the previous owner left. These show up in the corpus the agent reads. They don’t show up in the manager’s working memory in March. Reviews written from the corpus capture them; reviews written from memory don’t, and the gap shows up cumulatively over years as the engineers whose work is visible to the manager move ahead of the ones whose work isn’t.

The third thing it earns: estimation discipline becomes a review signal. The blog’s Point After the Fact post argues for re-pointing tickets after they close, so the team accumulates real data on where time actually goes. That data is review-grade material. Not “did they hit their point targets” (a metric the team will game within a quarter of being told about it) but how complete their record is and how well their post-hoc estimates correlate with what shipped. A report who consistently re-points after the fact, even when the numbers move against them, is demonstrating a discipline the agent can surface directly. A report who doesn’t is harder to evaluate at all, which is itself information.
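One way to read “how complete their record is” is as re-pointing coverage: the share of closed tickets that carry a post-hoc estimate. The sketch below assumes a hypothetical ticket export with `closed`, `initial_points`, and `final_points` fields; none of those names come from a real tracker schema. The drift figure is descriptive, not a score.

```python
def repoint_summary(tickets):
    """Summarize how consistently closed tickets were re-pointed.

    Each ticket is a dict with hypothetical keys:
      closed (bool), initial_points (int), final_points (int or None).
    """
    closed = [t for t in tickets if t["closed"]]
    repointed = [t for t in closed if t["final_points"] is not None]
    # Positive drift means the work turned out larger than first estimated.
    drift = [t["final_points"] - t["initial_points"] for t in repointed]
    return {
        "closed": len(closed),
        "repointed": len(repointed),
        "coverage": round(len(repointed) / len(closed), 2) if closed else None,
        "mean_drift": round(sum(drift) / len(drift), 2) if drift else None,
    }


example_tickets = [
    {"closed": True, "initial_points": 3, "final_points": 5},
    {"closed": True, "initial_points": 5, "final_points": 4},
    {"closed": True, "initial_points": 2, "final_points": 4},
    {"closed": True, "initial_points": 8, "final_points": None},  # never re-pointed
    {"closed": False, "initial_points": 3, "final_points": None},  # still open
]
summary = repoint_summary(example_tickets)
```

Low coverage says only that the record is thin. It does not say why, and that question stays with the manager.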

The fourth thing it earns: GitHub activity, loosely weighted. The agent pulls PR counts, review participation, commit cadence, and approximate complexity (lines changed, files touched, languages crossed). This is loose data, and any draft that uses it needs to be explicit about that. A staff engineer who unblocks the team on three substantive reviews a week is worth more than one who ships ten unreviewed PRs of their own. The numbers spot patterns; they do not grade. A draft that says “shipped 23 PRs and reviewed 14 from teammates, with review depth averaging 3 substantive comments per PR” is useful. A draft that grades the report on those numbers has overstepped, and the manager should ask the agent to remove the grade and re-present the data.
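A minimal sketch of counting review participation without grading it. The `Review` shape and the word-count threshold for “substantive” are assumptions made for illustration; a real team would choose its own definition, and the data would come from a GitHub export rather than literals.

```python
from dataclasses import dataclass


@dataclass
class Review:
    reviewer: str
    pr_author: str
    comments: list[str]  # bodies of the review comments


# Assumption: a review counts as substantive if any single comment has
# at least MIN_WORDS words. A bare approval or an emoji does not qualify.
MIN_WORDS = 8


def is_substantive(review: Review) -> bool:
    return any(len(c.split()) >= MIN_WORDS for c in review.comments)


def review_summary(person: str, prs_authored: int, reviews: list[Review]) -> dict:
    # Only reviews of other people's PRs count as review participation.
    given = [r for r in reviews if r.reviewer == person and r.pr_author != person]
    substantive = [r for r in given if is_substantive(r)]
    return {
        "person": person,
        "prs_authored": prs_authored,
        "reviews_given": len(given),
        "substantive_reviews": len(substantive),
    }


reviews = [
    Review("ana", "bo", ["LGTM"]),
    Review("ana", "bo", ["This retry loop swallows the timeout error, can we surface it instead?"]),
    Review("bo", "ana", ["👍"]),
]
ana = review_summary("ana", prs_authored=23, reviews=reviews)
```

The function returns counts only. There is deliberately no score field, so nothing downstream can mistake the output for a grade.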

The fifth thing it earns: focus and follow-through patterns. Started-vs-finished ratios. Average time in-flight per ticket. Tickets opened and abandoned. Context switches per week per person. These surface patterns the manager would otherwise have to reconstruct from memory, and they’re patterns memory is bad at. Same caveat as the PR numbers. A high abandonment rate could be a focus problem or it could be an environment dragging the engineer between tickets every two days because nobody else is around to handle interruptions. The data spots the pattern. The manager makes the call about what the pattern means.
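The follow-through numbers are simple to compute once tickets are exported. This is a sketch under assumed field names (`assignee`, `started`, `closed`, `status`) and assumed status values (`done`, `abandoned`, `in_progress`); a real tracker will need its own mapping.

```python
from datetime import date
from statistics import mean

# Hypothetical export; real data would come from the tracker's API.
tickets = [
    {"assignee": "ana", "started": date(2025, 1, 6), "closed": date(2025, 1, 10), "status": "done"},
    {"assignee": "ana", "started": date(2025, 1, 13), "closed": date(2025, 1, 23), "status": "done"},
    {"assignee": "ana", "started": date(2025, 2, 3), "closed": date(2025, 2, 4), "status": "done"},
    {"assignee": "ana", "started": date(2025, 2, 10), "closed": None, "status": "abandoned"},
    {"assignee": "bo", "started": date(2025, 1, 6), "closed": None, "status": "in_progress"},
]


def follow_through(tickets, assignee):
    """Started-vs-finished counts and average days in flight for one person."""
    mine = [t for t in tickets if t["assignee"] == assignee]
    finished = [t for t in mine if t["status"] == "done"]
    abandoned = [t for t in mine if t["status"] == "abandoned"]
    # Time in flight is only defined for tickets that actually closed.
    days = [(t["closed"] - t["started"]).days for t in finished]
    return {
        "started": len(mine),
        "finished": len(finished),
        "abandoned": len(abandoned),
        "finish_ratio": round(len(finished) / len(mine), 2) if mine else None,
        "avg_days_in_flight": round(mean(days), 1) if days else None,
    }


ana_stats = follow_through(tickets, "ana")
```

The output describes the pattern and stops there. Whether one abandoned ticket in four reflects focus or interruptions is not something this function can know.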

The architecture under all five is the same shape: deterministic gathering, narrow LLM synthesis, human decision. The gathering can be done two ways.

The first is MCP. Wire up off-the-shelf Jira, GitHub, Slack, and PagerDuty MCP servers and let the agent query each system directly. Lower setup cost, faster to a first draft, easier to extend when a new system gets added to the team’s stack. The agent decides what to query and how.

The second is scripts. Write Python (or any deterministic language) that hits each API and returns structured data as a CSV or JSON file before the agent ever sees it. Tickets closed per report with dates, points, and labels. PRs opened and reviewed with timestamps, approval counts, and lines changed. Time-in-flight distributions per ticket. Abandonment rates. On-call shifts and incident participation. The output is an artifact the manager can open, sort, and audit independently, and re-running the script reproduces the same numbers exactly.
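What makes the script output auditable is that the same inputs always produce the same file. A minimal sketch, assuming per-report rows have already been fetched; the column names are illustrative. Fixed column order, sorted rows, and a fixed line terminator keep the output byte-identical across runs.

```python
import csv
import io

# Illustrative columns; a real script would add dates, labels, and so on.
COLUMNS = ["report", "tickets_closed", "points", "prs_opened", "prs_reviewed"]


def write_metrics(rows, out):
    """Write rows as CSV with a fixed column order and stable row order."""
    writer = csv.DictWriter(out, fieldnames=COLUMNS, lineterminator="\n")
    writer.writeheader()
    # Sorting removes any dependence on the order the API returned things in.
    for row in sorted(rows, key=lambda r: r["report"]):
        writer.writerow({c: row[c] for c in COLUMNS})


def render(rows):
    buf = io.StringIO()
    write_metrics(rows, buf)
    return buf.getvalue()


rows = [
    {"report": "bo", "tickets_closed": 9, "points": 21, "prs_opened": 11, "prs_reviewed": 30},
    {"report": "ana", "tickets_closed": 12, "points": 34, "prs_opened": 23, "prs_reviewed": 14},
]
csv_text = render(rows)
```

To write a file instead, pass an open file handle to `write_metrics`, opened with `newline=""` as the `csv` module documentation recommends.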

The trade-off is control. MCP is faster to stand up and weaker on verification. Scripts cost more upfront and produce a deterministic audit trail. For review-grade work where the cost of a wrong cited metric is paid by a real person, scripts are usually worth the upfront cost. For the qualitative exploration the agent does later in the same workflow (pull a specific 1:1 note, read one design doc), MCP is fine because verification by re-reading the source is what catches errors anyway. A reasonable middle path is scripts for anything that becomes a quoted number and MCP for anything the agent only needs to read once.

Either way, the agent gets the structured inputs, the report’s name, their role expectations, and the review period, and produces a draft with explicit sections: shipped projects with source links, focus and follow-through patterns with the metrics inline, collaboration evidence with PR-review counts and threads referenced, contradictions surfaced rather than smoothed. Do not ask for ratings. Do not ask for recommendations. The bright line is that the agent assembles and the manager decides, and the prompt enforces it.
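The prompt can carry the bright line itself. Below is a sketch of a prompt builder; the wording, the function name, and the metric keys are all assumptions, not a tested prompt. It only assembles a string and does not call any model API.

```python
import json

# Section list taken from the draft structure described above.
SECTIONS = [
    "Shipped projects, each with a source link",
    "Focus and follow-through patterns, with the metrics inline",
    "Collaboration evidence, with PR-review counts and threads referenced",
    "Contradictions in the evidence, surfaced rather than smoothed",
]


def build_prompt(name, role_expectations, period, metrics):
    """Assemble a synthesis prompt that asks for evidence, never a verdict."""
    lines = [
        f"Draft review evidence for {name} covering {period}.",
        f"Role expectations: {role_expectations}",
        "",
        "Use only the structured data below. Quote numbers exactly as given.",
        "Write these sections, in this order:",
    ]
    lines += [f"{i}. {s}" for i, s in enumerate(SECTIONS, start=1)]
    lines += [
        "",
        "Do not assign a rating. Do not recommend a promotion, raise, or any",
        "other outcome. Present evidence only; the manager decides.",
        "",
        "DATA:",
        # sort_keys keeps the prompt identical across runs on the same data.
        json.dumps(metrics, indent=2, sort_keys=True),
    ]
    return "\n".join(lines)


prompt = build_prompt(
    name="Ana",
    role_expectations="Senior engineer, owns service reliability",
    period="2025-01-01 to 2025-06-30",
    metrics={"prs_opened": 23, "substantive_reviews": 14},
)
```

An instruction in a prompt is a request, not a guarantee, so the output still needs to be checked for ratings that slipped through.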

When the gathering uses scripts, hallucinated numbers become the easiest class of agent error to catch, because the script that produced them is a source of truth the manager can re-run in seconds. A draft claiming “23 PRs and 14 substantive reviews” is one re-run away from being confirmed or rejected. With MCP, the verification surface is weaker: the agent’s report of a query result is what the manager sees, and reproducing the exact same fetch isn’t always trivial. Either way, keep the gathering deterministic where it can be. Keep the synthesis narrow. Don’t let the agent count things you can count for it.
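Checking quoted numbers against the script output can itself be scripted. A rough sketch, assuming the draft uses predictable phrasing for each metric; the patterns and metric keys here are hypothetical and would need to match whatever wording the prompt asks for.

```python
import re

# Hypothetical mapping from phrasing in the draft to a metric key
# in the script output.
CLAIM_PATTERNS = {
    "prs_opened": re.compile(r"(\d+) PRs\b"),
    "substantive_reviews": re.compile(r"(\d+) substantive reviews\b"),
}


def check_claims(draft, metrics):
    """Return (metric, claimed, actual) for every number that disagrees."""
    mismatches = []
    for key, pattern in CLAIM_PATTERNS.items():
        for match in pattern.finditer(draft):
            claimed = int(match.group(1))
            if claimed != metrics[key]:
                mismatches.append((key, claimed, metrics[key]))
    return mismatches


metrics = {"prs_opened": 23, "substantive_reviews": 14}
good = check_claims("Shipped 23 PRs and 14 substantive reviews.", metrics)
bad = check_claims("Shipped 25 PRs and 14 substantive reviews.", metrics)
```

An empty result means only that the numbers the patterns recognized agree with the script. Claims phrased differently pass through unchecked, so this narrows the manual read without replacing it.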

What it can’t be allowed to touch

Every benefit above is contingent on a verification discipline that deserves more attention than the gathering architecture. Frontier LLMs corrupt a measurable fraction of delegated multi-step work, and the rate doesn’t drop with better prompts, more tools, or longer context windows (Corruption Is a Feature). A draft review the agent writes confidently, citing a specific ticket, a specific PR, a specific 1:1 quote, has a meaningful probability of being wrong in any of those specifics. The ticket exists but says something different. The 1:1 line paraphrases what was said into something close-but-not. The PR review that counts as “substantive” was a thumbs-up emoji. The migration the agent attributes to the IC was actually led by their teammate, and the IC was a reviewer.

These errors land in a document that determines whether a real person gets promoted, doesn’t, gets put on a PIP, or doesn’t. The cost of getting this wrong is paid by the report, not the manager, which makes the verification discipline an ethical obligation, not just an operational one.

Two disciplines hold the work together. The first is that every claim in the draft has to be traced to a source the manager opens and reads before the draft becomes a review. Not skimmed. Read. If the draft says “delivered the auth migration in Q1, ten weeks”, the manager opens the ticket, confirms the dates, confirms the scope. If the draft says “needs growth on cross-team collaboration”, the manager opens the threads cited as evidence and forms their own assessment. The agent’s draft is the index into the corpus, not the conclusion about it.

The second discipline is rejecting first drafts. The first generation always reads cleaner than the corpus actually is. Patterns get smoothed. Conflicting signals get harmonized into a coherent narrative that isn’t quite the truth. Re-prompt with the parts that look wrong. Ask the agent to surface contradictions it smoothed over. Ask for the strongest negative case before the draft contains any praise, and then for the strongest positive case in a separate pass. Read both. The first draft is a hypothesis. The third draft, after the manager has read the corpus and contested the obvious narrative, is closer to a review.

No decision belongs to the agent. Not the rating. Not the promotion recommendation. Not the raise. Not the PIP. Not the fire. The agent assembles. The manager decides. A draft that recommends a rating is a draft that has overstepped; reject it and re-prompt for the evidence underneath, without the conclusion.
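A crude lint pass can flag drafts that drift into decisions before the manager reads them. The pattern list below is an assumption and will produce false positives (a quoted ticket title containing “rating”, for example); it is a tripwire for re-prompting, not a classifier.

```python
import re

# Illustrative patterns for decision language; tune for your own review vocabulary.
DECISION_PATTERNS = [
    r"\brating\b",
    r"\b(exceeds|meets|below) expectations\b",
    r"\bpromot\w*",
    r"\brecommend\w*",
    r"\bPIP\b",
    r"\braise\b",
]
COMPILED = [re.compile(p, re.IGNORECASE) for p in DECISION_PATTERNS]


def flag_decisions(draft):
    """Return (line_number, line) for each line containing decision language."""
    flagged = []
    for number, line in enumerate(draft.splitlines(), start=1):
        if any(p.search(line) for p in COMPILED):
            flagged.append((number, line.strip()))
    return flagged


draft = (
    "Shipped the auth migration in Q1.\n"
    "Recommend promotion to senior.\n"
    "Reviewed 14 PRs from teammates."
)
flags = flag_decisions(draft)
```

A flagged line is a cue to reject the draft and ask again for the evidence without the conclusion.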

When this doesn’t apply

The setup is overkill on small teams. A manager with three reports has the full corpus in their head and doesn’t need synthesis. The discipline pays back at six reports and up, and the curve gets sharper above ten. A new manager who doesn’t yet have judgment to verify against is in the most dangerous position, because the agent’s narrative will be the most coherent thing in the room and the temptation to trust it is highest exactly when it shouldn’t be. The agent assembles confidently regardless of how well its draft matches reality. New managers should write a few rounds of reviews manually before adopting this workflow. Orgs without basic systems hygiene (no Jira, scattered PR reviews, no design docs) don’t have the corpus the agent reads, and the synthesis is hollow. And teams where reviews are calibrated heavily on a single visible metric have already decided that the synthesis doesn’t matter; the agent’s draft is decoration in that environment.

The bigger picture

Scullen, Mount, and Goff (2000) found that idiosyncratic rater effects accounted for 62% and 53% of performance-rating variance across two large samples (n=2,350 and n=2,142), more than twice the variance attributable to actual ratee performance. Most of what makes a review is the manager, not the engineer. Scripts and structured corpora don’t eliminate that bias, but they pull the underlying evidence onto a surface the next reviewer in calibration can audit, which changes what gets argued about: the data rather than the manager’s narrative.

The agent doesn’t fix the underlying performance-management problem either. Gallup’s 2024 survey of Fortune 500 CHROs found 2% strongly agree their system inspires employees to improve, and 22% of employees agree the process is fair and transparent. Those numbers have been some version of themselves for as long as anyone has measured. The agent makes the mechanical parts mechanical, which is the most an architecture decision can do here. The hours that buys back get spent on the parts the corpus can’t help with: the conversation with the report about growth, the calibration argument with peer managers, the year-out career planning, the things that take attention and presence rather than data.