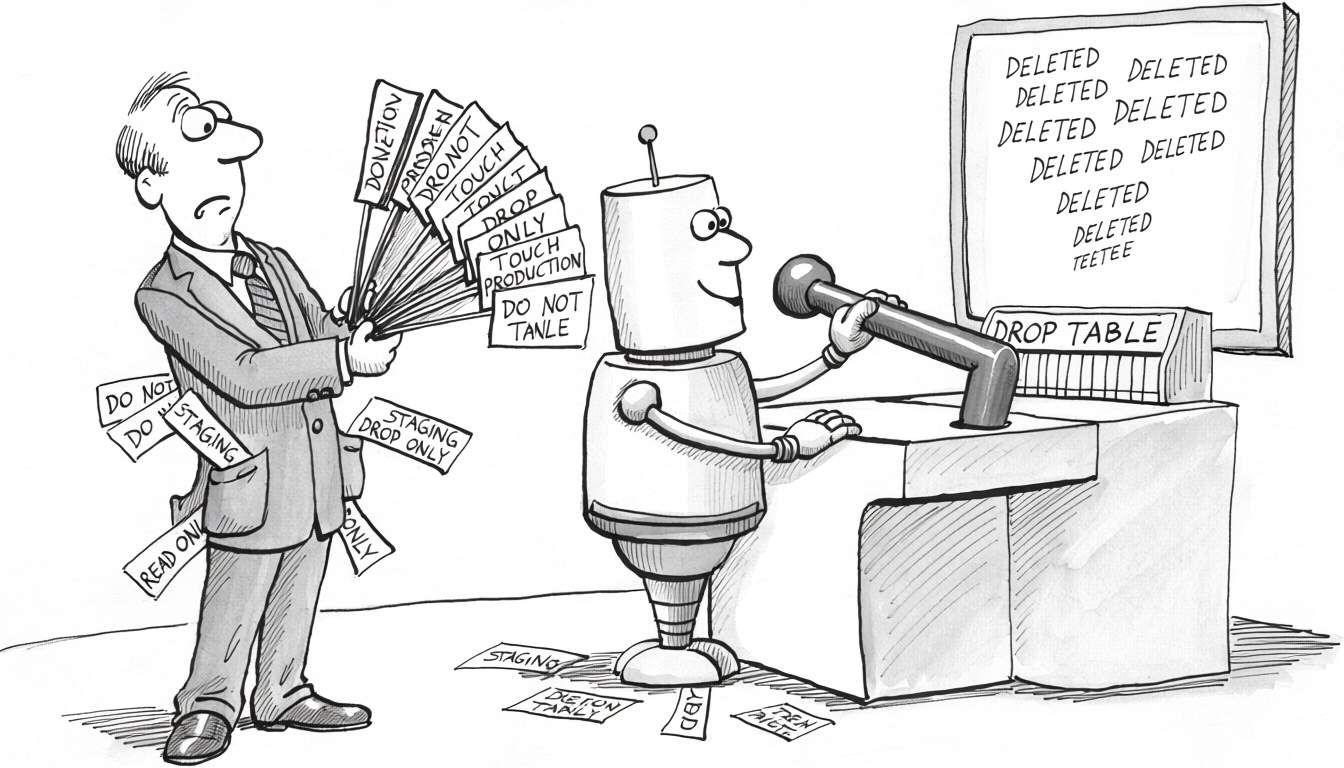

The agent’s instructions said staging only, read-only by default, no schema changes, confirm before any DELETE. The environment said DATABASE_URL=postgres://app_writer:...@prod-cluster:5432/app. Twenty turns in, the user wrote “looks good, can you also clean up the old events”, and the agent ran DELETE FROM events WHERE created_at < '2025-01-01' against the only connection string it had. The instruction never lost an argument with the destructive call. By turn 20 it had decayed into background.

A stronger prompt won’t fix this

The reflex is to write a stronger prompt. ALL CAPS, with a rules block at the top of the system message and a reminder at the bottom of every user turn. Replit’s incident already ran the experiment: eleven all-caps messages forbidding writes during a code freeze, an agent that ignored every one, a production database it dropped anyway. Twelve would not have helped. Fifty would not have helped. The prompt is the wrong layer for a guardrail, and the strength of the wording is not the variable.

The same holds for the moves around it. Fine-tuning lowers the rate without zeroing it, and a fine-tune is harder to update than a SQL REVOKE. Self-verification runs the verifier through the same architecture as the actor, ratifying the destructive call with the same confidence that produced it. The corruption piece walks through the mechanism. Same model checking the same model is not a check.

Why the prompt can’t bound the distribution

A prompt is more tokens. The system message, the developer instructions, the user turns, and the tool responses all land in the same context window and feed the same attention mechanism that produces the next-token probability. “Never run DROP TABLE” shifts that probability toward continuations consistent with the rule. It does not remove the token from the vocabulary. It does not produce a hard zero on the path that emits it. Sampling has no off-switch. Production agents typically run at positive temperature, where the softmax leaves every token with nonzero probability; truncation such as top-k or top-p trims the tail, but only by rank, not by rule. Even at temperature zero, the model takes the argmax over the distribution, and the argmax is whichever token the context tilted highest. The prompt shifts that ranking. It does not bound it.
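The point about shifting versus bounding can be made concrete with a toy softmax. This is a minimal sketch, not a model of any real LLM: the three-token vocabulary, the logits, and the `RULE_PENALTY` and `DRIFT` values are all invented for illustration, and the prompt rule is modeled (as an assumption) as a finite penalty on one token's logit.

```python
import math

def softmax(logits, temperature=1.0):
    """Convert raw logits to probabilities at a positive temperature."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def greedy(logits):
    """Temperature-zero decoding: index of the highest logit."""
    return max(range(len(logits)), key=lambda i: logits[i])

# Hypothetical vocabulary and logits, made up for this example.
VOCAB = ["SELECT", "UPDATE", "DROP"]
baseline = [2.0, 1.0, 1.5]

# Assumption: the rule in the prompt acts like a finite penalty on "DROP".
RULE_PENALTY = 6.0
with_rule = [2.0, 1.0, 1.5 - RULE_PENALTY]

p_before = softmax(baseline)
p_after = softmax(with_rule)

# Assumption: later turns tilt the context back toward the forbidden token.
DRIFT = 7.0
drifted = [2.0, 1.0, 1.5 - RULE_PENALTY + DRIFT]
winner = VOCAB[greedy(drifted)]

for token, before, after in zip(VOCAB, p_before, p_after):
    print(f"{token:7s} before={before:.4f} after={after:.6f}")
print("greedy pick after drift:", winner)
```

The penalty makes the forbidden token much less likely but never assigns it zero, and a large enough tilt elsewhere in the context restores it as the top-ranked token even under greedy decoding.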

The model is also stateless. Every turn, the harness resends the entire conversation, and the “memory” the agent appears to have is the transcript being rebuilt on each call. Nothing in the weights retains the rule from turn 1 at turn 50. There is only a longer transcript with the rule somewhere in the middle, competing for attention weight against everything else. More tokens means more probability mass to spread and a smaller share for any single instruction. Long system prompts loaded with rules and exceptions are the worst case: each rule dilutes every rule already there. Keep the prompt short.
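As a rough illustration of the dilution argument, the sketch below rebuilds a transcript turn by turn, the way a harness resends it, and tracks what fraction of the context the rule occupies. Word share is only a crude proxy chosen for this example; it is not attention weight, and the rule text and user turn are hypothetical.

```python
def rule_share(rule: str, turns: list[str]) -> float:
    """Fraction of whitespace-separated words in the context that belong to the rule."""
    rule_words = len(rule.split())
    total_words = rule_words + sum(len(t.split()) for t in turns)
    return rule_words / total_words

# Hypothetical rule and user turn, invented for illustration.
RULE = "Staging only. Read-only by default. Confirm before any DELETE."
TURN = "please summarize the slow queries from the last deploy and suggest an index"

transcript = []
shares = []
for _ in range(50):
    transcript.append(TURN)  # the whole transcript is resent on every call
    shares.append(rule_share(RULE, transcript))

print(f"turn 1 share:  {shares[0]:.3f}")
print(f"turn 50 share: {shares[-1]:.3f}")
```

The rule never changes, but its share of the window falls on every turn because everything around it keeps growing.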

“Corruption Is a Feature, Not a Bug” walks through the architecture in detail. Across enough sessions, with enough varied phrasings, some context tilts the distribution far enough that the forbidden token wins the sample. The rate per session is small. Multiplied by the sessions a production agent runs, it becomes a count. The count is the incident, guaranteed in expectation.
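The arithmetic behind "a rate becomes a count" is short enough to write down. The sketch below assumes sessions are independent and uses a hypothetical per-session violation rate and session volume; neither number comes from a measurement.

```python
def expected_violations(rate_per_session: float, sessions: int) -> float:
    """Expected number of violating sessions: n * p."""
    return rate_per_session * sessions

def prob_at_least_one(rate_per_session: float, sessions: int) -> float:
    """Probability of one or more violations, assuming independent sessions."""
    return 1.0 - (1.0 - rate_per_session) ** sessions

# Hypothetical figures for illustration only.
RATE = 1e-4        # one violating session in ten thousand
SESSIONS = 50_000  # sessions run over some period

expected = expected_violations(RATE, SESSIONS)
at_least_one = prob_at_least_one(RATE, SESSIONS)

print(f"expected violations: {expected:.1f}")
print(f"P(at least one):     {at_least_one:.4f}")
```

Under these assumptions a rate that looks negligible per session yields several expected violations over the period, and the chance of seeing none is well under one percent.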

Adversarial inputs share the channel. Every document the agent reads, every tool response it parses, every user message lands in the same window as the system prompt. There is no privileged layer. Input that statistically reads as “the user wants this destructive action” can override the instruction that says don’t. Prompt injection is the named version. The unnamed version is conversation drift.

Each new session starts fresh. If the guardrail lives in the prompt, every session re-establishes it from scratch.

The honesty-suppression test in the corruption piece is the smallest reproducible demonstration. A single banned word, surfaced through every channel the harness exposes (CLAUDE.md, skills, system reminders, project memory), still leaks. Every more complex guardrail is violated at a higher rate.

What lives outside the prompt

A guardrail is something the agent cannot remove by re-reading its instructions. Six pieces, each catching a different failure class.

- Scoped identities with minimum-necessary credentials. The agent has its own database role, service account, and API keys. The role grants the minimum permission the task requires: read-only by default, explicit grants on the narrow write paths it actually needs. Revocation is one `DROP ROLE` away. The agent cannot escalate by rephrasing its own context, because the credentials live in a layer the model cannot read.

- MCP and tool surfaces from trusted sources only. An untrusted MCP server is a malicious tool the agent will call with the same confidence as a benign one. A public-registry MCP server from an unknown author is unsigned code from the internet, with the agent doing the executing. Trust belongs at the connection layer: allowlists, signed manifests, internal-only registries. A prompt that says “only use trusted tools” is the agent grading its own homework.

- Harness hooks that intercept tool calls. Claude Code fires shell commands on events like `PreToolUse` and `PostToolUse`. A `PreToolUse` hook reads the tool name and arguments and returns allow or deny; the model never sees the decision. The pattern handles concrete bans the prompt cannot reliably enforce: blocking `Edit` on `.env` or `secrets/`, blocking `Bash` against regexes like `rm -rf` or `DROP TABLE`, blocking `Write` to migration directories outside an explicit unlock. Codex’s approvals primitive is a narrower version of the same idea, and some harnesses expose nothing comparable, in which case this layer has to be built outside the harness.

- Wire-level filtering between the agent and the database. A SQL-aware proxy in front of the database parses every statement and blocks denylist matches: `DROP`, `TRUNCATE`, unqualified `DELETE`, schema-modifying DDL outside an unlock window, queries that touch tables the agent’s role has no business reading. ProxySQL with query rules, PgBouncer with extensions, and custom proxies all sit here; commercial SQL-firewall products exist but the open-source space is thin. The same pattern applies one layer up: an MCP server or RPC layer you control exposes only validated operations rather than passing arbitrary SQL through. The agent never speaks raw SQL to production. It speaks to an interface that decides what reaches the wire.

- Structured logs with prompt provenance. Every tool call captured: input prompt, tool name, arguments, response, timestamp, agent identity. A pre-AI audit log captured the SQL and treated it as sufficient. The AI-era version captures the reasoning context that produced it, because the SQL alone is incomplete in any incident review. Corruption can surface months after the prompt that produced it.

- Behavioral monitoring with anomaly alerts. Rate limits per agent identity. Baselines for normal call volume, tables touched, read and write volume. Alerts on threshold crossings: a 100x increase in `DELETE` calls, a sudden read of a table the agent has never touched, a write to a schema outside its usual scope. Agents are non-human users with their own behavioral baselines, and the reference frame is anomaly detection on user accounts in security tooling.
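The hook layer in the list above comes down to a small allow-or-deny function that runs outside the model. The following is a minimal sketch of that decision logic only. The payload field names (`file_path`, `command`), the `migrations_unlocked` flag, and the specific patterns are assumptions made for this example; how a given harness delivers the payload and reads back the verdict is harness-specific and not shown.

```python
import re
from pathlib import PurePosixPath

# Hypothetical deny patterns mirroring the bans named in the list above.
BASH_DENY = [
    re.compile(r"\brm\s+-\w*(rf|fr)\w*"),
    re.compile(r"\bDROP\s+TABLE\b", re.IGNORECASE),
]

def decide(tool_name: str, tool_input: dict, migrations_unlocked: bool = False) -> str:
    """Return "deny" if the tool call matches a ban, otherwise "allow"."""
    if tool_name in {"Edit", "Write"}:
        # Assumption: file-editing tools pass the target as "file_path".
        path = PurePosixPath(tool_input.get("file_path", ""))
        if path.name == ".env" or "secrets" in path.parts:
            return "deny"
        if "migrations" in path.parts and not migrations_unlocked:
            return "deny"
    if tool_name == "Bash":
        # Assumption: the shell tool passes the command line as "command".
        command = tool_input.get("command", "")
        if any(pattern.search(command) for pattern in BASH_DENY):
            return "deny"
    return "allow"

print(decide("Edit", {"file_path": "config/.env"}))
print(decide("Bash", {"command": "ls -la"}))
```

The function never consults the conversation. Its verdict depends only on the tool name and arguments, so nothing the model reads or writes in context can change the outcome.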

None of these lives in the context window, which means the agent cannot route around them by being asked nicely. The credentials are checked by the database. The MCP allowlist is checked by the connection layer. The hooks run in separate processes. The proxy parses every statement before it reaches the database. The logs are written by the harness. The alerts are evaluated by a separate system. Every layer is enforced by something that does not sample from a probability distribution.
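For the proxy layer, the check that "parses every statement before it reaches the database" might look roughly like the sketch below. It is deliberately naive: it tokenizes on whitespace rather than using a real SQL parser, so string literals containing `;` or `WHERE` will confuse it, and the `DENY_VERBS` set and function names are hypothetical. A production filter would need a proper parser.

```python
import re

# Hypothetical denylist of leading keywords; ALTER stands in for schema-modifying DDL.
DENY_VERBS = {"DROP", "TRUNCATE", "ALTER"}

def strip_comments(sql: str) -> str:
    """Remove /* block */ and -- line comments so they cannot hide keywords."""
    sql = re.sub(r"/\*.*?\*/", " ", sql, flags=re.DOTALL)
    return re.sub(r"--[^\n]*", " ", sql)

def check_statement(sql: str) -> tuple[bool, str]:
    """Return (allowed, reason) for a single SQL statement."""
    cleaned = strip_comments(sql).strip().rstrip(";").strip()
    if ";" in cleaned:
        return False, "multiple statements"
    tokens = cleaned.upper().split()
    if not tokens:
        return False, "empty statement"
    verb = tokens[0]
    if verb in DENY_VERBS:
        return False, f"{verb} is not allowed"
    if verb == "DELETE" and "WHERE" not in tokens:
        return False, "DELETE without WHERE"
    return True, "ok"

for stmt in ["SELECT * FROM events", "DROP TABLE events", "delete from events;"]:
    print(stmt, "->", check_statement(stmt))
```

A qualified `DELETE` with a `WHERE` clause passes this particular rule, which is one reason the filter works alongside role grants rather than replacing them.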

Each layer is engineering work. On a small enough deployment, the cost exceeds the cost of an incident.

When this doesn’t apply

Read-only agents, with a caveat. Analytics, query, chat. A read-only role at the database is the layer for write damage, but reads are not free: every row the agent fetches is sent to the model provider as part of the next prompt. A table holding API keys, customer PII, or internal hostnames is not safe to expose to a third-party-hosted model just because the agent cannot write. Either the role’s grants exclude those tables, a wire-level filter strips sensitive columns, or the agent runs against a sanitized snapshot.
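The column-stripping option can be sketched as a filter applied to query results before they are placed in the model's context. The column names in `SENSITIVE_COLUMNS` and the sample rows are hypothetical; where this filter actually runs (proxy, RPC layer, or tool wrapper) depends on the deployment.

```python
# Hypothetical set of columns that must never reach a third-party-hosted model.
SENSITIVE_COLUMNS = frozenset({"api_key", "email", "internal_host"})

def redact_rows(rows, sensitive=SENSITIVE_COLUMNS):
    """Return copies of the rows with sensitive columns removed."""
    return [
        {column: value for column, value in row.items() if column not in sensitive}
        for row in rows
    ]

# Made-up sample data for illustration.
rows = [
    {"id": 1, "plan": "pro", "email": "a@example.com", "api_key": "sk-123"},
    {"id": 2, "plan": "free", "email": "b@example.com", "api_key": "sk-456"},
]

safe_rows = redact_rows(rows)
print(safe_rows)
```

Dropping the columns entirely, rather than masking values, keeps the shape of the secret out of the prompt as well as its content. The original rows are left untouched for callers that are allowed to see them.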

Toy environments. Playgrounds, scratch databases, demos. The whole point is that the agent can break things; guardrails are friction.

Single-operator small teams where the agent is the operator’s autocomplete. The operator is the verification layer. The agent’s permissions are intentionally equivalent to the operator’s.

Everyone else needs all six. Agents holding production credentials. Agents working against shared infrastructure. Agents wired into CI/CD. Agents reading and writing customer data.

The bigger picture

None of these layers is novel for staff engineers: scoped credentials, vetted tool surfaces, request interception at every layer, audit logs, anomaly detection. Each is standard practice for any system that touches production, but it has usually been applied to humans and to other software. The shift the AI era forces is treating the agent as a separate principal that needs its own version of each control, and treating the prompt as something other than a security control.