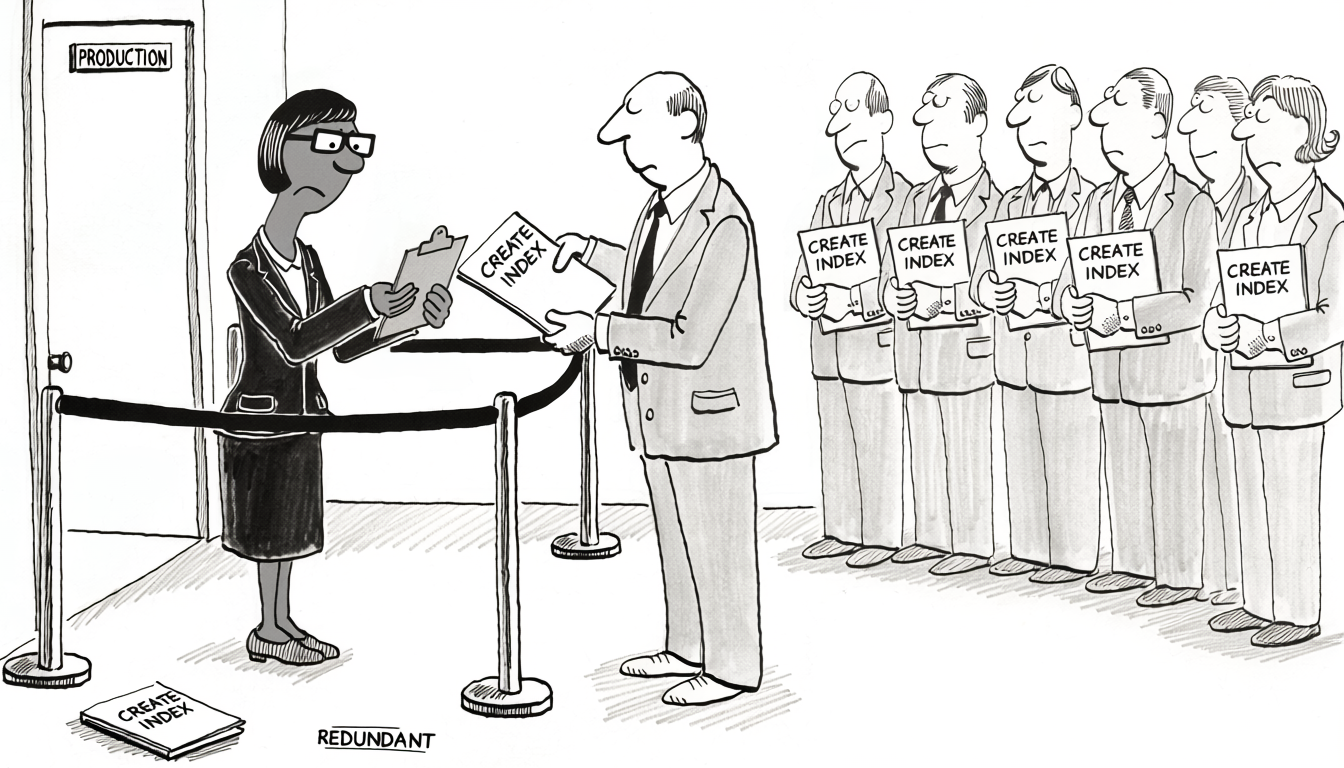

The dashboard is slow. An engineer pastes the query into the assistant and gets back a CREATE INDEX on three columns from the WHERE clause. The query drops from 800 ms to 12 ms. Ticket closed. Three weeks later a different engineer files a similar ticket against a sibling query on the same table. Same flow, different index, same satisfying speedup. Six months and a hundred sessions later, the orders table carries fourteen secondary indexes. pg_stat_user_indexes reports idx_scan = 0 on eight of them. Three are strict prefixes of larger composite indexes that already cover the same predicate. The table’s index volume now exceeds its heap volume. p99 INSERT latency has drifted from 9 ms to 31 ms over the same period, and no single deployment is responsible. Nobody added more than one index. Everyone added one.

The obvious response is “the model is bad at this, don’t let it touch indexes.” That’s half right. Any LLM is bound by the corruption floor - any proposal can quietly miss a constraint and ship a plausible-looking wrong answer, which is exactly what happens above when prefix-redundant indexes get created because the model can’t see the existing list. The model is the wrong variable to focus on. What does fix the loop is two pieces of work the SME owns: surface the context the model needs to make a grounded proposal, and build the guardrails that catch the residual errors before they ship. With both, AI does the bookkeeping a careful human would do, faster. With neither, the dashboard scenario above is the steady-state behavior.

Why the catalog isn’t enough

Indexes are a workload property. The catalog is a schema property. The mismatch is what every AI-driven index mistake comes from when nothing has been built to bridge it.

A schema describes columns, types, and constraints. It says nothing about how the table is read, in what proportions, with what selectivity, or how often each predicate fires under production load. The two pieces of evidence that matter most for any index decision live entirely outside the catalog: the workload itself (slow-query log, pg_stat_statements, the application’s actual query mix) and the planner’s recorded behavior (pg_stat_user_indexes, pg_stat_user_tables). An assistant reading the catalog sees neither. It sees the schema, the one query in the prompt, and a vague sense from training data of what indexes “tend to” exist on tables that look like this one.

That gap explains the failure modes. Without the existing index list, the assistant proposes (customer_id, created_at) when (customer_id, status, created_at) already exists and serves the same predicate as a left-prefix match. Without selectivity statistics, the assistant orders composite columns by the order they appeared in the WHERE clause, producing indexes whose leading column has 4 distinct values across 50M rows. Without the write/read ratio, every proposal is implicitly priced as free on the write path.

Each session also starts fresh. There’s no continuity between the assistant that proposed idx_orders_status_created last quarter and the one being asked the same question today. A reviewer six months ago tried that exact index, found the planner ignored it because of correlated columns, and removed it. The next session has no record of any of that and proposes it again.

The lifecycle is asymmetric. AI is asked to make slow queries faster - a question that resolves with a CREATE. AI is rarely asked to make the index set smaller, because nobody files a ticket for “we have too many indexes” until something is on fire. Every interaction nudges the count up; nothing in the loop nudges it back down.

All of these gaps have the same shape: information that exists somewhere outside the catalog, and that the assistant has no path to unless something puts it there.

What every new index actually costs

The default mental model is “an index makes reads faster, what’s the harm.” The harm is real and shows up across every layer the database touches.

Every INSERT, every UPDATE on an indexed column, and every DELETE updates every relevant index. A row written to a table with nine indexes is nine extra B-tree descents, nine page pins, nine potential page splits, and nine WAL records. The per-row write cost grows roughly linearly with the index count, and an UPDATE that migrates the row touches every index when HOT can’t apply.

WAL volume is what replicas consume, so the same cost replays on every standby and shows up as replication lag under load. A write-heavy workload with a redundant index set can saturate the replication channel before it saturates the primary’s local disk, and the failure mode reads as “the replicas are falling behind” rather than “we have too many indexes.”

Indexes occupy buffer-pool pages that would otherwise hold hot heap data. A table with twice the index volume has roughly half the cache headroom for the heap. Backup size, restore time, and vacuum I/O all scale with total index volume, not heap volume. On most production systems, index volume already exceeds heap volume.

There’s also a hidden second-order effect that the local optimization framing misses. Index choice changes the planner’s decisions for other queries. An index that helps query A by a measured 50 ms can shift the plan for query B onto a worse path costing 500 ms on a code path nobody’s currently watching. The session adding index A has no visibility into this. The regression surfaces a week later as “query B got slower,” the on-call engineer reaches for the assistant, and another index gets proposed for query B. The cycle repeats.

Every new index is a permanent write tax paid on every transaction, in exchange for a read benefit on a subset of queries. The math only works when the read benefit is real and large enough to matter, the index is actually used by the planner under production statistics, and no existing index could have served the workload through extension or column reordering. Establishing those three points is exactly what the system around the assistant exists to do.

Most slow queries aren’t index problems

Before the system is reached for, the framing the assistant defaults to is “slow query → CREATE INDEX.” That collapses a much larger decision tree into the move with the highest permanent cost. Four cheaper interventions usually exist, and at least one of them resolves the slowness without adding anything to the catalog.

The query itself can be wrong. A predicate wrapped in a function (WHERE LOWER(email) = ?, WHERE DATE(created_at) = ?) is non-sargable and won’t use any regular index, so adding one accomplishes nothing. The fix is rewriting the predicate or fixing the column’s collation. Non-SARGable predicates covers the catalogue. The same shape applies to implicit type casts on join columns, OR predicates that defeat composite indexes, and OFFSET-based pagination that gets quadratically slower as the offset grows.

The application can change access pattern. List views that paginate with LIMIT/OFFSET past page 50 belong on keyset pagination, where the client passes the last seen (created_at, id) tuple and the query becomes a sargable range scan against an existing index. Sorting that the database is doing on a non-indexed column for a result set the client only ever reads twenty rows of can move client-side. Aggregations that fire on every page load can be cached or pre-computed by the application, removing the read pressure entirely rather than indexing around it. The pattern across all three: the slowness is a property of how the application is asking, not of what the database has indexed.

The statistics can be stale. The planner can have the right index already and refuse to use it because pg_stats thinks the predicate matches 80% of the table when it actually matches 0.1%. ANALYZE is the first move on any “the planner won’t use the index” complaint, and on correlated columns the fix is CREATE STATISTICS (Postgres) or extended histograms (MySQL), not another index.

The schema can be the actual problem. A JSON column the workload filters on every read is paying the JSON-extract cost on every row no matter what indexes are added on the side; the durable fix is promoting the queried keys to typed or generated columns. A VARCHAR column carrying numeric IDs forces an implicit cast on every lookup that no index can rescue. A polymorphic resource_id column whose target depends on a sibling discriminator can’t be indexed in a way the planner uses for the conditional join the application actually wants.

The assistant’s default skips all four because the catalog doesn’t surface any of them. A model prompted with the diagnostic ladder explicitly will work through it; a model prompted with “this query is slow, what should we do” will reach for the index. The difference is whether the prompt was constructed by a system that knows the ladder exists.

What the SME builds: context

The first half of the SME’s work is the context system - what the model sees in the prompt, and what persists between sessions. Five surfaces, each closing a specific gap the catalog leaves open.

Each index has a documented purpose. PostgreSQL supports COMMENT ON INDEX; MySQL supports an INDEX ... COMMENT clause on creation. Use either, and put a one-line description of which query the index serves and why the column order is what it is. Naming conventions carry the same load - idx_orders_dashboard_list_v2 is more legible than idx_orders_status_created_customer. Both surfaces live in information_schema, so any tool reading the catalog picks them up.

History is persisted somewhere queryable. A migration log, a docs/indexes/<table>.md file, or a comment block on the table itself, recording what was tried and dropped. “We tried (status, created_at) in 2025-Q3, the planner ignored it because of correlated columns, removed in migration 0142” is the cheapest way to keep the next session - any session, six months from now - from proposing the same dead end.

Workload evidence goes into the prompt. A slow-query log entry, an EXPLAIN ANALYZE against representative data, the current pg_stat_statements count for the query, and a snapshot of pg_stat_user_indexes for the table. The artifacts ground the proposal in real workload rather than a hypothetical, and they’re exactly the evidence the model can use if it’s handed in.

The existing-index dump goes into the prompt. Before any proposal, dump the current indexes on the table and the indexes the planner has been using for nearby queries. The dump catches redundancy (proposed index is a left-prefix of an existing composite) and supersession (the existing composite would serve the new query if its column order were tweaked or an INCLUDE clause were added). A model handed the dump routinely catches this; a model not handed it routinely misses it.

Hypothetical indexes go first. PostgreSQL’s HypoPG creates a fake index, the planner costs it as if it existed, and EXPLAIN reports whether it would be used. The cost is zero, and the signal is whether the proposed index would actually change the plan under current statistics. MySQL has no direct equivalent; the discipline there is to validate the proposal against a recent production snapshot before merging.

What the SME builds: guardrails

The context system gets the model to a grounded proposal. The guardrails catch what the model still gets wrong, the same way tests on database code catch regressions a thoughtful human can produce.

Performance regression tests on hot queries. For each query the team cares about, write a test that runs EXPLAIN and asserts the plan uses the expected index, or stays under a row-count budget, or doesn’t fall back to a sequential scan. Run on every migration. The test catches “AI added an index that shifted the planner onto a worse path for query B” - a class of failure that’s invisible at code review time.

Catalog-redundancy linter. Block CI when a migration adds an index that’s a strict left-prefix of an existing composite, or a single-column index that duplicates a leading column of one. The check is mechanical, the rule fits in a small SQL query against pg_index, and it catches the most common AI failure mode without any human in the loop.

Drop-safety check. Before any DROP INDEX lands, the check confirms idx_scan has been zero for N days and the index’s comment doesn’t flag it as kept for a known non-daily workload. The check fails loud and the migration doesn’t run. This is what the comment-on-index discipline above pays back: the comment is the data the check reads.

Lock-budget guards. Block DDL that would take ACCESS EXCLUSIVE on tables tagged as hot, unless the migration uses CONCURRENTLY (Postgres) or the equivalent online algorithm (MySQL). Catches “AI proposed CREATE INDEX without CONCURRENTLY on a 500M-row table” before it reaches production.

Continuous index-health monitoring. Workloads shift constantly - queries get removed from the application, access patterns change, table sizes grow past the point where a once-useful index stops mattering, a deploy reroutes the planner to a different index. None of those surface in the catalog. A long-running monitor watches pg_stat_user_indexes, pg_stat_statements, and write-path latency, and fires when a previously-hot index’s scan count flatlines, when its write-cost-to-read-benefit ratio crosses a threshold, or when the planner walks away from an index that hot queries used to depend on. Each is a separate alert. The corruption-floor failure that survives every other check usually shows up here first, as a metric change.

When a monitor fires, the alert is a context-gathering job the model is well-suited for. The LLM pulls the index’s comment, the migration history, the documented purpose, recent pg_stat_statements data, and any related queries from across the catalog, and produces a summary - what the index was meant to serve, what the data shows about its current usage, and a proposed disposition. The SME reads the summary and makes the call. The drop-safety check above is the floor underneath the call: even if the SME approves the drop, the migration doesn’t run if the comment flags a known non-daily workload.

The honest trade-off is that this isn’t free. Building the context surface and the guardrails up front is real work, and on a small or short-lived database it’s overkill. The work pays back when the system is large enough that no human reliably has the whole picture, the workload changes faster than any one person can track, and AI is being used in the loop. At that scale, every component above is cheaper than the failure it prevents.

When the discipline isn’t worth the friction

The system earns its cost on production OLTP databases with multiple writers, sustained traffic, and a year or more of accumulated drift ahead. It’s overkill in three places.

OLAP and columnar workloads work differently - ClickHouse, DuckDB, and BigQuery don’t carry the same per-row write tax, and the article’s mental model doesn’t transfer. Very small tables don’t repay the discipline either; a 5,000-row admin table with a dozen lookup indexes is using a few megabytes of cache and adding microseconds to writes that aren’t on any hot path. Single-writer workloads with a small, enumerable query set are the third case: a reporting database serving twenty known queries from one ingestion job has an index set that can be designed up front and reviewed by hand. The system pays for itself when the query mix is large enough that no human reliably has the whole picture.

The bigger picture

The recurring pattern across self-documenting schemas, foreign keys, and column comments is that the work of making AI useful on a production database is the same work that makes the database legible to any reader. Indexes are the same shape one level up. An index whose comment explains its purpose, whose history is recorded, and whose usage shows up in a regression test that runs on every migration is an index a human or an assistant can reason about safely. An index named idx_orders_status_created_customer_3 with no comment, no recorded history, and no test asserting which query depends on it is an index neither can reason about, and the failure mode is the same in both cases.

The SME’s role in an AI-assisted database is the work AI doesn’t do: build the context surface, and build the guardrails that catch the corruption-floor errors the model still produces against the best context. The model proposes. The system the SME built is what makes those proposals safe to ship.